Ever spent hours analyzing Google search results and ended up more frustrated and confused than before?

Python hasn’t.

In this article, we’ll explore why Python is an ideal choice for Google search analysis and how it simplifies and automates an otherwise time-consuming task.

We’ll also perform an SEO analysis in Python from start to finish. And provide code for you to copy and use.

But first, some background.

Why Use Python for Google Search Analysis

Python is known as a versatile, easy-to-learn programming language. And it really shines at working with Google search data.

Why?

Here are a few key reasons that point to Python as a top choice for scraping and analyzing Google search results:

Python Is Easy to Read and Use

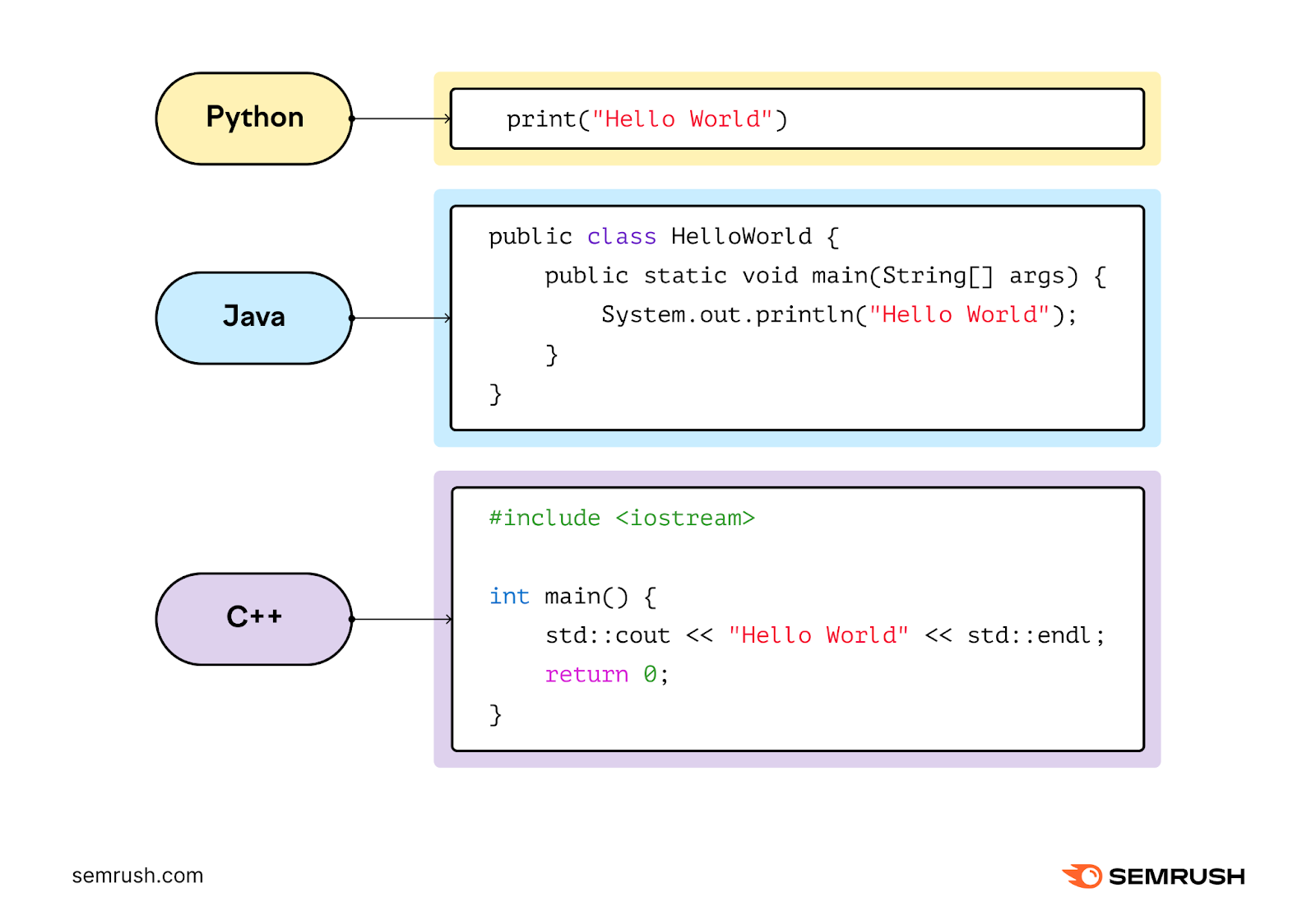

Python is designed with simplicity in mind. So you can focus on analyzing Google search results instead of getting tangled up in complicated coding syntax.

It follows an easy-to-grasp syntax and style. Which allows developers to write fewer lines of code than many other languages.

Python Has Well-Equipped Libraries

A Python library is a reusable chunk of code created by developers that you can reference in your scripts to provide extra functionality without having to write it from scratch.

And Python now has a wealth of libraries like:

- Googlesearch, Requests, and Beautiful Soup for web scraping

- Pandas and Matplotlib for data analysis and visualization

These libraries are powerful tools that make scraping and analyzing data from Google searches efficient.

Python Offers Support from a Large Community and ChatGPT

You’ll be well-supported in any Python project you undertake, including Google search analysis.

Because Python's popularity has led to a large, active community of developers. And a wealth of tutorials, forums, guides, and third-party tools.

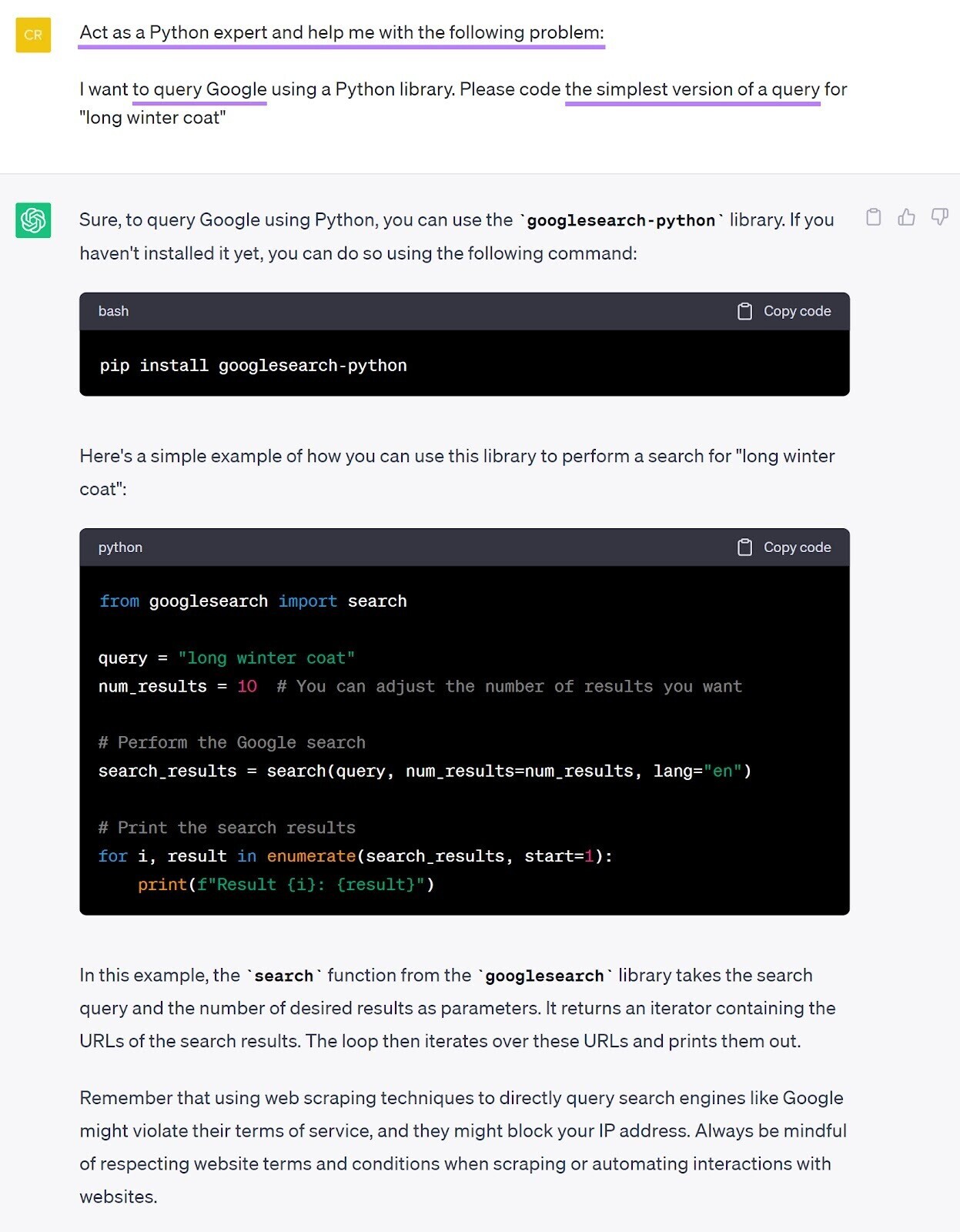

And when you can’t find pre-existing Python code for your search analysis project, chances are that ChatGPT will be able to help.

When using ChatGPT, we recommend prompting it to:

- Act as a Python expert and

- Help with a problem

Then, state:

- The goal (“to query Google”) and

- The desired output (“the simplest version of a query”)

Setting Up Your Python Environment

You'll need to set up your Python environment before you can scrape and analyze Google search results using Python.

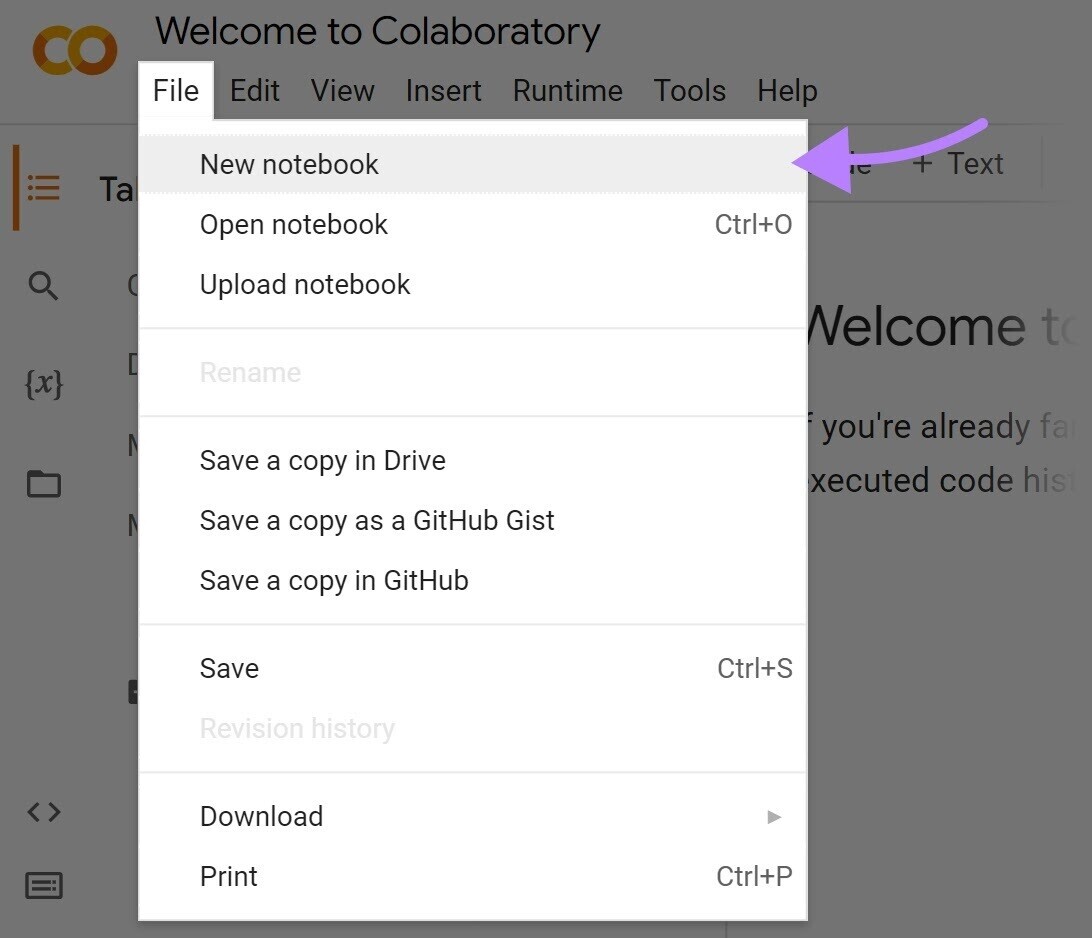

There are many ways to get Python up and running. But one of the quickest ways to start analyzing Google search engine results pages (SERPs) with Python is Google’s own notebook environment: Google Colab.

Here’s how easy it is to get started with Google Colab:

1. Access Google Colab: Open your web browser and go to Google Colab. If you have a Google account, sign in. If not, create a new account.

2. Create a new notebook: In Google Colab, click on “File” > “New Notebook” to create a new Python notebook.

3. Check installation: To ensure that Python is working correctly, run a simple test by entering and executing the code below. And Google Colab will show you the Python version that’s currently installed:

```python
# Display the version of Python running in this notebook
import sys

sys.version
```

Wasn’t that simple?

There’s just one more step before you can perform an actual Google search.

Importing the Python Googlesearch Module

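Use the googlesearch module to scrape and analyze Google search results with Python. It provides a convenient way to perform Google searches programmatically.

Note: The search parameters used in this article (tld, lang, stop, and pause) match the googlesearch module that ships with the google package on PyPI. The similarly named googlesearch-python package uses different parameter names, so the examples below won’t run with it unchanged.

Just run the following code in a code cell to access this Python Google search module:

```python
# Import the search function from the googlesearch module
from googlesearch import search

print("Googlesearch package installed successfully!")
```

One benefit of using Google Colab is that this module has typically come pre-installed. If the import fails with a ModuleNotFoundError, run !pip install google in a code cell and try again.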

It’s ready to go once you see the message "Googlesearch package installed successfully!"
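If you’d rather have the cell tell you whether the module is available than stop with an error, you can check for it first. Here is a minimal sketch using the standard library’s importlib.util.find_spec. The helper name module_available is hypothetical, and the install hint assumes the module comes from the google package on PyPI (the one whose search() accepts tld, stop, and pause):

```python
import importlib.util


def module_available(name: str) -> bool:
    """Return True if a top-level module with this name can be imported."""
    # find_spec returns None when no installed module matches the name
    return importlib.util.find_spec(name) is not None


if module_available("googlesearch"):
    print("googlesearch module found")
else:
    # Assumption: the module is provided by the google package on PyPI
    print("googlesearch module missing: try !pip install google")
```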

Now, we'll explore how to use the module to perform Google searches. And extract valuable information from the search results.

How to Perform a Google Search with Python

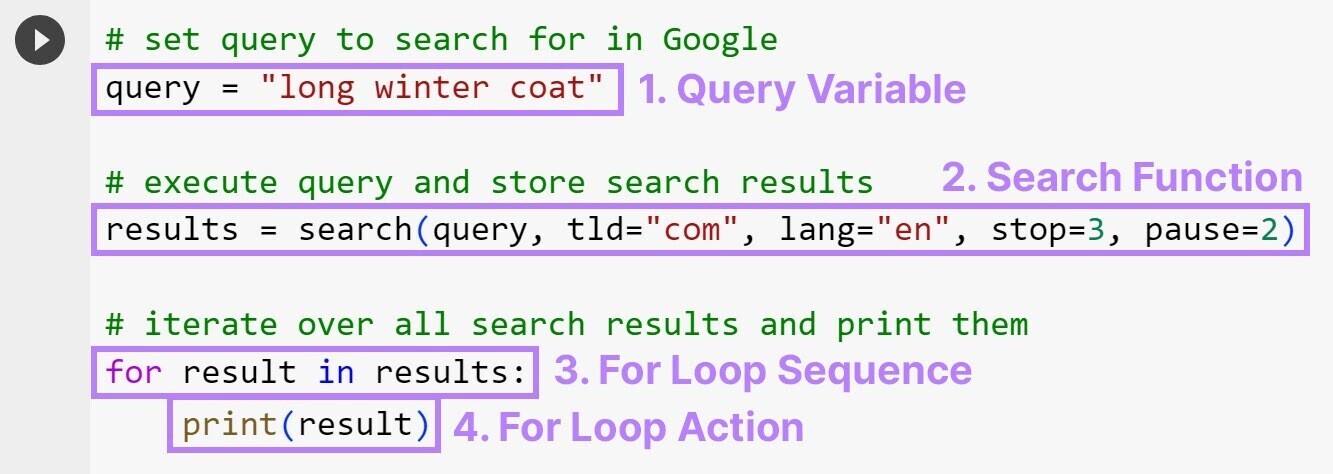

To perform a Google search, write and run a few lines of code that specify your search query, how many results to display, and a few other details (more on this in the next section).

# set query to search for in Google

query = "long winter coat"

# execute query and store search results

results = search(query, tld="com", lang="en", stop=3, pause=2)

# iterate over all search results and print them

for result in results:

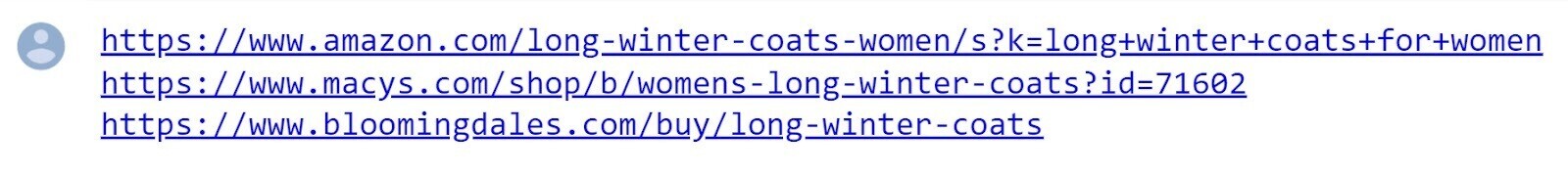

print(result)You’ll then see the top three Google search results for the query "long winter coat."

Here’s what it looks like in the notebook:

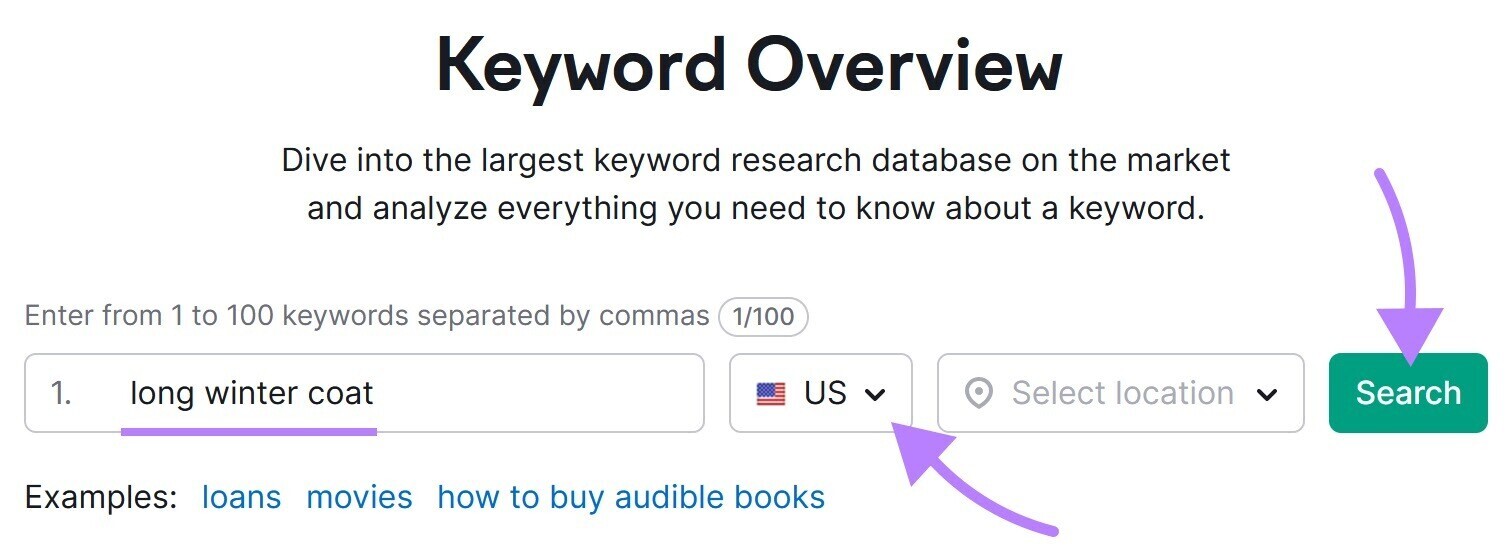
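The loop prints bare URLs. If you’d like each one labeled with its position, enumerate() can number them as the loop runs. In this sketch, format_ranked is a hypothetical helper and the URLs are placeholders standing in for what search() returns, so it runs without contacting Google:

```python
def format_ranked(urls):
    """Return lines like '1. https://...' numbering each URL by its position."""
    # start=1 makes the numbering begin at 1 instead of Python's default 0
    return [f"{position}. {url}" for position, url in enumerate(urls, start=1)]


# Hypothetical placeholder URLs (not real search results)
example_urls = [
    "https://www.example.com/womens-coats",
    "https://www.example.org/long-winter-coats",
    "https://www.example.net/best-winter-coats",
]

for line in format_ranked(example_urls):
    print(line)
```

In the notebook, you would pass the search() call itself, for example format_ranked(search(query, tld="com", lang="en", stop=3, pause=2)). The numbers then follow the order in which search() returns the URLs.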

To verify that the results are accurate, you can use Keyword Overview.

Open the tool, enter "long winter coat" into the search box, and make sure the location is set to "U.S." And click "Search."

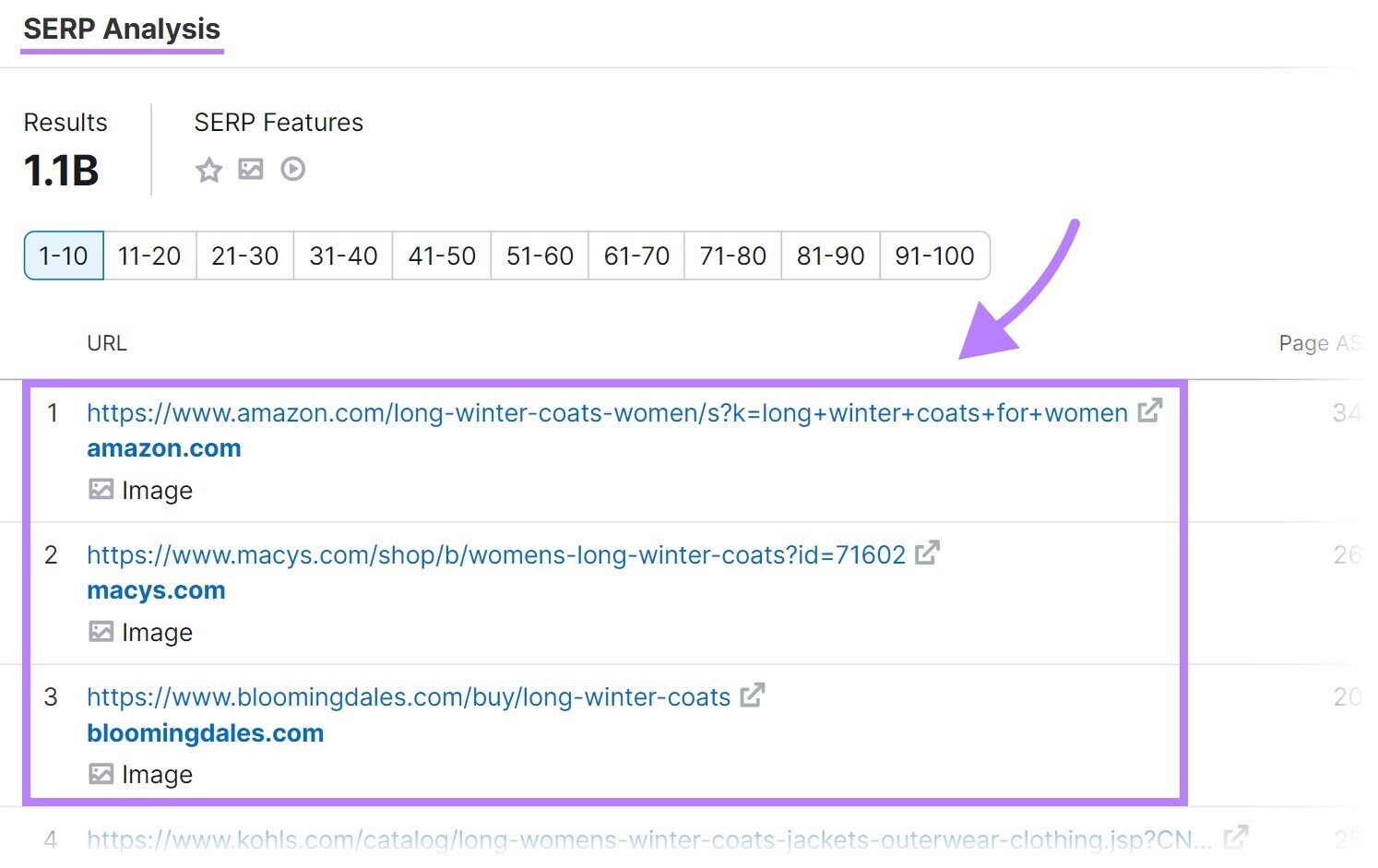

Scroll down to the "SERP Analysis" table. And you should see the same (or very similar) URLs in the top three spots.

Keyword Overview also shows you a lot of helpful data that our Python script can’t access. Like monthly search volume (globally and in your chosen location), Keyword Difficulty (a score that indicates how difficult it is to rank in the top 10 results for a given term), search intent (the reason behind a user’s query), and much more.

Understanding Your Google Search with Python

Let's go through the code we just ran. So you can understand what each part means and how to make adjustments for your needs.

We'll go over each part highlighted in the image below:

- Query variable: The query variable stores the search query you want to execute on Google

- Search function: The search function provides various parameters that allow you to customize your search and retrieve specific results:

  - query: Tells the search function what phrase or word to search for. This is the only required parameter, so the search function will raise an error without it. All the following parameters are optional.

  - tld (short for top-level domain): Lets you determine which version of Google’s website you want to execute a search in. Setting this to "com" will search google.com; setting it to "fr" will search google.fr.

  - lang: Allows you to specify the language of the search results. And accepts a two-letter language code (e.g., "en" for English).

  - stop: Sets how many search results the search function returns. We’ve limited our search to the top three results, but you might want to set the value to “10.”

  - pause: Specifies the time delay (in seconds) between consecutive requests sent to Google. Setting an appropriate pause value can help avoid being blocked by Google for sending too many requests too quickly. Our examples use 2 to keep them quick, but we recommend at least 10 when you run many queries.

- For loop sequence: This line of code tells the loop to iterate through each search result in the "results" collection one by one, assigning each search result URL to the variable "result"

- For loop action: This code block follows the for loop sequence (it’s indented) and contains the actions to be performed on each search result URL. In this case, they’re printed into the output area in Google Colab.
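One detail about the loop worth knowing: search() returns a generator, which fetches results lazily and can be looped over only once. A second loop over the same results variable prints nothing. The sketch below shows this offline with a hypothetical stand-in generator, fake_search, and shows that storing the URLs in a list makes them reusable:

```python
def fake_search(query, stop=3):
    """Hypothetical stand-in for search(): yields placeholder URLs one at a time."""
    slug = query.replace(" ", "-")
    for position in range(1, stop + 1):
        yield f"https://www.example.com/{slug}/{position}"


fake_results = fake_search("long winter coat")
first_pass = list(fake_results)   # the first loop consumes the generator
second_pass = list(fake_results)  # nothing is left to yield
print(len(first_pass), len(second_pass))  # 3 0

# Store the URLs in a list once if you need to loop over them more than once
saved_urls = list(fake_search("long winter coat"))
count_first = sum(1 for _ in saved_urls)
count_second = sum(1 for _ in saved_urls)
print(count_first, count_second)  # 3 3
```

In the notebook, the equivalent is wrapping the call: results = list(search(query, tld="com", lang="en", stop=3, pause=2)).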

How to Analyze Google Search Results with Python

Once you’ve scraped Google search results using Python, you can use Python to analyze the data to extract valuable insights.

For example, you can determine which keywords’ SERPs are similar enough to be targeted with a single page. Meaning Python is doing the heavy lifting involved in keyword clustering.

Let’s stick to our query "long winter coat" as a starting point. Plugging that into Keyword Overview reveals over 3,000 keyword variations.

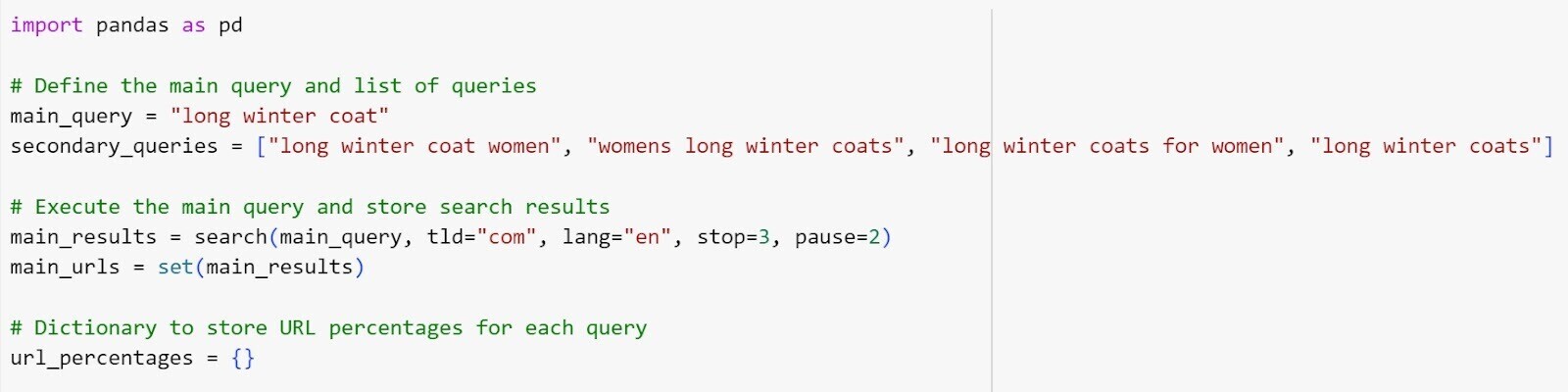

For the sake of simplicity, we’ll stick to the five keywords visible above. And have Python analyze and cluster them by creating and executing this code in a new code cell in our Google Colab notebook:

import pandas as pd

# Define the main query and list of queries

main_query = "long winter coat"

secondary_queries = ["long winter coat women", "womens long winter coats", "long winter coats for women", "long winter coats"]

# Execute the main query and store search results

main_results = search(main_query, tld="com", lang="en", stop=3, pause=2)

main_urls = set(main_results)

# Dictionary to store URL percentages for each query

url_percentages = {}

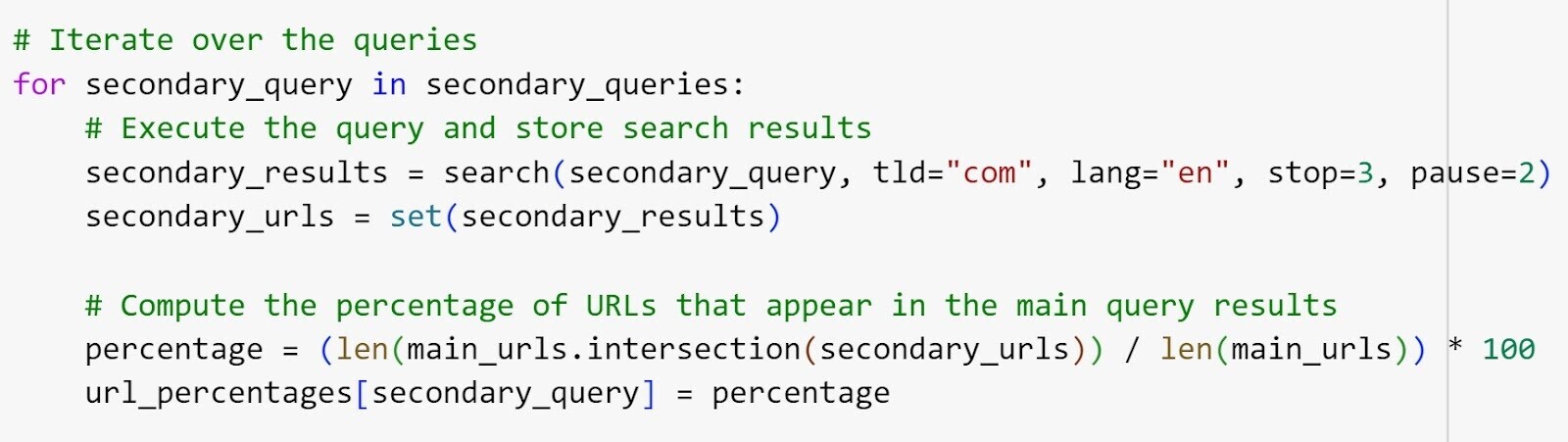

# Iterate over the queries

for secondary_query in secondary_queries:

# Execute the query and store search results

secondary_results = search(secondary_query, tld="com", lang="en", stop=3, pause=2)

secondary_urls = set(secondary_results)

# Compute the percentage of URLs that appear in the main query results

percentage = (len(main_urls.intersection(secondary_urls)) / len(main_urls)) * 100

url_percentages[secondary_query] = percentage

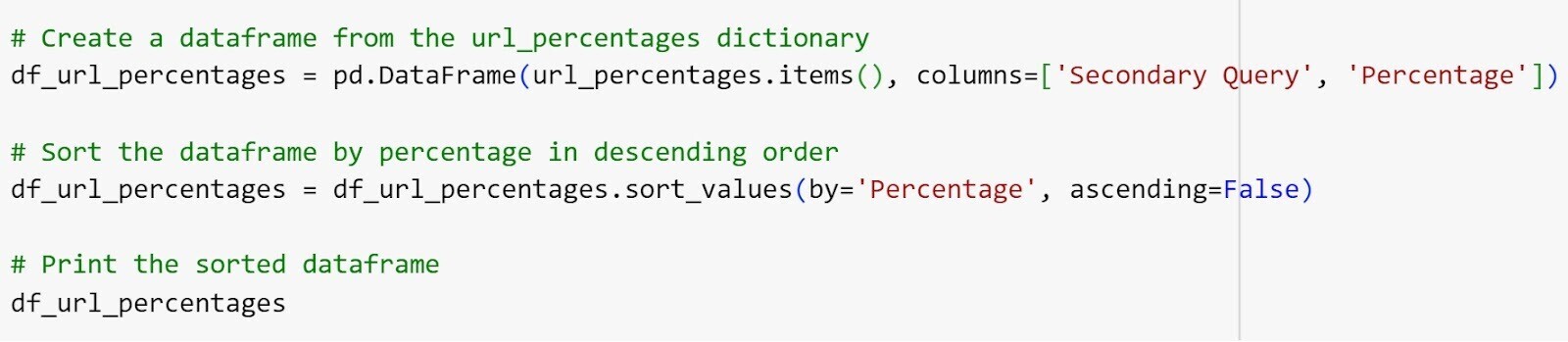

# Create a dataframe from the url_percentages dictionary

df_url_percentages = pd.DataFrame(url_percentages.items(), columns=['Secondary Query', 'Percentage'])

# Sort the dataframe by percentage in descending order

df_url_percentages = df_url_percentages.sort_values(by='Percentage', ascending=False)

# Print the sorted dataframe

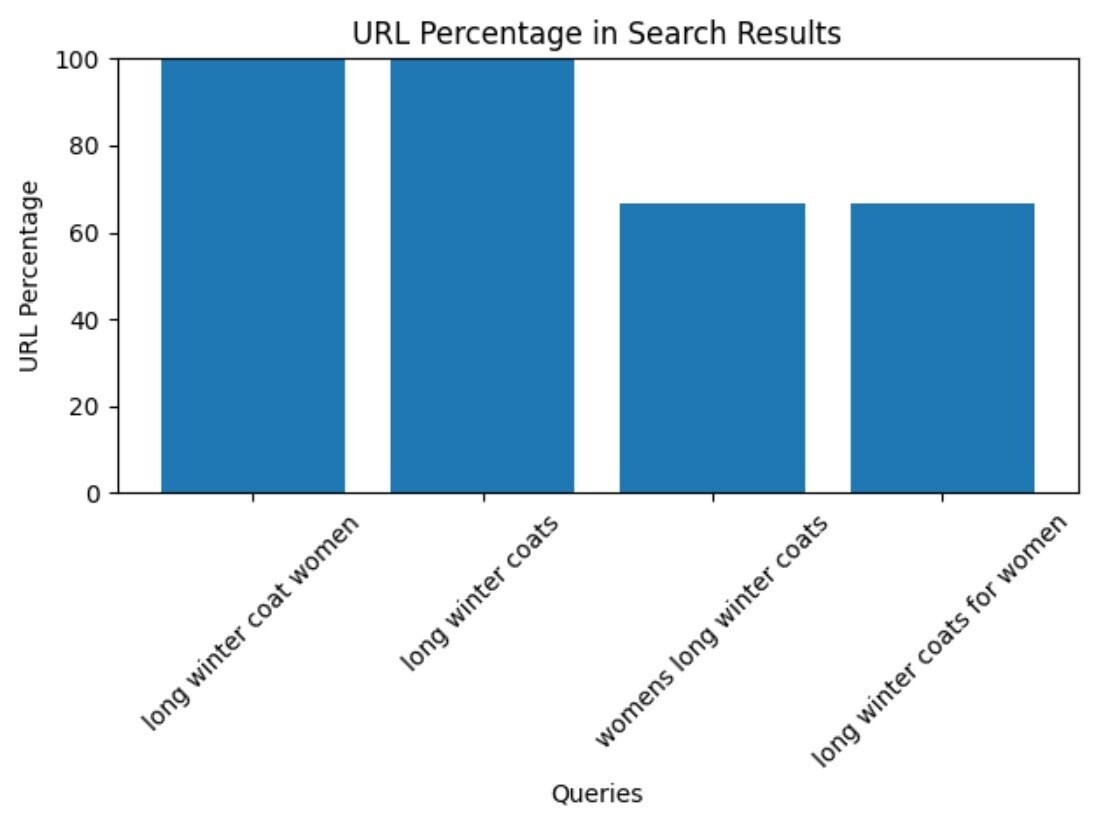

df_url_percentagesWith 14 lines of code and a dozen or so seconds of waiting for it to execute, we can now see that the top three results are the same for these queries:

- “long winter coat”

- “long winter coat women”

- “womens long winter coats”

- “long winter coats for women”

- “long winter coats”

So, those queries can be targeted with the same page.

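Also, because the top results for “long winter coat” and “long winter coats” are identical to those for the women’s queries, Google appears to treat them as searches for women’s coats. So you shouldn’t try to rank for them with a page offering coats for men.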
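The comparison above measures every query against a single main query. When you paste in many more queries, you may want Python to sort them into groups instead. One simple approach, sketched here as an assumption rather than the method used in this article, is greedy clustering: each query joins the first group whose seed query shares at least a threshold percentage of top URLs with it, or else starts a new group. The cluster_queries helper, the URL sets, and the 60% threshold are all hypothetical:

```python
def cluster_queries(query_urls, threshold=60.0):
    """Greedily group queries whose top URLs overlap with a cluster's seed query."""
    clusters = []  # each cluster is a list of queries; its first entry is the seed
    for query, urls in query_urls.items():
        for cluster in clusters:
            seed_urls = query_urls[cluster[0]]
            # Share of the seed's URLs that also rank for this query, in percent
            if seed_urls and len(seed_urls & urls) / len(seed_urls) * 100 >= threshold:
                cluster.append(query)
                break
        else:
            # No existing cluster was similar enough, so this query seeds a new one
            clusters.append([query])
    return clusters


# Hypothetical top-three URL sets; "u1" etc. are placeholders, not real results
example_query_urls = {
    "query A": {"u1", "u2", "u3"},
    "query B": {"u1", "u2", "u4"},
    "query C": {"u5", "u6", "u7"},
    "query D": {"u5", "u6", "u8"},
}

for cluster in cluster_queries(example_query_urls):
    print(cluster)
# ['query A', 'query B']
# ['query C', 'query D']
```

Because each query is compared only with a group’s first query, the grouping can change if you reorder the input. To use it on real data, you would build the input dictionary by storing set(search(...)) for each query.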

Understanding Your Google Search Analysis with Python

Once again, let’s go through the code we’ve just executed. It’s a little more complex this time, but the insights we’ve just generated are much more useful, too.

1. import pandas as pd: Imports the Pandas library and makes it callable by the abbreviation "pd." We’ll use the Pandas library to create a "DataFrame," which is essentially a table inside the Python output area.

2. main_query = "long winter coat": Defines the main query to search for on Google

3. secondary_queries = ["long winter coat women", "womens long winter coats", "long winter coats for women", "long winter coats"]: Creates a list of queries to be executed on Google. You can paste many more queries and have Python cluster hundreds of them for you.

4. main_results = search(main_query, tld="com", lang="en", stop=3, pause=2): Executes the main query and stores the search results in main_results. We limited the number of results to three (stop=3), because the top three URLs in Google’s search results often do the best job in terms of satisfying users’ search intent.

5. main_urls = set(main_results): Converts the search results of the main query into a set of URLs and stores them in main_urls

6. url_percentages = {}: Initializes an empty dictionary (a collection of key-value pairs) to store the URL percentages for each query

7. for secondary_query in secondary_queries: Starts a loop that iterates over each secondary query in the secondary queries list

8. secondary_results = search(secondary_query, tld="com", lang="en", stop=3, pause=2): Executes the current secondary query and stores the search results in secondary_results. We limited the number of results to three (stop=3) for the same reason we mentioned earlier.

9. secondary_urls = set(secondary_results): Converts the search results of the current secondary query into a set of URLs and stores them in secondary_urls

10. percentage = (len(main_urls.intersection(secondary_urls)) / len(main_urls)) * 100: Calculates the percentage of the main query’s URLs that also appear in the current secondary query’s results. The result is stored in the variable percentage.

11. url_percentages[secondary_query] = percentage: Stores the computed URL percentage in the url_percentages dictionary, with the current secondary query as the key

12. df_url_percentages = pd.DataFrame(url_percentages.items(), columns=['Secondary Query', 'Percentage']): Creates a Pandas DataFrame that holds the secondary queries in the first column and their overlap with the main query in the second column. The columns argument (which holds the two column labels) specifies the column names for the DataFrame.

13. df_url_percentages = df_url_percentages.sort_values(by='Percentage', ascending=False): Sorts the DataFrame df_url_percentages based on the values in the Percentage column. By setting ascending=False, the DataFrame is sorted from the highest to the lowest values.

14. df_url_percentages: Shows the sorted DataFrame in the Google Colab output area. In a plain Python script, you would have to use the print() function to display the DataFrame. But not in Google Colab. Plus, the table is interactive there.

In short, this code performs a series of Google searches and shows the overlap between the top three search results for each secondary query and the main query.

The larger the overlap is, the more likely you can rank for a primary and secondary query with the same page.
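To see the overlap calculation from step 10 in isolation, here is a worked example on hypothetical URL sets (placeholders, not real search results). This sketch also adds one safeguard the original code doesn’t have: if Google returns no results for the main query, len(main_urls) is 0 and the division would raise a ZeroDivisionError, so the hypothetical overlap_percentage helper returns 0 instead:

```python
def overlap_percentage(main_urls, secondary_urls):
    """Share of the main query's URLs that also appear for the secondary query, in %."""
    main_urls, secondary_urls = set(main_urls), set(secondary_urls)
    if not main_urls:
        # No main results to compare against: report 0 instead of dividing by zero
        return 0.0
    shared = main_urls & secondary_urls  # same as main_urls.intersection(secondary_urls)
    return len(shared) / len(main_urls) * 100


# Hypothetical top-three results: two of the three URLs match
example_main = {"https://a.example/coats", "https://b.example/coats", "https://c.example/coats"}
example_secondary = {"https://a.example/coats", "https://b.example/coats", "https://d.example/coats"}

print(round(overlap_percentage(example_main, example_secondary), 1))  # 66.7
```

The denominator is always the main query’s URL count, so swapping the main and secondary query can give a different percentage when their result counts differ.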

Visualizing Your Google Search Analysis Results

Visualizing the results of a Google search analysis can provide a clear and intuitive representation of the data. And enable you to easily interpret and communicate the findings.

Visualization comes in handy when we apply our code for keyword clustering to no more than 20 or 30 queries.

Note: For larger query samples, the query labels in the bar chart we’re about to create will bleed into each other. Which makes the DataFrame created above more useful for clustering.

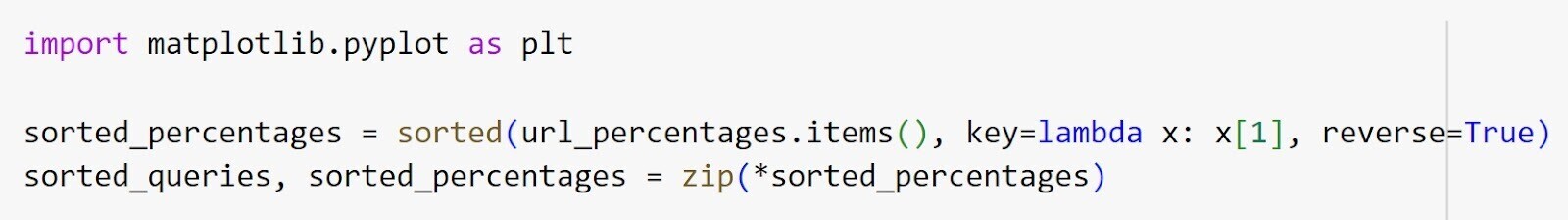
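The chart code that follows prepares its data with two standard Python steps: sorting the dictionary’s items by value, then unpacking the sorted pairs with zip(*...). You can try both on their own first. This sketch uses a hypothetical example_percentages dictionary (a separate name, so running it won’t overwrite your real url_percentages), and it shows that zip() hands back tuples, not lists:

```python
# Hypothetical overlap percentages shaped like url_percentages; a separate name
# is used so running this cell does not overwrite your real results
example_percentages = {"query A": 66.7, "query B": 100.0, "query C": 33.3}

# Sort the (query, percentage) pairs by percentage, highest first
sorted_pairs = sorted(example_percentages.items(), key=lambda item: item[1], reverse=True)
print(sorted_pairs)  # [('query B', 100.0), ('query A', 66.7), ('query C', 33.3)]

# Unpack the pairs into one tuple of queries and one tuple of percentages
example_queries, example_values = zip(*sorted_pairs)
print(example_queries)  # ('query B', 'query A', 'query C')
print(example_values)   # (100.0, 66.7, 33.3)
```

Keeping the sorted pairs under their own name (sorted_pairs) also avoids reusing one variable for two different things, as the chart code does with sorted_percentages.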

You can visualize your URL percentages as a bar chart using Python and Matplotlib with this code:

import matplotlib.pyplot as plt

sorted_percentages = sorted(url_percentages.items(), key=lambda x: x[1], reverse=True)

sorted_queries, sorted_percentages = zip(*sorted_percentages)

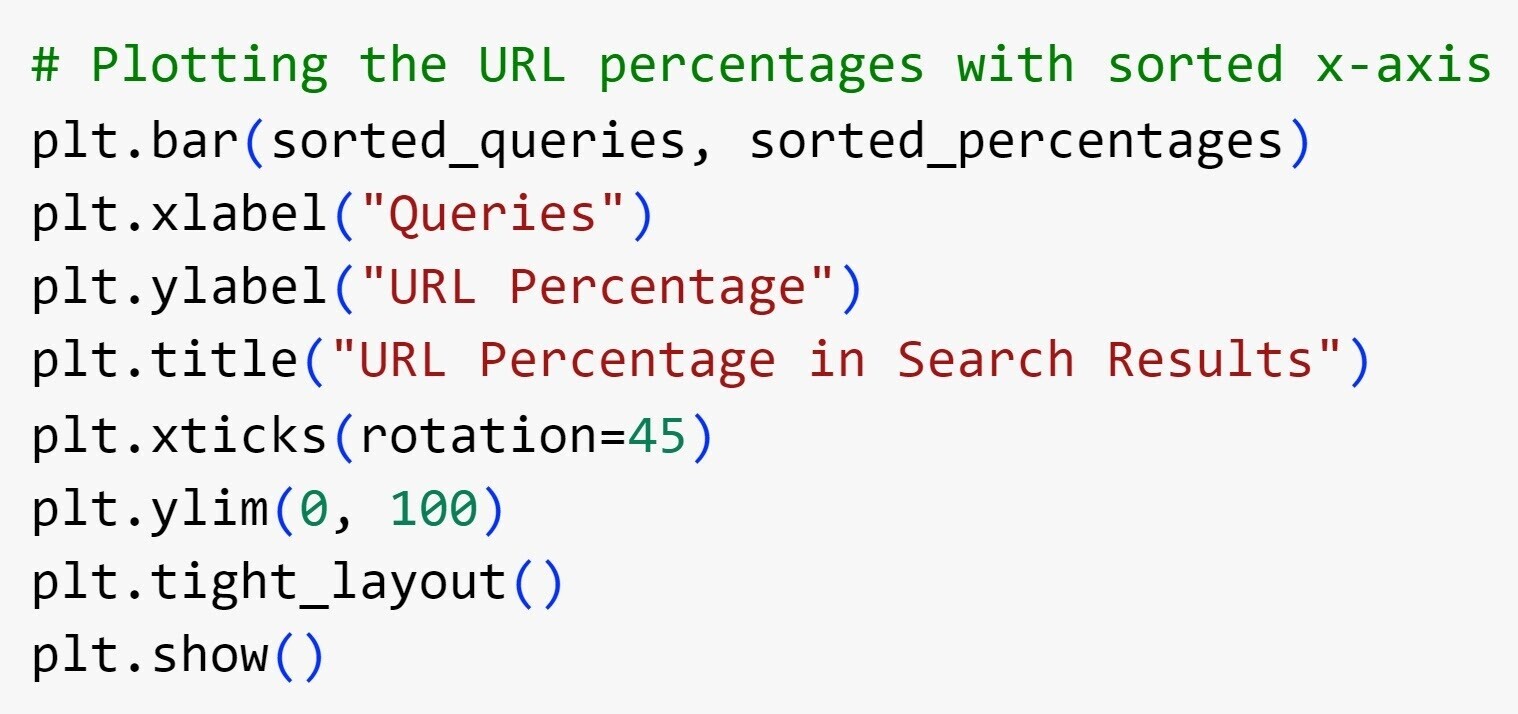

# Plotting the URL percentages with sorted x-axis

plt.bar(sorted_queries, sorted_percentages)

plt.xlabel("Queries")

plt.ylabel("URL Percentage")

plt.title("URL Percentage in Search Results")

plt.xticks(rotation=45)

plt.ylim(0, 100)

plt.tight_layout()

plt.show()We’ll quickly run through the code again:

1. sorted_percentages = sorted(url_percentages.items(), key=lambda x: x[1], reverse=True): This sorts the items of the URL percentages dictionary (url_percentages) by value in descending order using the sorted() function. The result is a list of tuples (query-percentage pairs) ordered by URL percentage.

2. sorted_queries, sorted_percentages = zip(*sorted_percentages): This unpacks the sorted list of tuples into two separate tuples (sorted_queries and sorted_percentages) using the zip() function and the * operator. The * operator in Python is a tool that lets you break down collections into their individual items.

3. plt.bar(sorted_queries, sorted_percentages): This creates a bar chart using plt.bar() from Matplotlib. The sorted queries are assigned to the x-axis (sorted_queries). And the corresponding URL percentages are assigned to the y-axis (sorted_percentages).

4. plt.xlabel("Queries"): This sets the label "Queries" for the x-axis

5. plt.ylabel("URL Percentage"): This sets the label "URL Percentage" for the y-axis

6. plt.title("URL Percentage in Search Results"): This sets the title of the chart to "URL Percentage in Search Results"

7. plt.xticks(rotation=45): This rotates the x-axis tick labels by 45 degrees using plt.xticks() for better readability

8. plt.ylim(0, 100): This sets the y-axis limits from 0 to 100 using plt.ylim() to ensure the chart displays the URL percentages appropriately

9. plt.tight_layout(): This function automatically adjusts the padding around the chart so elements like the title and the rotated x-axis labels fit inside the figure

10. plt.show(): This function is used to display the bar chart that visualizes your Google search results analysis

And here’s what the output looks like:

Master Google Search Using Python's Analytical Power

Python offers incredible analytical capabilities that can be harnessed to effectively scrape and analyze Google search results.

We’ve looked at how to cluster keywords, but there are virtually limitless applications for Google search analysis using Python.

But even just to extend the keyword clustering we’ve just conducted, you could:

- Scrape the SERPs for all queries you plan to target with one page and extract all the featured snippet text to optimize for them

- Scrape the questions and answers inside the People also ask box to adjust your content to show up in there

For those tasks, you’d need something more robust than the googlesearch module, which only returns the URLs of the results. There are some great SERP application programming interfaces (APIs) out there that provide virtually all the information you find on a Google SERP itself. But you might find it simpler to get started using Keyword Overview.

This tool shows you all the SERP features for your target keywords. So you can study them and start optimizing your content.