What Is a Robots.txt File?

A robots.txt file is a set of instructions used by websites to tell search engines which pages should and should not be crawled. Robots.txt files guide crawler access but should not be used to keep pages out of Google's index.

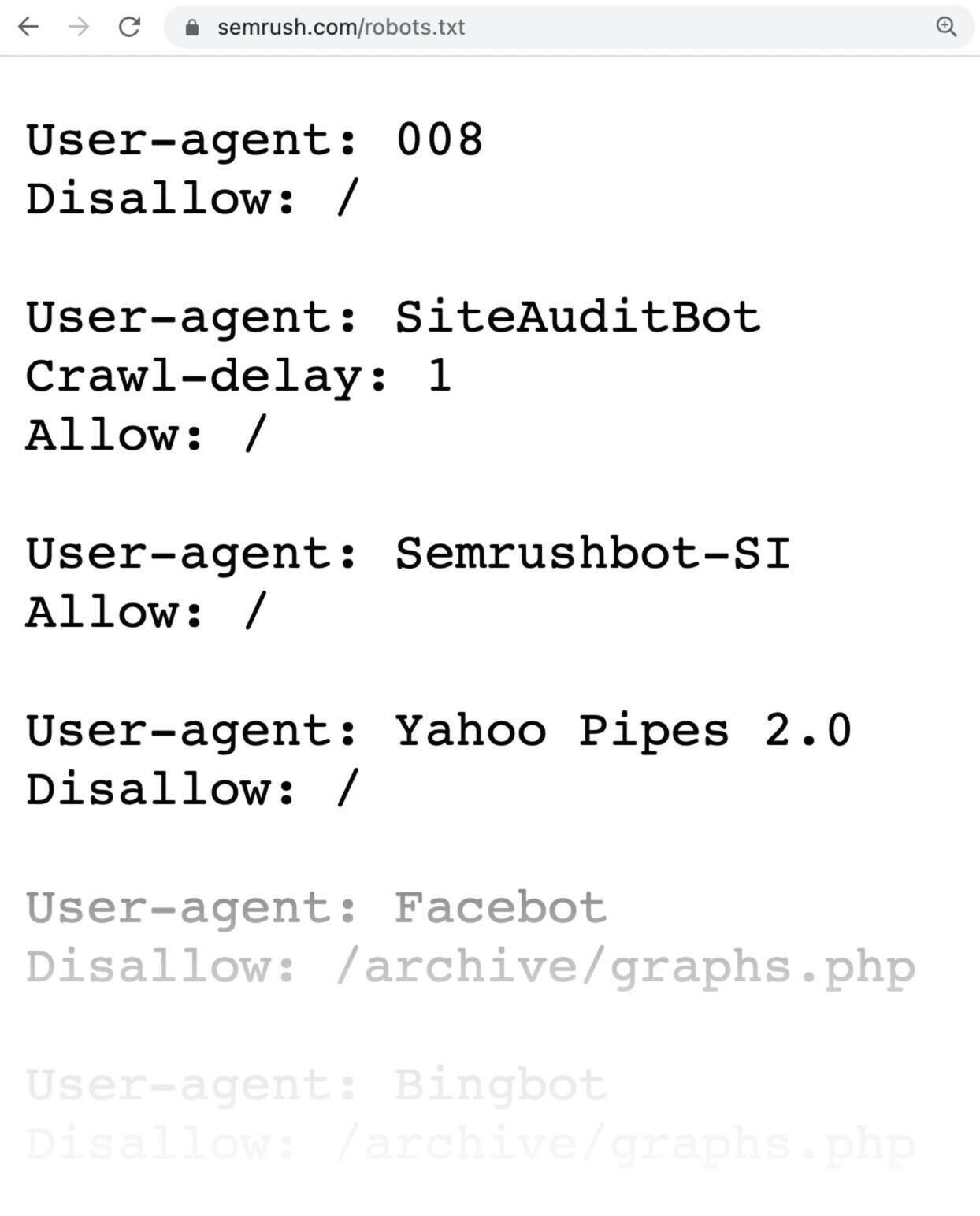

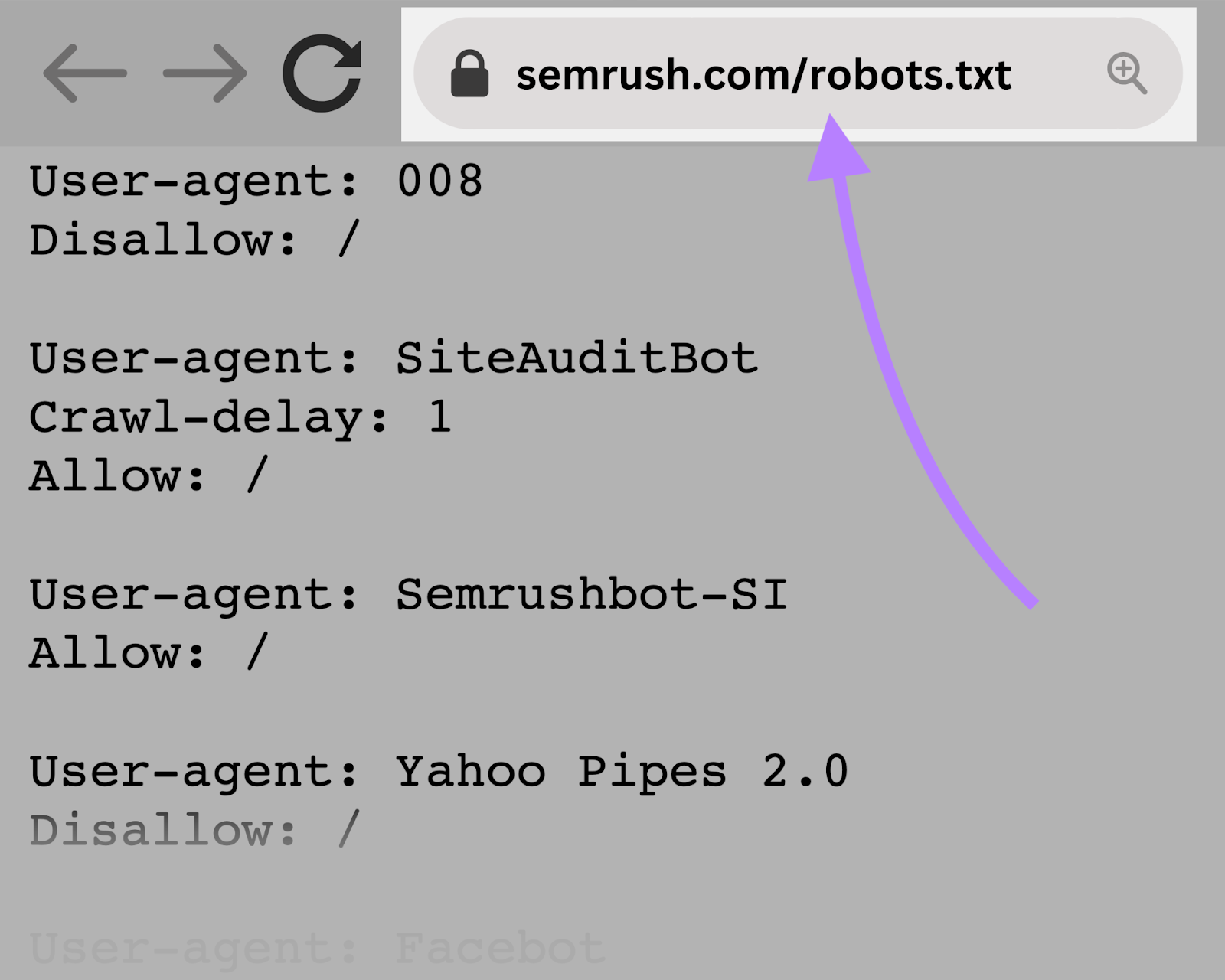

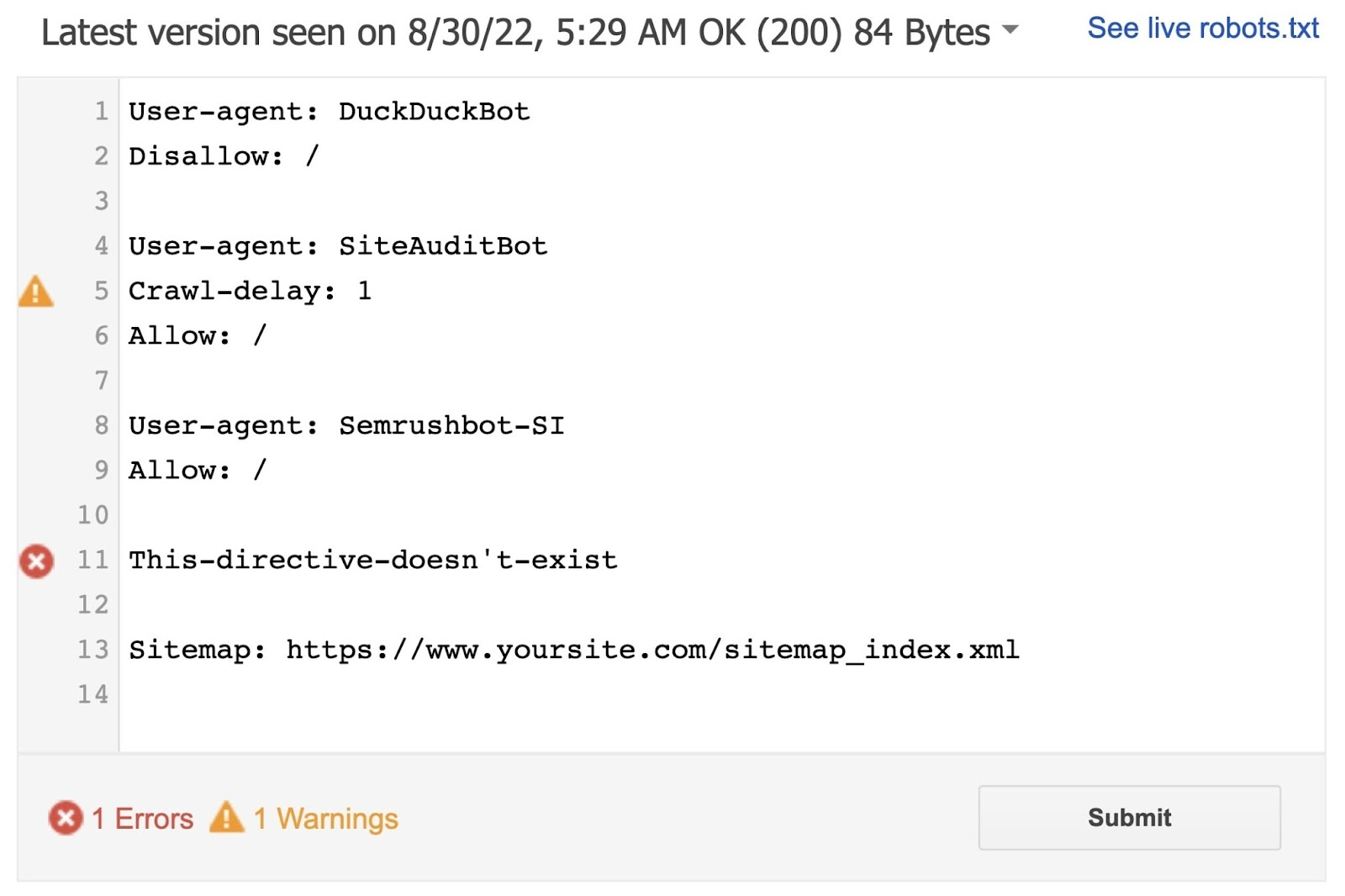

A robots.txt file looks like this:

Robots.txt files might seem complicated, but the syntax (the rules for how the file is written) is straightforward. We’ll get into the details later.

In this article we’ll cover:

- Why robots.txt files are important

- How robots.txt files work

- How to create a robots.txt file

- Robots.txt best practices

Why Is Robots.txt Important?

A robots.txt file helps manage web crawler activities so they don’t overwork your website or crawl pages not meant for public view.

Below are a few reasons to use a robots.txt file:

1. Optimize Crawl Budget

Crawl budget refers to the number of pages Google will crawl on your site within a given time frame.

The number can vary based on your site’s size, health, and number of backlinks.

If your website’s number of pages exceeds your site’s crawl budget, you could have unindexed pages on your site.

Unindexed pages won’t rank, and ultimately, you’ll waste time creating pages users won’t see.

Blocking unnecessary pages with robots.txt allows Googlebot (Google’s web crawler) to spend more crawl budget on pages that matter.

Note: Most website owners don’t need to worry too much about crawl budget, according to Google. This is primarily a concern for larger sites with thousands of URLs.

2. Block Duplicate and Non-Public Pages

Crawl bots don’t need to sift through every page on your site, because not every page is meant to be served in the search engine results pages (SERPs). Staging sites, internal search results pages, duplicate pages, and login pages are common examples.

Some content management systems handle these internal pages for you.

WordPress, for example, automatically disallows its admin area, /wp-admin/, for all crawlers.

Robots.txt allows you to block these pages from crawlers.

3. Hide Resources

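Sometimes you want to exclude resources such as PDFs, videos, and images from search results, either to keep them private or to have Google focus on more important content.

In either case, robots.txt keeps them from being crawled. For image, video, and audio files, Google says that also keeps them out of its search results. A blocked PDF, like a blocked web page, can still be indexed if other pages link to it.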

How Does a Robots.txt File Work?

Robots.txt files tell search engine bots which URLs they can crawl and, more importantly, which ones to ignore.

Search engines serve two main purposes:

- Crawling the web to discover content

- Indexing and delivering content to searchers looking for information

As they crawl webpages, search engine bots discover and follow links. This process takes them from site A to site B to site C across millions of links, pages, and websites.

But if a bot finds a robots.txt file, it will read it before doing anything else.

The syntax is straightforward.

Assign rules by identifying the user-agent (the search engine bot), followed by the directives (the rules).

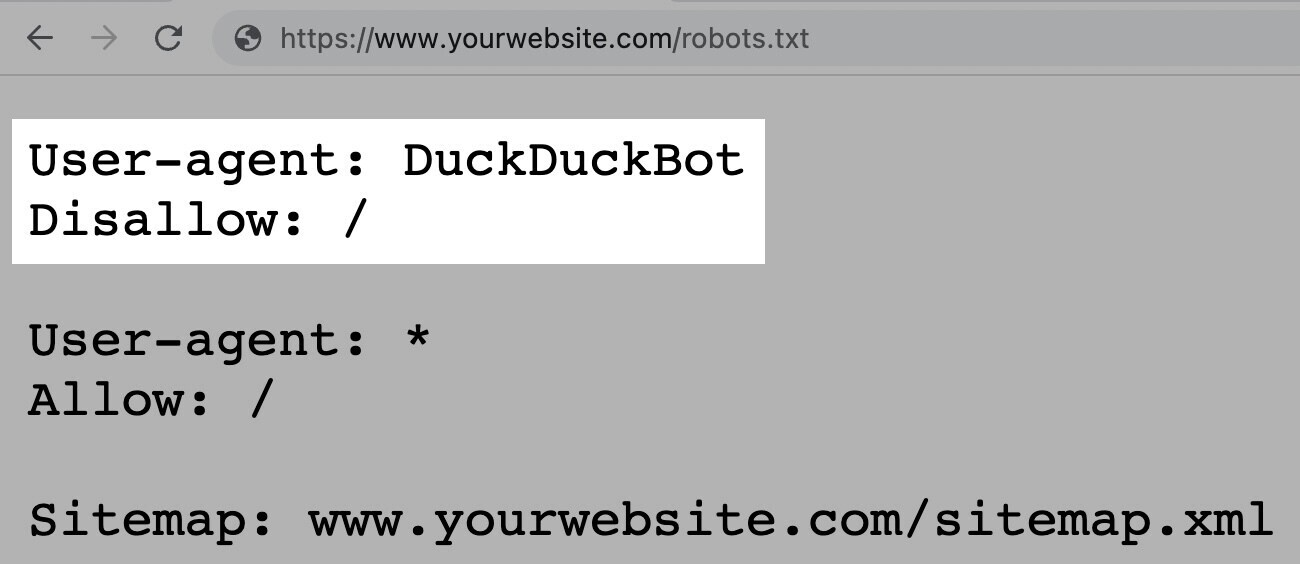

You can also use the asterisk (*) wildcard as the user-agent to apply directives to all bots.

For example, the following rules allow all bots except DuckDuckGo to crawl your site:

User-agent: DuckDuckBot
Disallow: /

User-agent: *
Allow: /

Note: Although a robots.txt file provides instructions, it can't enforce them. Think of it as a code of conduct. Good bots (like search engine bots) will follow the rules, but bad bots (like spam bots) will ignore them.

Semrush bots crawl the web to gather insights for our website optimization tools, such as Site Audit, Backlink Audit, On Page SEO Checker, and more.

Our bots respect the rules outlined in your robots.txt file.

If you block our bots from crawling your website, they won’t.

But it also means you can’t use some of our tools to their full potential.

For example, if you blocked our SiteAuditBot from crawling your website, you couldn’t audit your site with our Site Audit tool to analyze and fix technical issues.

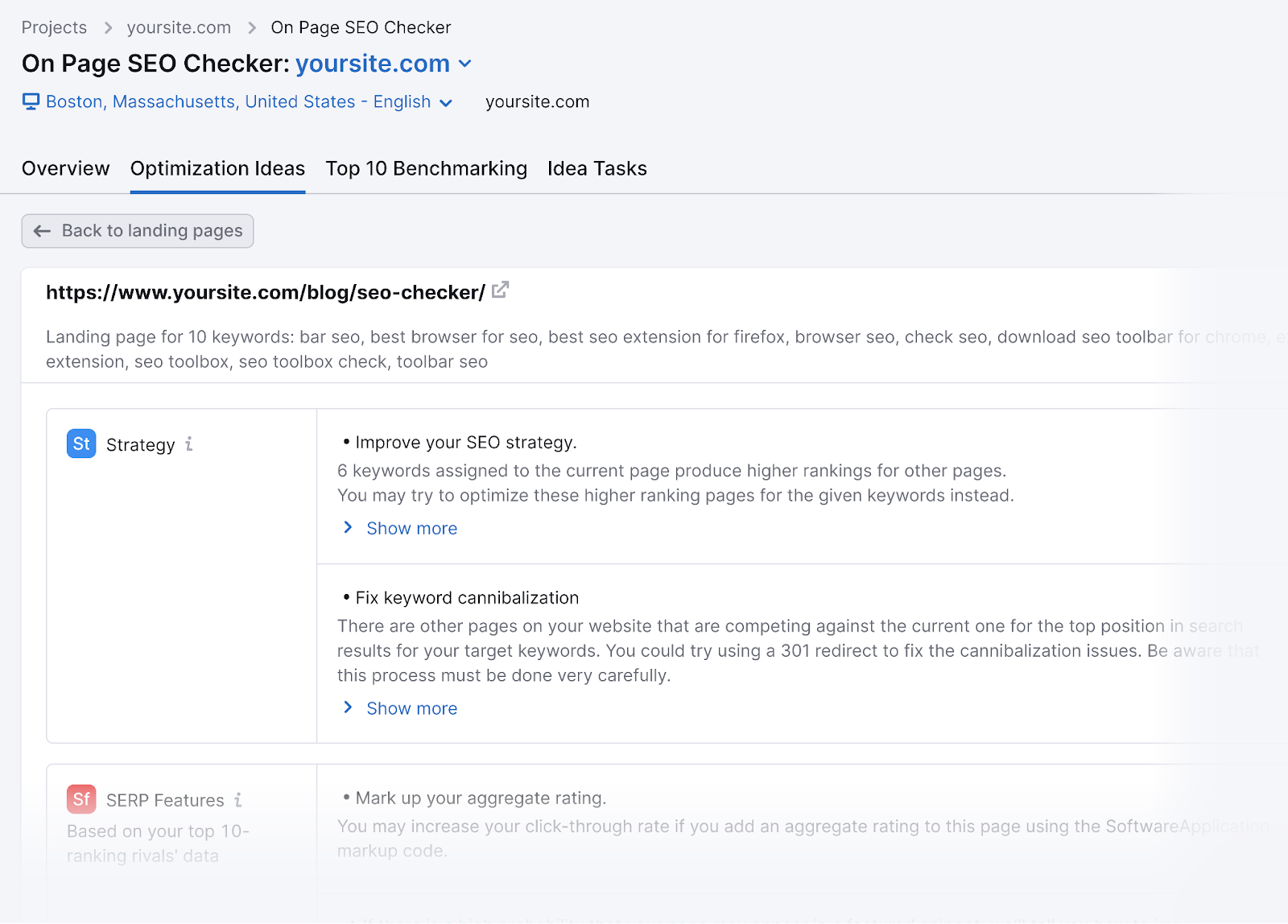

If you blocked our SemrushBot-SI from crawling your site, you couldn’t use the On Page SEO Checker tool effectively, and you’d lose out on optimization ideas to improve your webpage rankings.

How to Find a Robots.txt File

The robots.txt file is hosted on your server, just like any other file on your website.

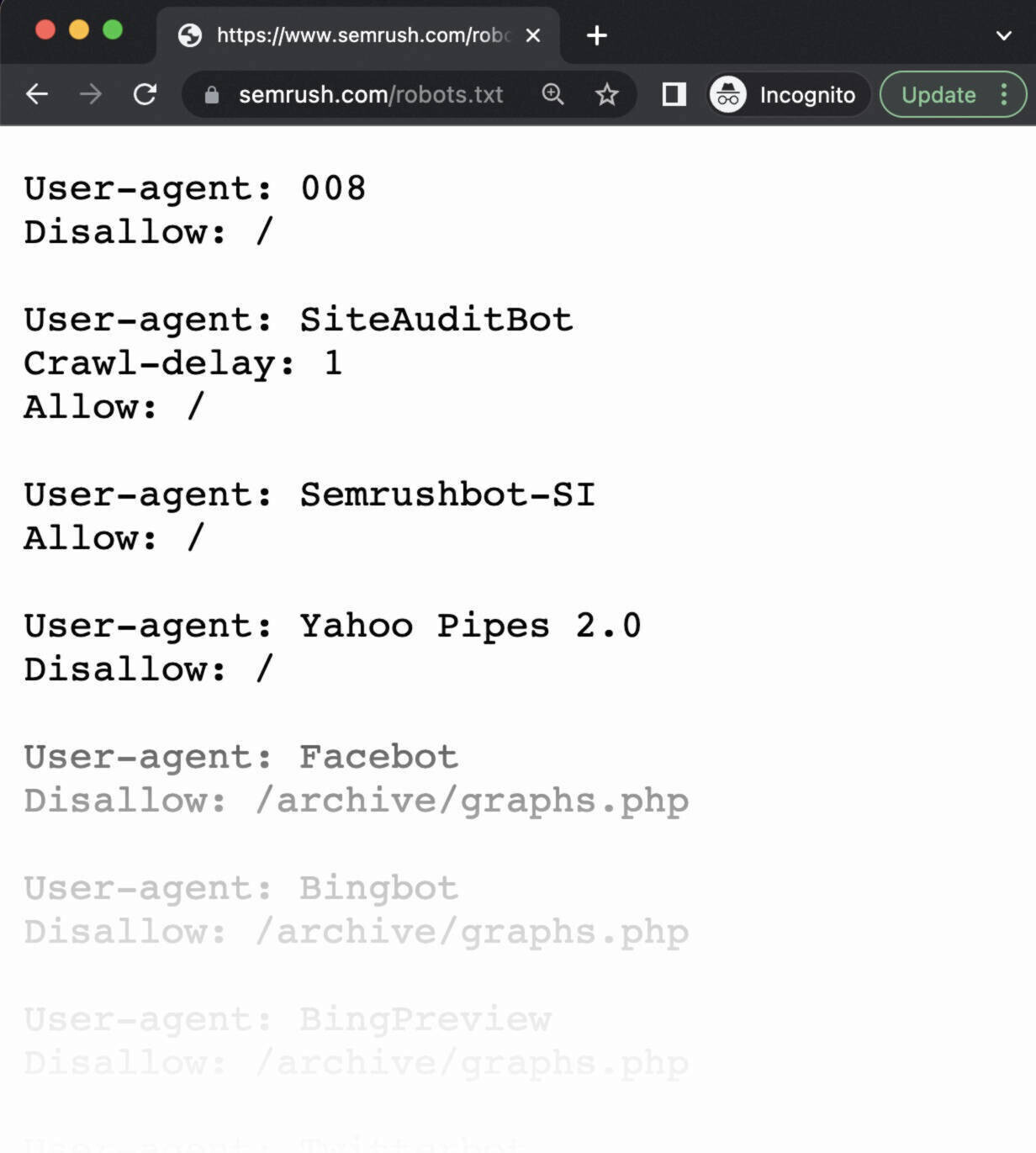

View the robots.txt file for any given website by typing the full URL for the homepage and adding “/robots.txt” at the end.

Like this: https://semrush.com/robots.txt.

Note: A robots.txt file should always live at the root domain level. For www.example.com, the robots.txt file lives at www.example.com/robots.txt. Place it anywhere else, and crawlers may assume you don’t have one.
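The location rule is mechanical enough to express in code. Here’s a minimal Python sketch, assuming absolute http(s) URLs; the function name robots_txt_url and the example.com addresses are illustrative only.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_txt_url(page_url: str) -> str:
    """Return the URL of the robots.txt file that covers page_url.

    The file sits at the root of the host, so keep the scheme and host
    (including any port) and swap the path for /robots.txt.
    """
    parts = urlsplit(page_url)
    if not parts.scheme or not parts.netloc:
        raise ValueError(f"expected an absolute URL, got {page_url!r}")
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


for url in (
    "https://www.example.com/blog/post?id=7",
    "https://blog.example.com/2024/hello",
    "http://www.example.com:8080/shop/",
):
    print(url, "->", robots_txt_url(url))
```

Note that blog.example.com gets its own file and that the port is kept: a robots.txt file only covers the exact protocol, host, and port it’s served from.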

Before learning how to create a robots.txt file, let’s look at its syntax.

Robots.txt Syntax

A robots.txt file is made up of:

- One or more blocks of “directives” (rules)

- Each with a specified “user-agent” (search engine bot)

- And an “allow” or “disallow” instruction

A simple file with two blocks can look like this:

User-agent: Googlebot
Disallow: /not-for-google

User-agent: DuckDuckBot
Disallow: /not-for-duckduckgo

Sitemap: https://www.yourwebsite.com/sitemap.xml

The User-Agent Directive

The first line of every directive block is the user-agent, which identifies the crawler.

If you want to tell Googlebot not to crawl your WordPress admin page, for example, your block would look like this:

User-agent: Googlebot
Disallow: /wp-admin/

Note: Most search engines have multiple crawlers. They use different crawlers for standard indexing, images, videos, etc.

When multiple groups are present, a crawler follows only the most specific group that matches its name and ignores the rest.

Let’s say you have three sets of directives: one for *, one for Googlebot, and one for Googlebot-Image.

If the Googlebot-News user agent crawls your site, it will follow the Googlebot directives.

On the other hand, the Googlebot-Image user agent will follow the more specific Googlebot-Image directives.
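Here’s a minimal Python sketch of that selection logic. It assumes each crawler knows an ordered list of names it answers to, most specific first (for example, Googlebot-News, then Googlebot). That list, the select_group name, and the rule paths are illustrative, not Google’s actual implementation.

```python
from __future__ import annotations


def select_group(groups: dict[str, list[str]], crawler_tokens: list[str]) -> list[str]:
    """Return the rules from the single group a crawler should follow.

    groups maps a lowercased user-agent name (or "*") to that group's rule lines.
    crawler_tokens lists the names the crawler answers to, most specific first.
    Only one group is chosen; rules from different groups are never mixed.
    """
    for token in crawler_tokens:
        rules = groups.get(token.lower())
        if rules is not None:
            return rules
    # No named group matched, so fall back to the wildcard group (if any).
    return groups.get("*", [])


# Hypothetical rules for the three groups described above.
groups = {
    "*": ["Disallow: /private/"],
    "googlebot": ["Disallow: /not-for-google"],
    "googlebot-image": ["Disallow: /photos/"],
}

print(select_group(groups, ["Googlebot-News", "Googlebot"]))   # ['Disallow: /not-for-google']
print(select_group(groups, ["Googlebot-Image", "Googlebot"]))  # ['Disallow: /photos/']
print(select_group(groups, ["Bingbot"]))                       # ['Disallow: /private/']
```

Notice that Googlebot-News receives only the Googlebot rules, not the wildcard rules as well.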

The Disallow Robots.txt Directive

The second line of a directive block is the “Disallow” line.

You can have multiple disallow directives that specify which parts of your site the crawler can’t access.

An empty “Disallow” line means you’re not disallowing anything—a crawler can access all sections of your site.

For example, if you wanted to allow all search engines to crawl your entire site, your block would look like this:

User-agent: *
Allow: /

If you wanted to block all search engines from crawling your site, your block would look like this:

User-agent: *
Disallow: /

Note: Directives such as “Allow” and “Disallow” aren’t case-sensitive. But the values within each directive are.

For example, /photo/ is not the same as /Photo/.

Still, you often find “Allow” and “Disallow” directives capitalized to make the file easier for humans to read.
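The rules in this section can be checked with a small Python sketch: field names are case-insensitive, paths are case-sensitive, an empty Disallow blocks nothing, and “Disallow: /” blocks everything. It’s a simplification that only does prefix matching on Disallow lines, and the function names are hypothetical.

```python
from __future__ import annotations


def parse_rule(line: str) -> tuple[str, str]:
    """Split "Field: value" into (field name in lowercase, value exactly as written)."""
    field, _, value = line.partition(":")
    return field.strip().lower(), value.strip()


def is_disallowed(path: str, rule_lines: list[str]) -> bool:
    """Return True if any Disallow value is a prefix of path.

    Field names match case-insensitively ("DISALLOW" works like "Disallow"),
    but paths are compared case-sensitively, and an empty Disallow blocks nothing.
    Allow lines and wildcards are ignored in this simplified sketch.
    """
    for line in rule_lines:
        field, value = parse_rule(line)
        if field == "disallow" and value and path.startswith(value):
            return True
    return False


print(is_disallowed("/photo/cat.jpg", ["DISALLOW: /photo/"]))  # True
print(is_disallowed("/Photo/cat.jpg", ["Disallow: /photo/"]))  # False
print(is_disallowed("/any/page", ["Disallow:"]))                # False
print(is_disallowed("/any/page", ["Disallow: /"]))              # True
```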

The Allow Directive

The “Allow” directive allows search engines to crawl a subdirectory or specific page, even in an otherwise disallowed directory.

For example, if you want to prevent Googlebot from accessing every post on your blog except for one, your directive might look like this:

User-agent: Googlebot
Disallow: /blog
Allow: /blog/example-post

Note: Not all search engines recognize this command. But Google and Bing do support this directive.
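When both an Allow and a Disallow rule match a URL, Google applies the rule with the longest (most specific) path. If two matching rules are equally long, it picks the less restrictive Allow. Here’s a simplified Python sketch of that precedence. It’s limited to plain prefixes (no * or $), and the function and variable names are hypothetical.

```python
from __future__ import annotations


def is_allowed(path: str, rules: list[tuple[str, str]]) -> bool:
    """Decide whether path may be crawled using longest-match precedence.

    rules holds (directive, path_prefix) pairs, e.g. ("disallow", "/blog").
    The matching rule with the longest path wins; on a tie, Allow wins.
    If no rule matches, the path is allowed. Wildcards are not handled here.
    """
    best_length = -1
    allowed = True
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue  # empty values and non-matching rules don't count
        is_allow = directive.lower() == "allow"
        if len(prefix) > best_length or (len(prefix) == best_length and is_allow):
            best_length = len(prefix)
            allowed = is_allow
    return allowed


# The Googlebot group from the example above.
blog_rules = [("disallow", "/blog"), ("allow", "/blog/example-post")]

print(is_allowed("/blog/example-post", blog_rules))  # True
print(is_allowed("/blog/another-post", blog_rules))  # False
print(is_allowed("/about", blog_rules))              # True
```

Rule order doesn’t matter in this sketch, which matches Google’s documented behavior. Python’s standard urllib.robotparser, by contrast, applies the first matching line in file order, so it would block /blog/example-post with this file.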

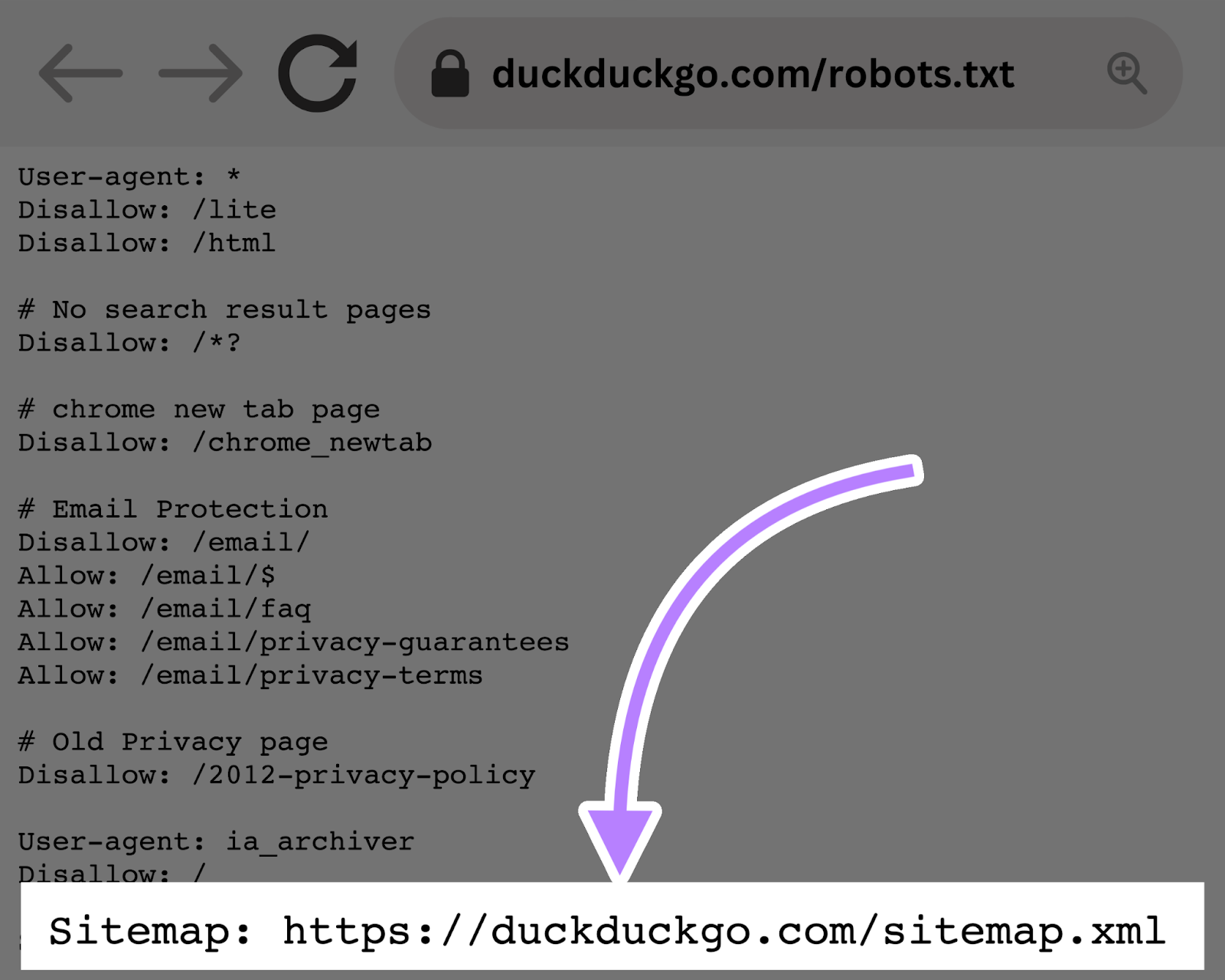

The Sitemap Directive

The Sitemap directive tells search engines—specifically Bing, Yandex, and Google—where to find your XML sitemap.

Sitemaps generally include the pages you want search engines to crawl and index.

This directive lives at the top or bottom of a robots.txt file and looks like this:

Sitemap: https://www.yourwebsite.com/sitemap.xml

Adding a Sitemap directive to your robots.txt file is a quick alternative, but you can (and should) also submit your XML sitemap to each search engine through its webmaster tools.

Search engines will crawl your site eventually, but submitting a sitemap can speed up the crawling process.
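The Sitemap line isn’t part of any user-agent group, so a tool reading robots.txt can pull sitemap URLs from anywhere in the file. Here’s a minimal Python sketch of that lookup; sitemap_urls and the second sitemap URL are hypothetical.

```python
from __future__ import annotations


def sitemap_urls(robots_txt: str) -> list[str]:
    """Collect every Sitemap URL listed in robots.txt text.

    The field name is matched case-insensitively, and each line is split on its
    FIRST colon only, because the URL itself contains one ("https:").
    Sitemap lines aren't tied to a user-agent group, so they can appear anywhere.
    """
    urls = []
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and surrounding spaces
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


example = """\
Sitemap: https://www.yourwebsite.com/sitemap.xml
User-agent: *
Disallow: /archive/

sitemap: https://www.yourwebsite.com/blog-sitemap.xml
"""

print(sitemap_urls(example))
# ['https://www.yourwebsite.com/sitemap.xml', 'https://www.yourwebsite.com/blog-sitemap.xml']
```

On Python 3.8 or later, urllib.robotparser.RobotFileParser also exposes these URLs through its site_maps() method after parsing.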

Crawl-Delay Directive

The crawl-delay directive asks crawlers to wait between requests so they don’t overtax your server (and slow down your website).

Google no longer supports the crawl-delay directive. If you want to set your crawl rate for Googlebot, you’ll have to do it in Search Console.

Bing and Yandex, on the other hand, do support the crawl-delay directive. Here’s how to use it.

Let’s say you want a crawler to wait 10 seconds after each crawl action. Set the delay to 10, like so:

User-agent: *
Crawl-delay: 10

Noindex Directive

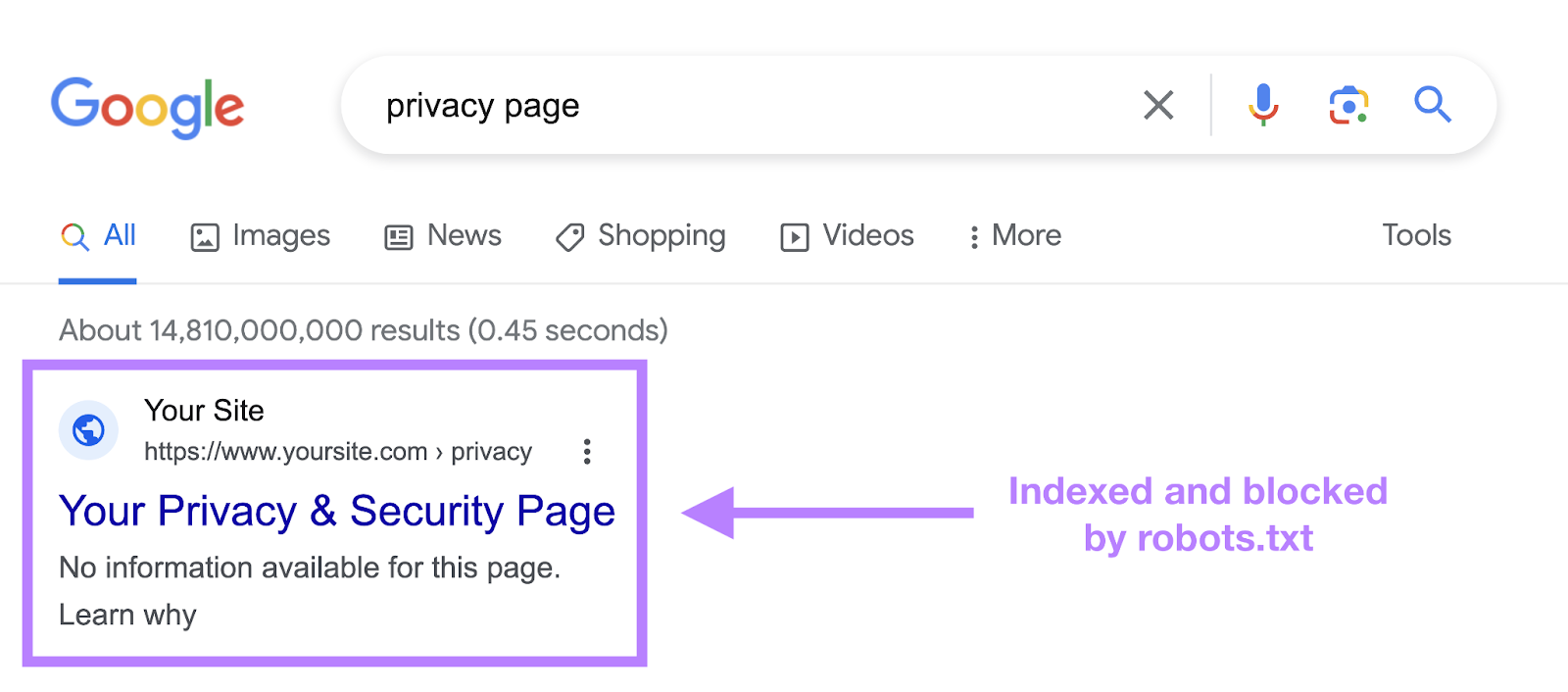

Some site owners used to add a “noindex” line to their robots.txt file to keep URLs out of search results. But robots.txt only tells a bot what it can or can’t crawl. It can’t tell a search engine which URLs not to index and show in search results.

A blocked page can still show up in search results (for example, when other pages link to it), but the bot won’t know what’s on it, so the listing will appear without a description.

Google never officially supported this directive, and as of September 1, 2019, it stopped honoring it altogether.

If you want to reliably exclude a page or file from appearing in search results, avoid this directive altogether and use a meta robots noindex tag.

How to Create a Robots.txt File

Use a robots.txt generator tool or create one yourself.

Here’s how:

1. Create a File and Name It Robots.txt

Start by creating a new plain-text (.txt) document in a text editor.

Note: Don’t use a word processor, as word processors often save files in a proprietary format that can add random characters.

Next, name the document robots.txt.

Now you’re ready to start typing directives.
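If you’d rather script this step, the same plain-text file can be written from Python. This is a minimal sketch that uses a temporary folder as a stand-in for your site’s web root and a placeholder rule. Google expects robots.txt to be UTF-8 encoded plain text, which is what this writes.

```python
import tempfile
from pathlib import Path

# A placeholder rule set; you'll write real directives in the next step.
RULES = "User-agent: *\nDisallow: /archive/\n"


def write_robots_txt(directory: Path, content: str) -> Path:
    """Save content as <directory>/robots.txt in plain UTF-8 with Unix (LF) line endings."""
    path = directory / "robots.txt"  # the name must be exactly robots.txt (lowercase)
    with open(path, "w", encoding="utf-8", newline="\n") as f:
        f.write(content)
    return path


# A temporary folder stands in for your site's web root in this sketch.
saved = write_robots_txt(Path(tempfile.mkdtemp()), RULES)
print(saved.name)  # robots.txt
print(saved.read_text(encoding="utf-8"))
```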

2. Add Directives to the Robots.txt File

A robots.txt file consists of one or more groups of directives, and each group consists of multiple lines of instructions.

Each group begins with a “user-agent” and has the following information:

- Who the group applies to (the user-agent)

- Which directories (pages) or files the agent can access

- Which directories (pages) or files the agent can’t access

- A sitemap (optional) to tell search engines which pages and files you deem important

Crawlers ignore lines that don’t match these directives.

For example, let’s say you don’t want Google crawling your /clients/ directory because it’s just for internal use.

The first group would look something like this:

User-agent: Googlebot
Disallow: /clients/

Additional instructions can be added in a separate line below, like so:

User-agent: Googlebot
Disallow: /clients/
Disallow: /not-for-google

Once you’re done with Google’s specific instructions, hit enter twice to create a new group of directives.

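Let’s make this one for all other search engines and prevent them from crawling your /archive/ and /support/ directories because they’re for internal use only. Googlebot will follow only its own, more specific group, so repeat these lines there if you want Google to skip them too.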

It would look like this:

User-agent: Googlebot
Disallow: /clients/
Disallow: /not-for-google

User-agent: *
Disallow: /archive/
Disallow: /support/

Once you’re finished, add your sitemap.

Your finished robots.txt file would look something like this:

User-agent: Googlebot
Disallow: /clients/
Disallow: /not-for-google

User-agent: *
Disallow: /archive/
Disallow: /support/

Sitemap: https://www.yourwebsite.com/sitemap.xml

Save your robots.txt file. Remember, it must be named robots.txt.

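Note: Google follows the most specific group that matches a crawler’s name, wherever that group sits in the file, so group order doesn’t change how Googlebot reads your rules. Some simpler parsers do take the first matching group, though, so it’s still a good habit to start with specific user agents and end with the general wildcard (*) that matches all crawlers.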
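If you generate robots.txt from a script (for example, during a site build), you can assemble the finished file above from data. Here’s a minimal Python sketch. render_robots_txt is a hypothetical helper that only emits Disallow rules, one group per user-agent, with the sitemap last.

```python
from __future__ import annotations


def render_robots_txt(groups: list[tuple[str, list[str]]], sitemap: str | None = None) -> str:
    """Build robots.txt text from (user_agent, disallowed_paths) pairs.

    Each group gets one User-agent line followed by one Disallow line per path.
    Groups are separated by a blank line, and the optional Sitemap goes last.
    """
    blocks = []
    for user_agent, paths in groups:
        lines = [f"User-agent: {user_agent}"]
        lines += [f"Disallow: {path}" for path in paths]
        blocks.append("\n".join(lines))
    if sitemap:
        blocks.append(f"Sitemap: {sitemap}")
    return "\n\n".join(blocks) + "\n"


robots = render_robots_txt(
    [
        ("Googlebot", ["/clients/", "/not-for-google"]),  # specific user agent first
        ("*", ["/archive/", "/support/"]),                # general wildcard last
    ],
    sitemap="https://www.yourwebsite.com/sitemap.xml",
)
print(robots)
```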

3. Upload the Robots.txt File

After you’ve saved the robots.txt file to your computer, upload it to your site and make it available for search engines to crawl.

Unfortunately, there’s no universal tool for this step.

Uploading the robots.txt file depends on your site’s file structure and web hosting.

Search online or reach out to your hosting provider for help on uploading your robots.txt file.

For example, you can search for "upload robots.txt file to WordPress."

Below are some articles explaining how to upload your robots.txt file in the most popular platforms:

- Robots.txt file in WordPress

- Robots.txt file in Wix

- Robots.txt file in Joomla

- Robots.txt file in Shopify

- Robots.txt file in BigCommerce

After uploading, check if anyone can see it and if Google can read it.

Here’s how.

4. Test Your Robots.txt

First, test whether your robots.txt file is publicly accessible (i.e., if it was uploaded correctly).

Open a private window in your browser and search for your robots.txt file.

For example, https://semrush.com/robots.txt.

If you see your robots.txt file with the content you added, you’re ready to test the rules themselves.

Google offers two options for testing robots.txt markup:

- The robots.txt Tester in Search Console

- Google’s open-source robots.txt library (advanced)

Because the second option is geared toward advanced developers, let’s test your robots.txt file in Search Console.

Note: You must have a Search Console account set up to test your robots.txt file.

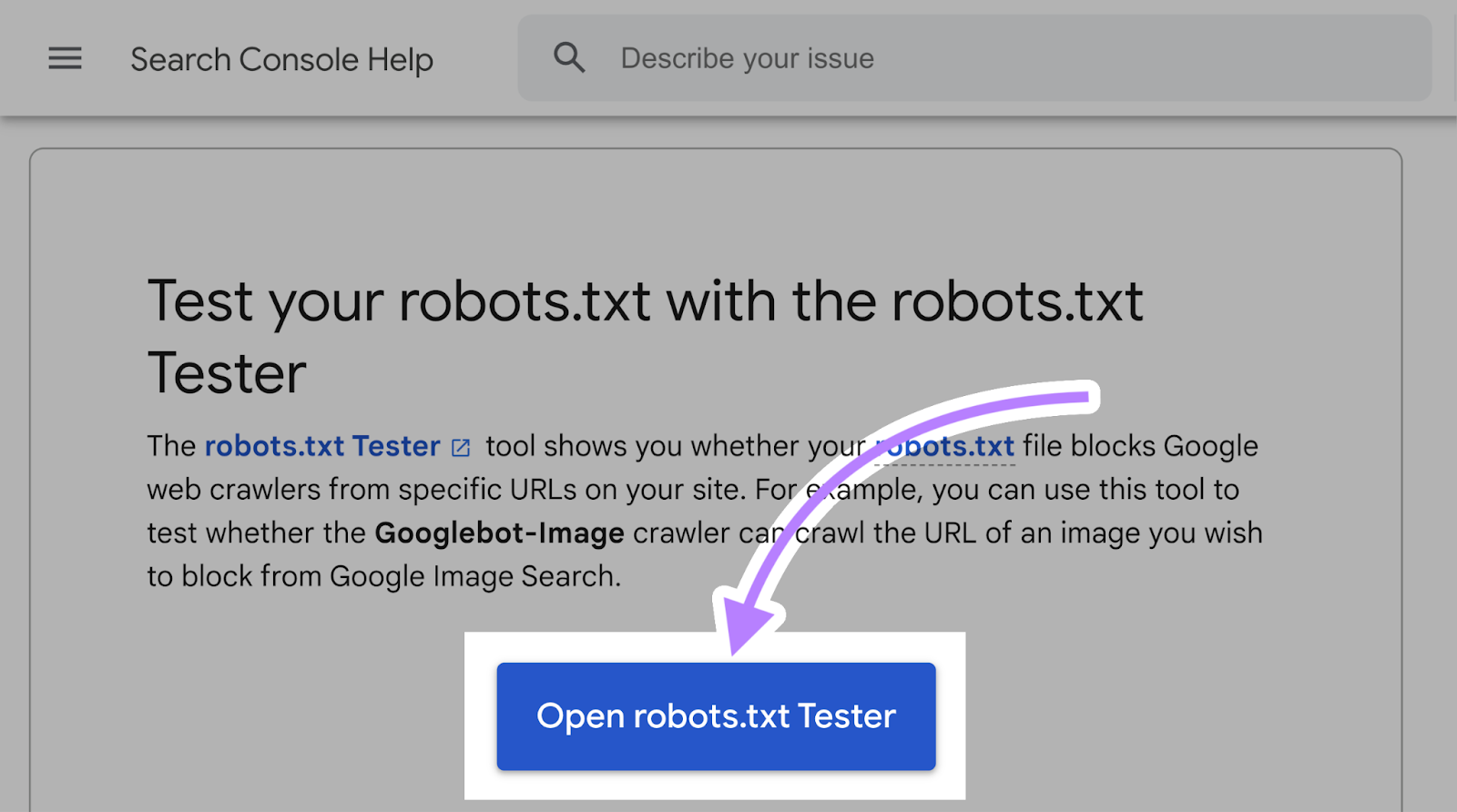

Go to the robots.txt Tester and click on “Open robots.txt Tester.”

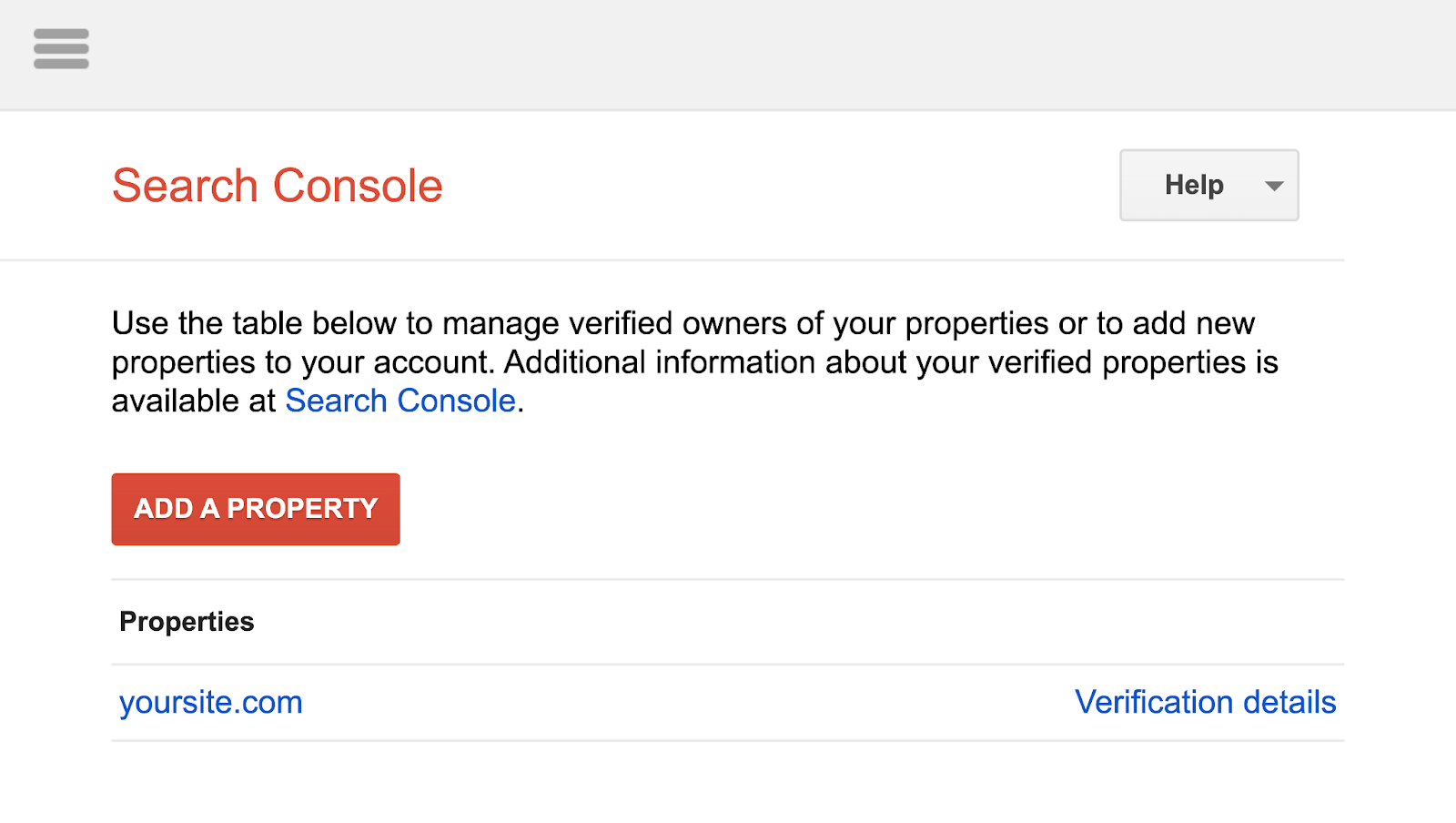

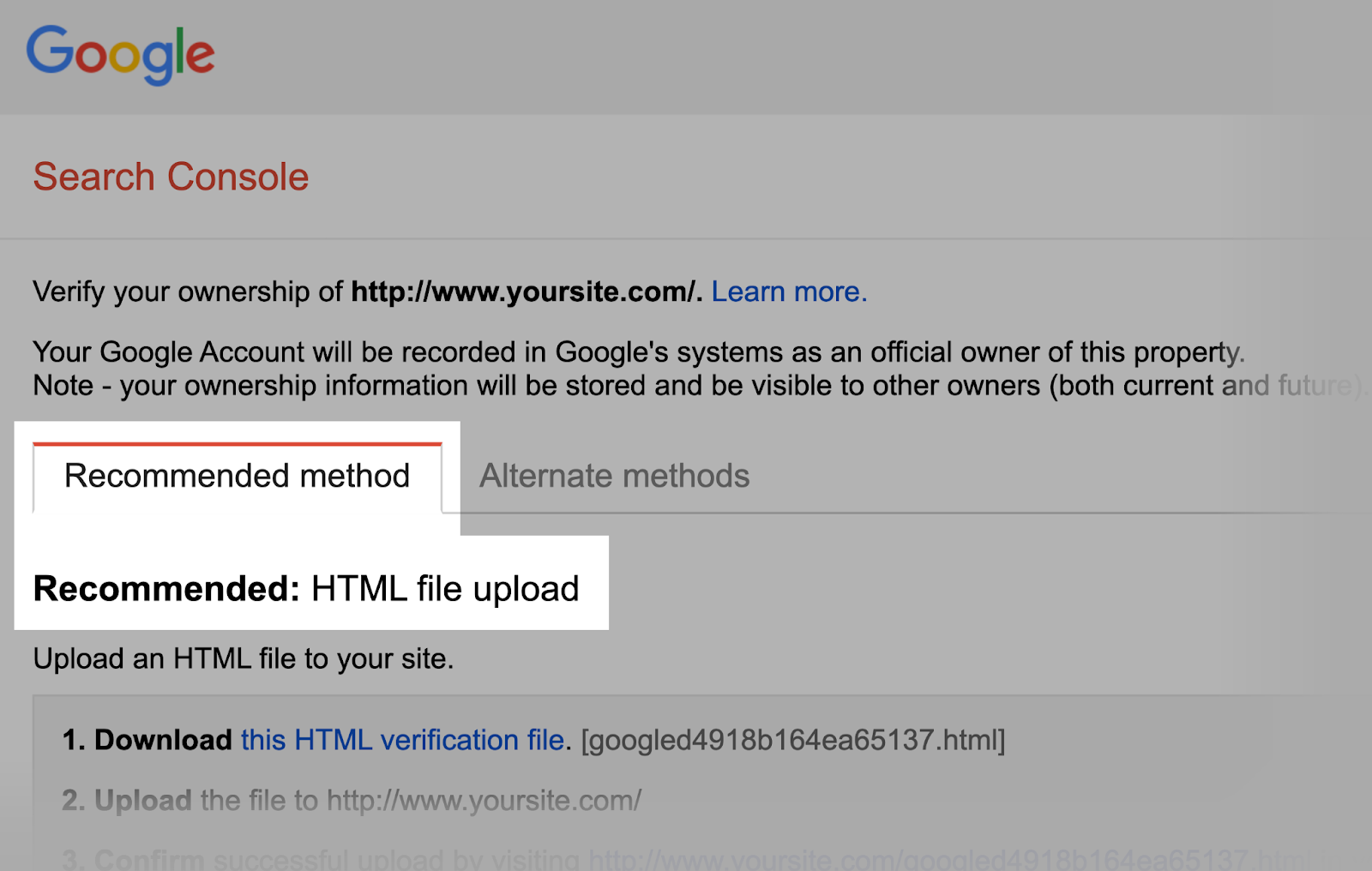

If you haven’t linked your website to your Google Search Console account, you’ll need to add a property first.

Then, verify you are the site’s real owner.

Note: Google is planning to shut down this setup wizard. So in the future, you’ll have to directly verify your property in the Search Console. Read our full guide to Google Search Console to learn how.

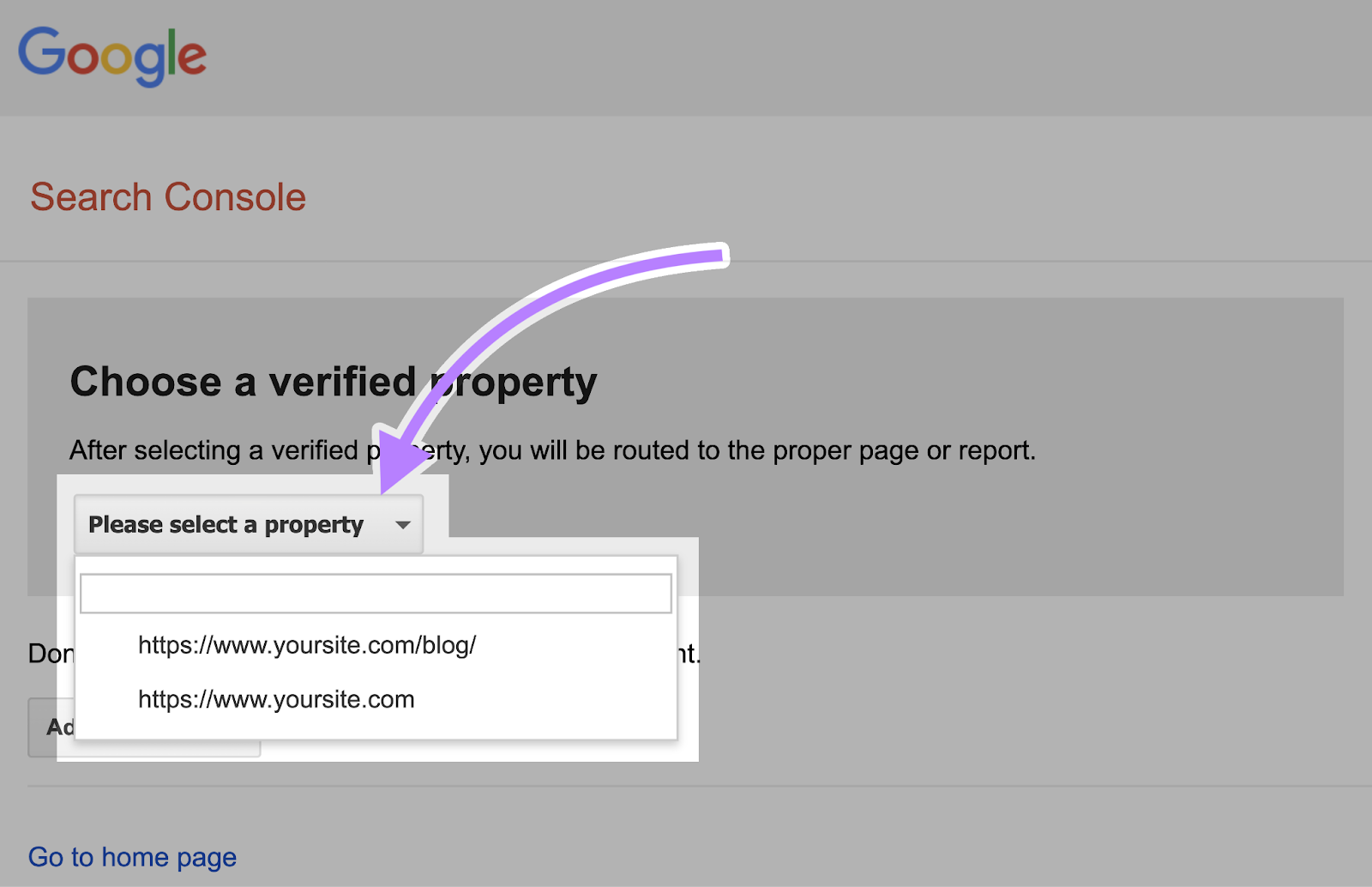

If you have existing verified properties, select one from the drop-down list on the Tester’s homepage.

The Tester will identify syntax warnings or logic errors and display the total number of warnings and errors below the editor.

You can edit errors or warnings directly on the page and retest as you go.

Changes you make on the page aren’t saved to your site. The tool only tests against the copy hosted in the tool, not the actual file on your server.

To implement any changes, copy and paste the edited test copy into the robots.txt file on your site.

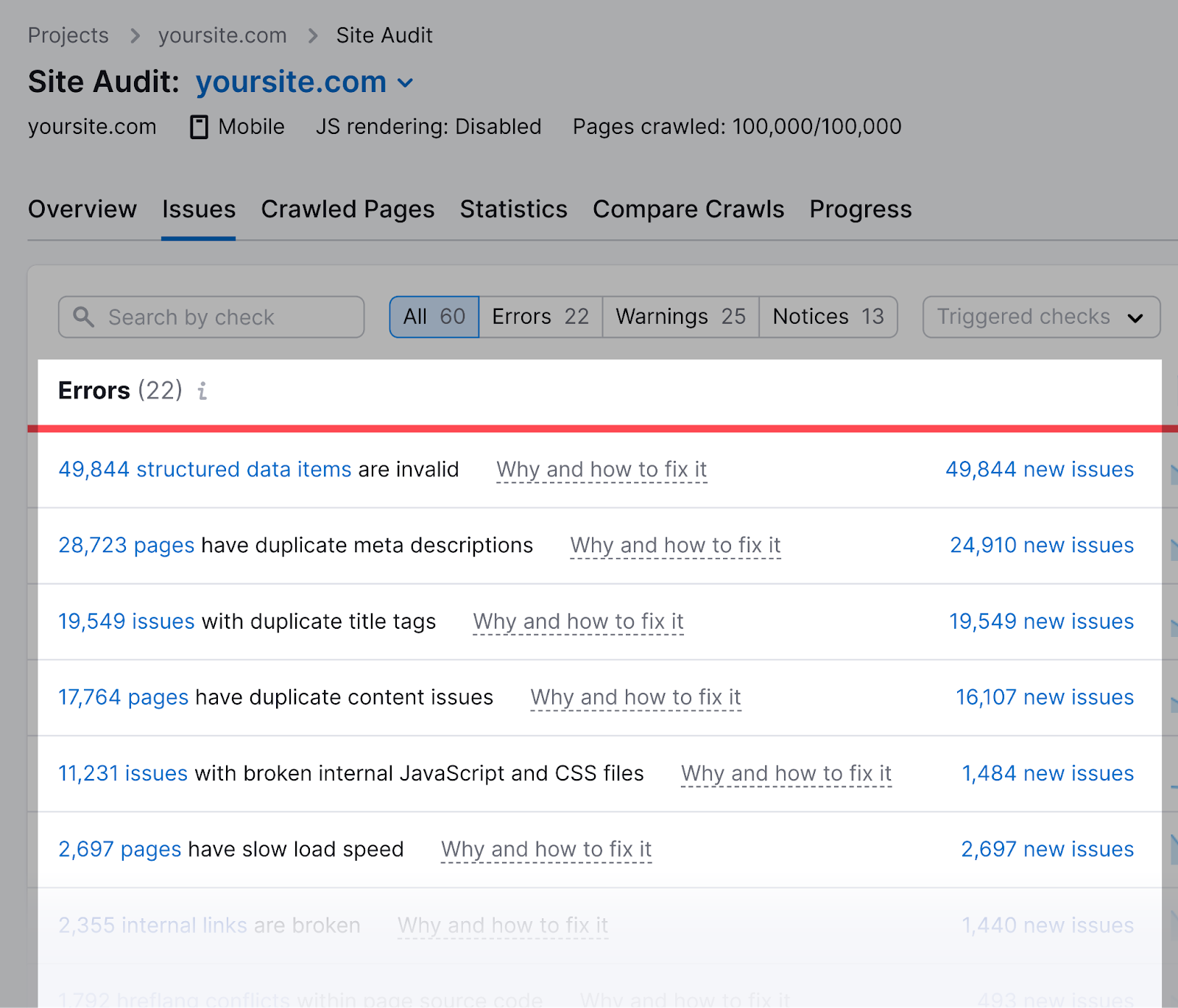

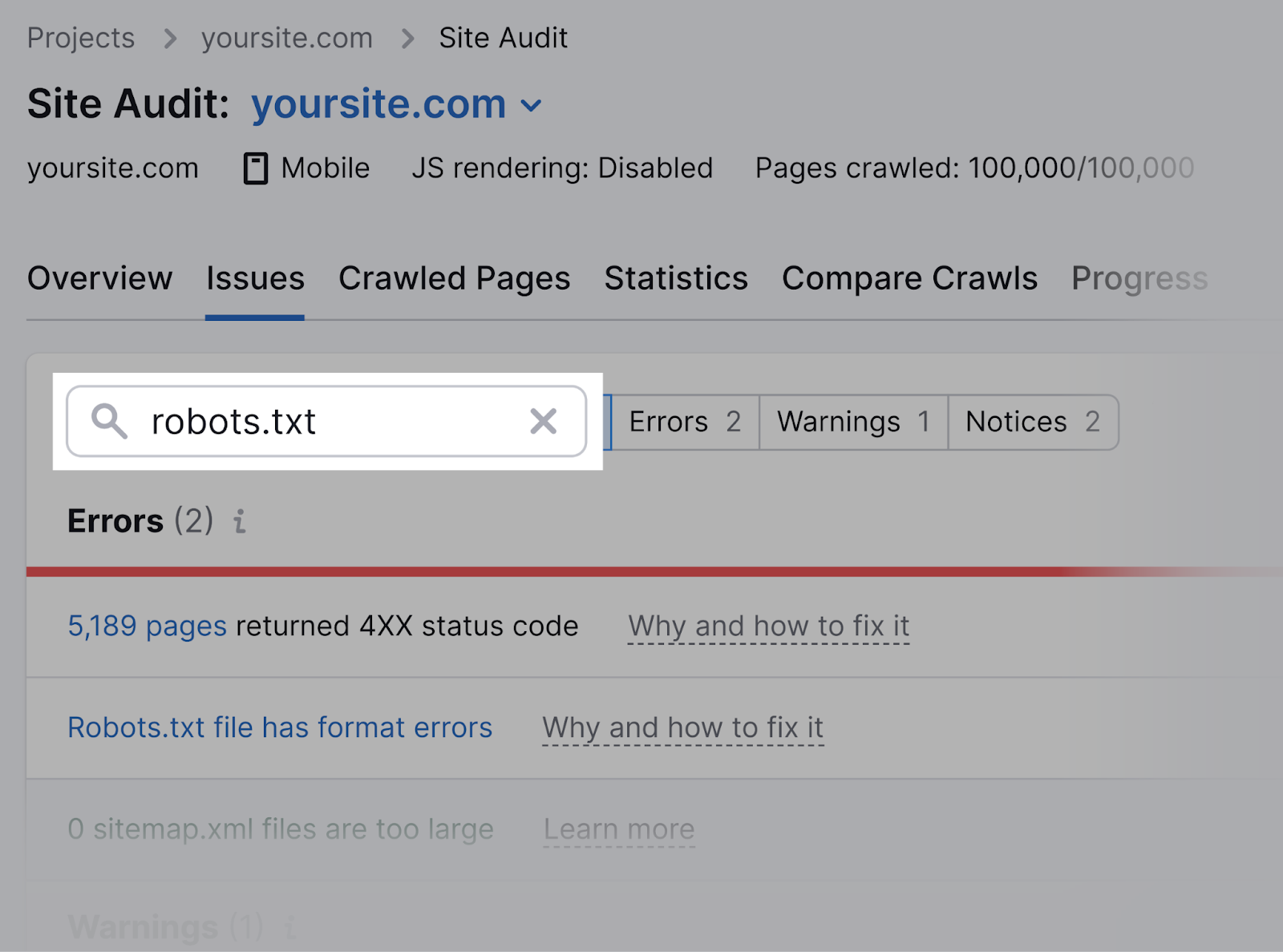

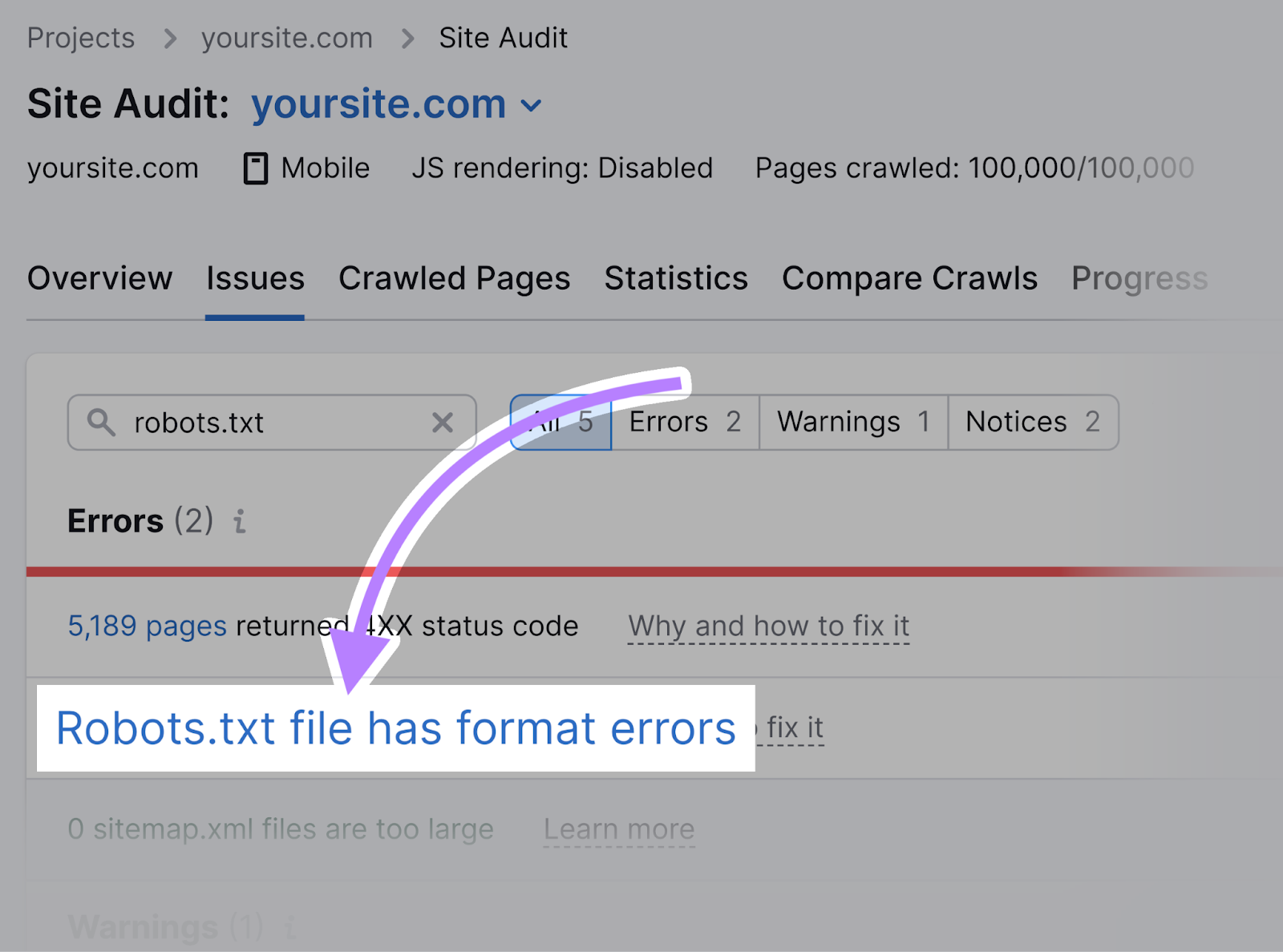

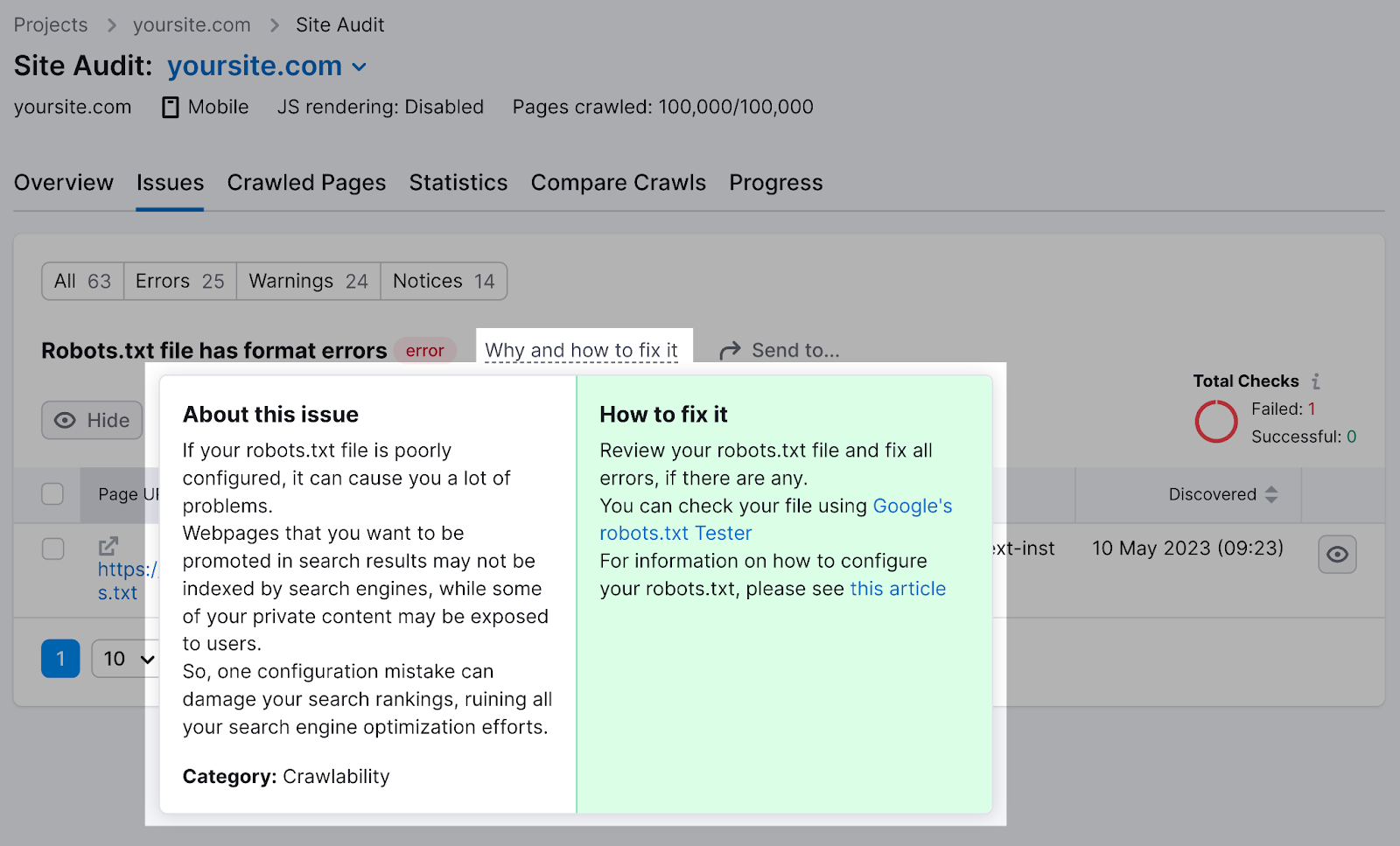

Semrush’s Site Audit tool can check for issues regarding your robots.txt file.

First, set up a project in the tool and audit your website.

Once complete, navigate to the “Issues” tab and search for “robots.txt.”

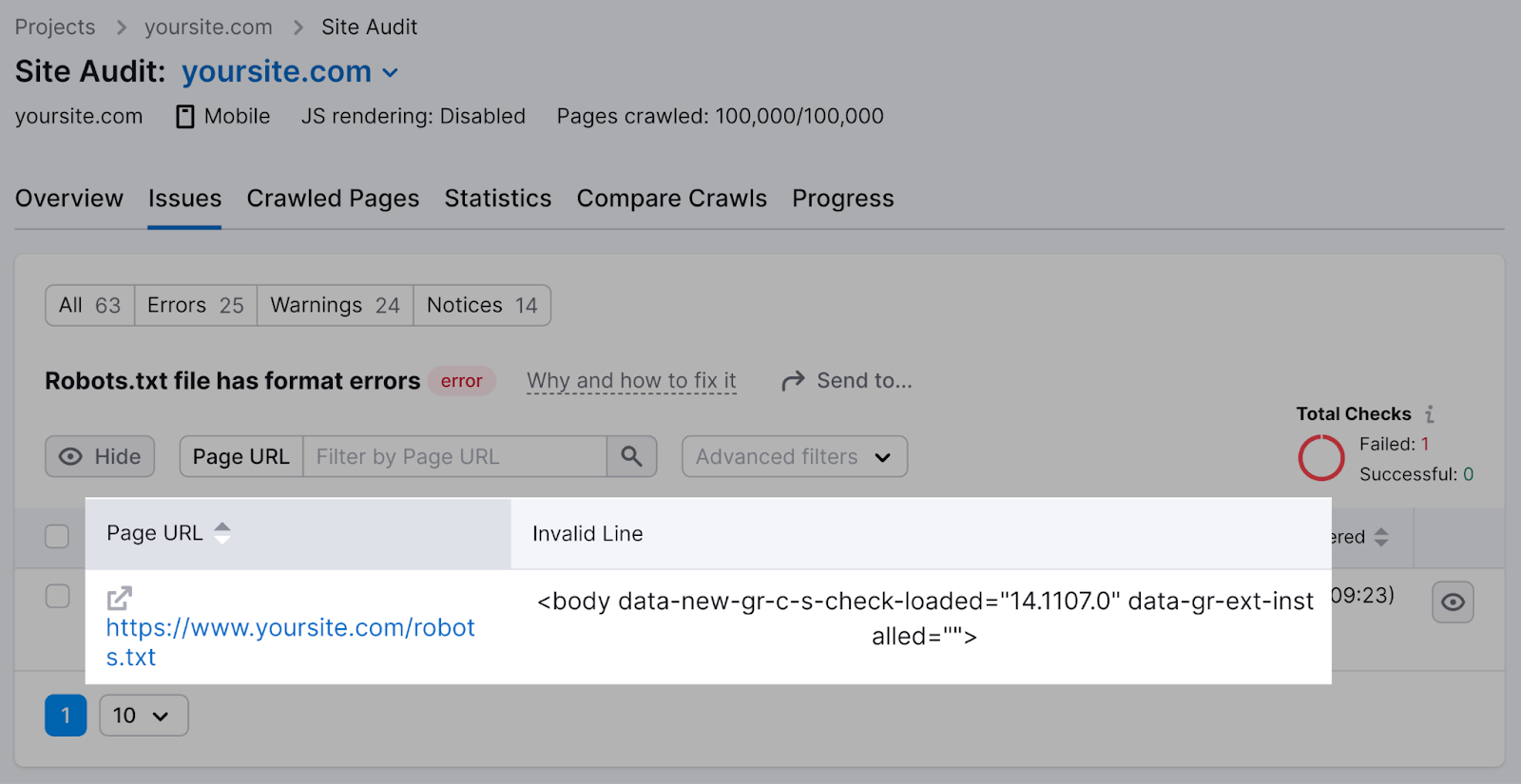

Click on the “Robots.txt file has format errors” link if it turns out that your file has format errors.

You’ll see a list of specific invalid lines.

You can click “Why and how to fix it” to get specific instructions on how to fix the error.

Checking your robots.txt file for issues is important, as even minor mistakes can negatively affect your site’s indexability.
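For a quick local check alongside those tools, Python’s standard library ships urllib.robotparser. The rough sketch below loads the finished example file from earlier and asks which URLs different bots may fetch. The URLs are hypothetical. Keep the module’s limits in mind: it applies the first matching rule in file order and doesn’t understand * or $ inside paths. Treat it as a sanity check, not a substitute for Google’s own tools.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /clients/
Disallow: /not-for-google

User-agent: *
Disallow: /archive/
Disallow: /support/

Sitemap: https://www.yourwebsite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse text directly instead of fetching it

checks = [
    ("Googlebot", "https://www.yourwebsite.com/clients/list"),
    ("Googlebot", "https://www.yourwebsite.com/archive/2020"),
    ("Bingbot", "https://www.yourwebsite.com/archive/2020"),
    ("Bingbot", "https://www.yourwebsite.com/clients/list"),
]
for agent, url in checks:
    verdict = "allowed" if parser.can_fetch(agent, url) else "blocked"
    print(f"{agent}: {url} -> {verdict}")
```

Two results are worth a second look. Googlebot is allowed into /archive/ because it follows only its own group and ignores the * group. Bingbot has no group of its own, so it falls back to * and is blocked there.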

Robots.txt Best Practices

Use New Lines for Each Directive

Each directive should sit on a new line.

Otherwise, search engines won’t be able to read them, and your instructions will be ignored.

Incorrect:

User-agent: * Disallow: /admin/
Disallow: /directory/

Correct:

User-agent: *
Disallow: /admin/
Disallow: /directory/

Use Each User-Agent Once

Bots don’t mind if you enter the same user-agent multiple times.

But referencing it only once keeps things neat and simple. And reduces the chance of human error.

Confusing:

User-agent: Googlebot
Disallow: /example-page

User-agent: Googlebot
Disallow: /example-page-2

Notice how the Googlebot user-agent is listed twice.

Clear:

User-agent: Googlebot
Disallow: /example-page
Disallow: /example-page-2

In the first example, Google would still follow the instructions and not crawl either page.

But writing all directives under the same user-agent is cleaner and helps you stay organized.
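Google treats repeated groups for the same user-agent as one combined group, which is why the first example still works. Here’s a small Python sketch of that merging step. The names are hypothetical, and user-agent names are lowercased because user-agent matching is case-insensitive.

```python
from __future__ import annotations


def merge_groups(groups: list[tuple[str, list[str]]]) -> dict[str, list[str]]:
    """Combine groups that name the same user-agent (case-insensitively).

    Each distinct user-agent appears once, and rules keep their original order.
    """
    merged = {}
    for user_agent, rules in groups:
        merged.setdefault(user_agent.lower(), []).extend(rules)
    return merged


duplicated = [
    ("Googlebot", ["Disallow: /example-page"]),
    ("Googlebot", ["Disallow: /example-page-2"]),
]
print(merge_groups(duplicated))
# {'googlebot': ['Disallow: /example-page', 'Disallow: /example-page-2']}
```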

Use Wildcards to Clarify Directions

You can use wildcards (*) to apply a directive to all user-agents and match URL patterns.

For example, to prevent search engines from accessing URLs with parameters, you could technically list them out one by one.

But that’s inefficient. You can simplify your directions with a wildcard.

Inefficient:

User-agent: *
Disallow: /shoes/vans?
Disallow: /shoes/nike?
Disallow: /shoes/adidas?

Efficient:

User-agent: *
Disallow: /shoes/*?

The above example blocks all search engine bots from crawling any URL under the /shoes/ subfolder that contains a question mark.
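The single wildcard rule does more than shorten the file, as this small Python sketch shows by comparing it with the explicit list above. The matching function is a simplified, hypothetical stand-in for a crawler’s matcher (it handles * but not $), and /shoes/asics?sort=price is a made-up URL.

```python
import re


def wildcard_rule_matches(pattern: str, path: str) -> bool:
    """Check a robots.txt pattern containing * against a URL path.

    * matches any run of characters; everything else, including "?", is literal.
    Like any robots.txt rule, the pattern only needs to match the START of the path.
    """
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.match(regex, path) is not None


explicit_rules = ["/shoes/vans?", "/shoes/nike?", "/shoes/adidas?"]
sample_paths = ["/shoes/vans?size=9", "/shoes/asics?sort=price", "/shoes/vans"]

for path in sample_paths:
    by_list = any(path.startswith(rule) for rule in explicit_rules)
    by_wildcard = wildcard_rule_matches("/shoes/*?", path)
    print(f"{path}: explicit list blocks={by_list}, wildcard blocks={by_wildcard}")
```

The wildcard also blocks /shoes/asics?sort=price even though no rule names that brand. That’s usually the point, but it’s worth checking before you deploy a broad pattern.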

Use ‘$’ to Indicate the End of a URL

Adding the “$” indicates the end of a URL.

For example, if you want to block search engines from crawling all .jpg files on your site, you can list them individually.

But that would be inefficient.

Inefficient:

User-agent: *
Disallow: /photo-a.jpg
Disallow: /photo-b.jpg
Disallow: /photo-c.jpg

Instead, add the “$” feature, like so:

Efficient:

User-agent: *
Disallow: /*.jpg$

Note: In this example, /dog.jpg can’t be crawled, but /dog.jpg?p=32414 can be because it doesn’t end with “.jpg.”

The “$” expression is a helpful feature in specific circumstances such as the above. But it can also be dangerous.

You can easily unblock things you didn’t mean to, so be prudent in its application.
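The end-of-URL behavior can be checked with a small Python sketch. rule_matches is a hypothetical, simplified matcher that supports * and a trailing $.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Check a robots.txt pattern against a URL path (including any query string).

    * matches any run of characters. A trailing $ means the path must end right
    there; without it, the pattern only has to match the start of the path.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    regex = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    if anchored:
        regex += r"\Z"  # \Z = end of string, so nothing may follow ".jpg"
    return re.match(regex, path) is not None


print(rule_matches("/*.jpg$", "/dog.jpg"))          # True  (blocked)
print(rule_matches("/*.jpg$", "/dog.jpg?p=32414"))  # False (crawlable)
print(rule_matches("/*.jpg", "/dog.jpg"))           # True
print(rule_matches("/*.jpg", "/dog.jpg?p=32414"))   # True: without $, the query string doesn't matter
```

The last two checks show the trade-off: dropping the $ makes the rule block /dog.jpg?p=32414 as well.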

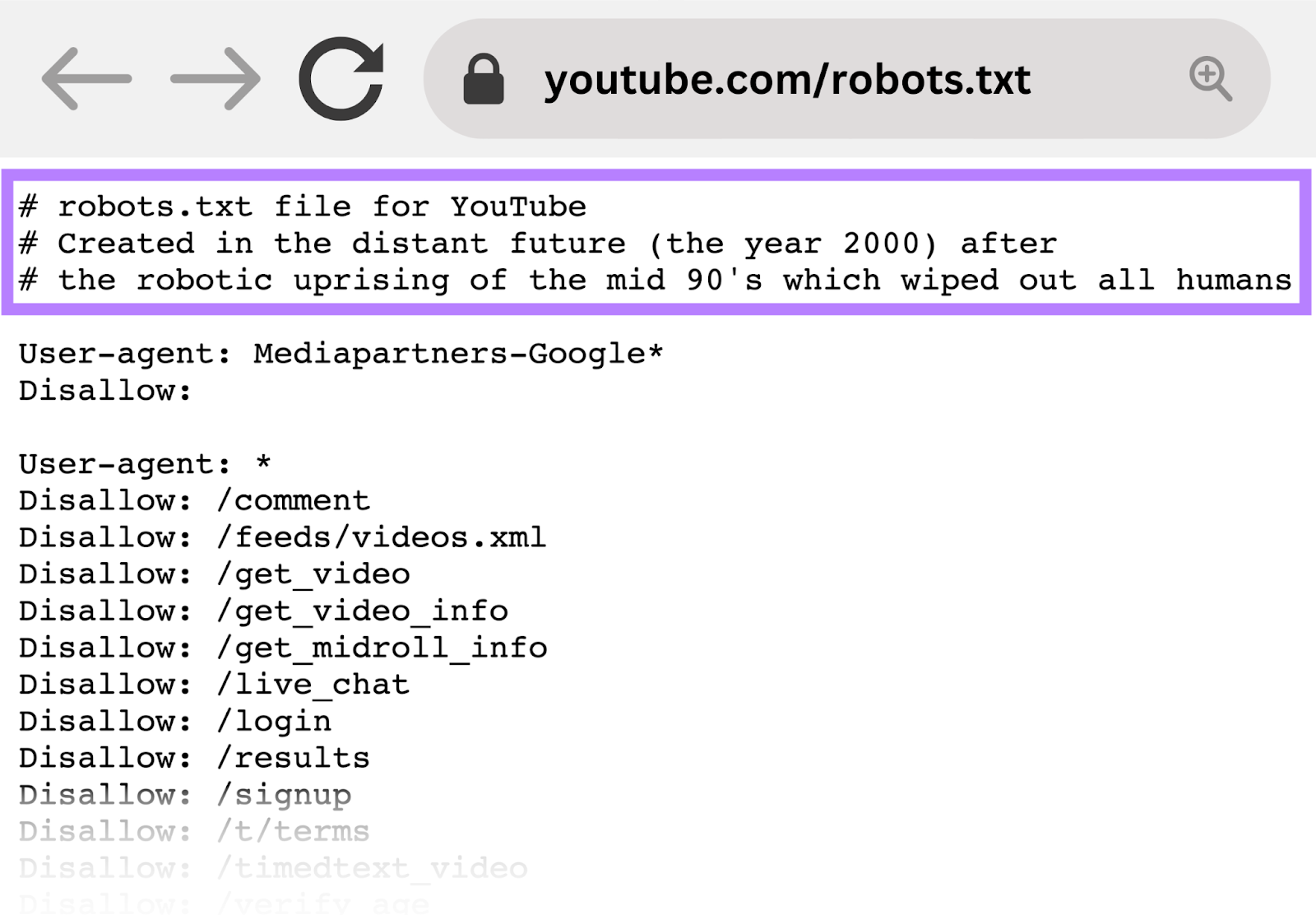

Use the Hash (#) to Add Comments

Crawlers ignore everything from a hash (#) to the end of the line.

So, developers often use a hash to add a comment in the robots.txt file. It helps keep the file organized and easy to read.

To add a comment, begin the line with a hash (#).

Like this:

User-agent: *
#Landing Pages
Disallow: /landing/
Disallow: /lp/
#Files
Disallow: /files/
Disallow: /private-files/
#Websites
Allow: /website/*
Disallow: /website/search/*

Developers occasionally include funny messages in robots.txt files because they know users rarely see them.

For example, YouTube’s robots.txt file reads: “Created in the distant future (the year 2000) after the robotic uprising of the mid 90’s which wiped out all humans.”

And Nike’s robots.txt reads “just crawl it” (a nod to its “just do it” tagline) and includes its logo.
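Whatever the message, a crawler discards comments before reading any rules. Here’s a minimal Python sketch of that first step; strip_comment and the trailing comment on the last line are illustrative. Python’s urllib.robotparser trims comments the same way before parsing each line.

```python
def strip_comment(line: str) -> str:
    """Drop a robots.txt comment: everything from the first # to the end of the line."""
    return line.split("#", 1)[0].strip()


lines = [
    "#Landing Pages",
    "Disallow: /landing/",
    "Disallow: /lp/  # old campaign URLs",
]
print([strip_comment(line) for line in lines])
# ['', 'Disallow: /landing/', 'Disallow: /lp/']
```

A line that was only a comment comes back empty and is skipped, so comments never change which rules apply.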

Use Separate Robots.txt Files for Different Subdomains

Robots.txt files control crawling behavior only on the subdomain in which they’re hosted.

To control crawling on a different subdomain, you’ll need a separate robots.txt file.

So, if your main site lives on domain.com and your blog lives on the subdomain blog.domain.com, you’d need two robots.txt files.

One for the main domain's root directory and the other for your blog’s root directory.
