Search engines like Google use website crawlers to read and understand webpages.

But SEO professionals can also use web crawlers to uncover issues and opportunities within their own sites. Or to extract information from competing websites.

There are tons of crawling and scraping tools available online. While some are useful for SEO and data collection, others may have questionable intentions or pose potential risks.

To help you navigate the world of website crawlers, we’ll walk you through what crawlers are, how they work, and how you can safely use the right tools to your advantage.

What Is a Website Crawler?

A web crawler is a bot that automatically accesses and processes webpages to understand their content.

Web crawlers go by many names, like:

- Crawler

- Bot

- Spider

- Spiderbot

The spider nicknames come from the fact that these bots crawl across the World Wide Web.

Search engines use crawlers to discover and categorize webpages. Then, serve the ones they deem best to users in response to search queries.

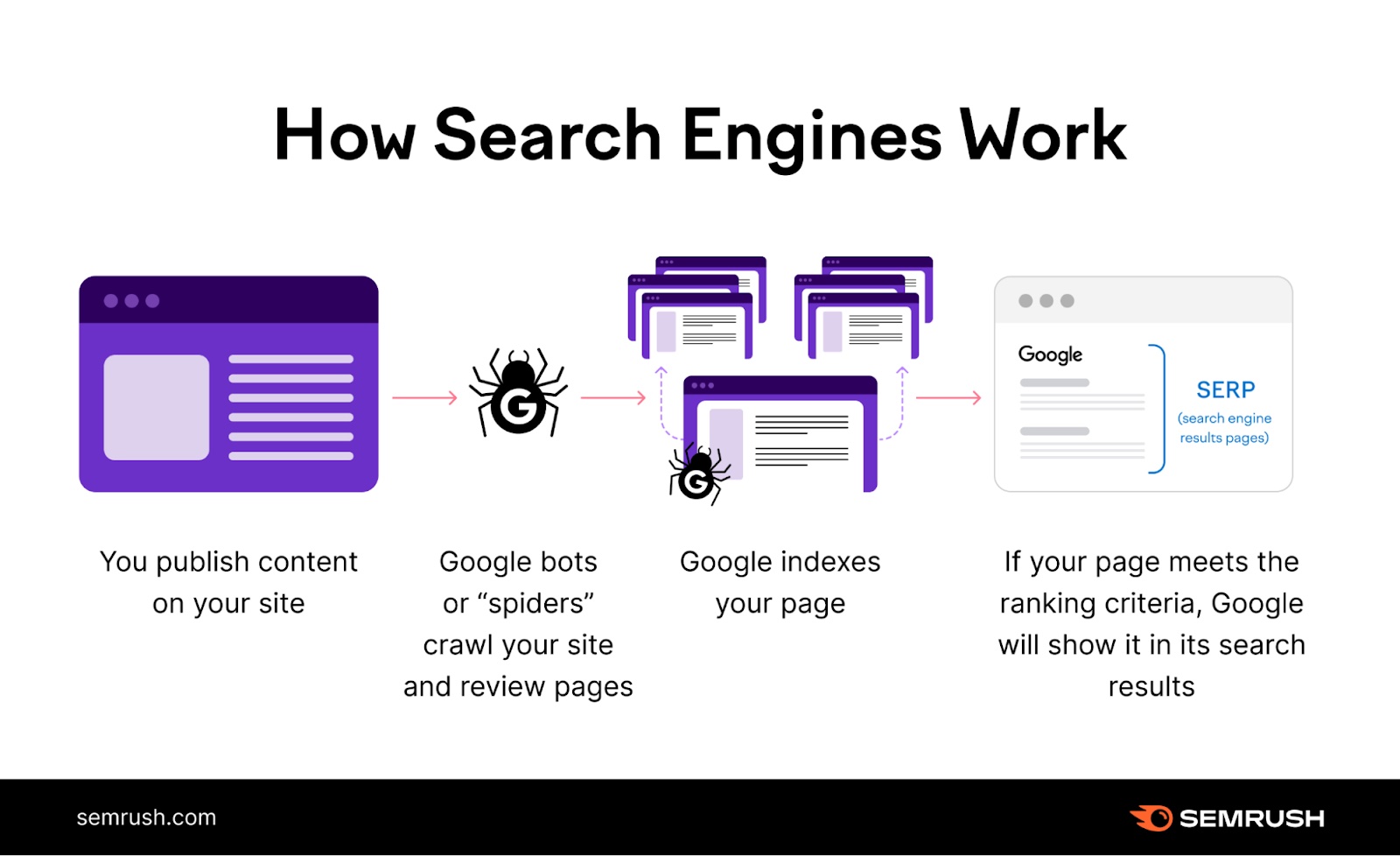

For example, Google’s web crawlers are key players in the search engine process:

- You publish or update content on your website

- Bots crawl your site’s new or updated pages

- Google indexes the pages its crawlers find, though certain issues can prevent indexing

- Google (hopefully) presents your page in search results based on its relevance to a user’s query

But search engines aren’t the only parties that use site crawlers. You can also deploy web crawlers yourself to gather information about webpages.

Publicly available crawlers are slightly different from search engine crawlers like Googlebot or Bingbot (the unique web crawlers that Google and Bing use). But they work in a similar way—they access a website and “read” it as a search engine crawler would.

And you can use information from these types of crawlers to improve your website. Or to better understand other websites.

How Do Web Crawlers Work?

Web crawlers scan three major elements on a webpage: content, code, and links.

By reading the content, bots can assess what a page is about. This information helps search engine algorithms determine which pages have the answers users are looking for when they make a search.

That’s why using SEO keywords strategically is so important. They help improve an algorithm’s ability to connect that page to related searches.

While reading a page’s content, web spiders are also crawling a page’s HTML code. (All websites are composed of HTML code that structures each webpage and its content.)

And you can use certain HTML code (like meta tags) to help crawlers better understand your page’s content and purpose.

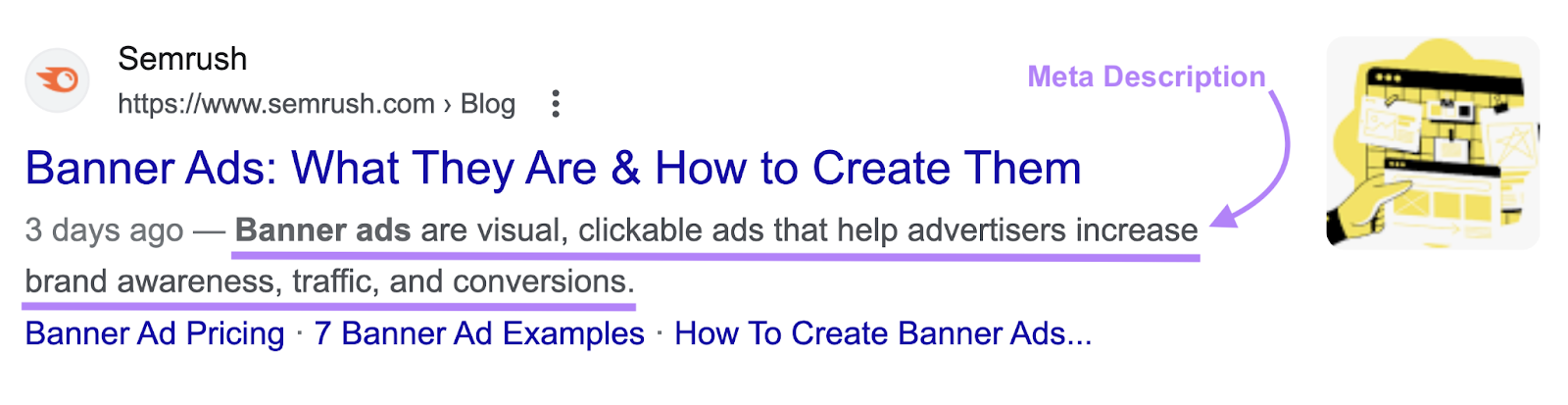

For example, you can influence how your page might appear in Google search results using a meta description tag.

Here’s a meta description:

And here’s the code for that meta description tag:

Leveraging meta tags is just another way to give search engine crawlers useful information about your page so it can get indexed appropriately.
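To make this concrete, here is a minimal Python sketch of how a crawler might read a page’s title and meta description. The sample markup, the `MetaDescriptionParser` class, and the `read_meta` helper are all hypothetical and exist only for illustration; real search engine crawlers are far more sophisticated.

```python
from html.parser import HTMLParser


class MetaDescriptionParser(HTMLParser):
    """Collects the page title and meta description while parsing HTML."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content")

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


# Hypothetical page markup, made up for this example.
SAMPLE_HTML = """
<html>
  <head>
    <title>Example Bakery</title>
    <meta name="description" content="Fresh bread baked daily in Springfield.">
  </head>
  <body><p>Welcome!</p></body>
</html>
"""


def read_meta(html):
    """Return (title, meta description) for an HTML string."""
    parser = MetaDescriptionParser()
    parser.feed(html)
    return parser.title.strip(), parser.description


title, description = read_meta(SAMPLE_HTML)
print(title)
print(description)
```

A page with no meta description tag would return `None` for the description, which is the kind of gap a site audit typically flags.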

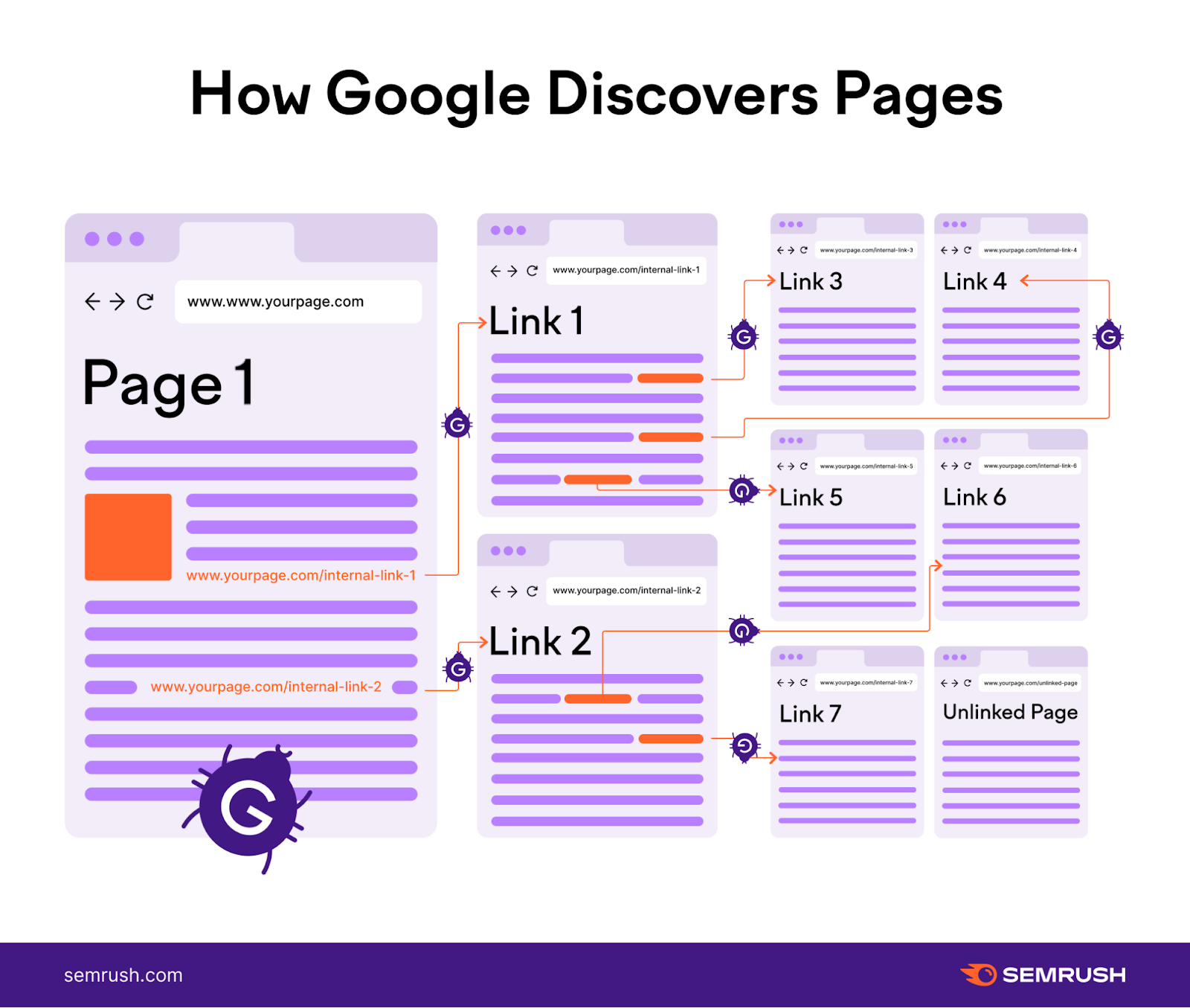

Crawlers need to scour billions of webpages. To accomplish this, they follow pathways. Those pathways are largely determined by internal links.

If Page A links to Page B within its content, the bot can follow the link from Page A to Page B. And then process Page B.

This is why internal linking is so important for SEO. It helps search engine crawlers find and index all the pages on your site.
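The Page A to Page B idea can be sketched as a small breadth-first crawl. This is a simplified illustration, not how Googlebot actually works: the `SITE` dictionary is a made-up site held in memory so that nothing is fetched over the network, and `crawl` and `LinkParser` are hypothetical names.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


# Hypothetical site: URL -> HTML. The orphan page has no inbound links.
SITE = {
    "https://example.com/": '<a href="/a">Page A</a>',
    "https://example.com/a": '<a href="/b">Page B</a> <a href="https://other.example/">Away</a>',
    "https://example.com/b": '<a href="/">Home</a>',
    "https://example.com/orphan": "<p>Nothing links here.</p>",
}


def crawl(start_url, fetch):
    """Breadth-first crawl that follows internal links only.

    `fetch` takes a URL and returns HTML, or None if the page is unavailable.
    """
    host = urlparse(start_url).netloc
    seen = {start_url}
    queue = deque([start_url])
    crawled = []
    while queue:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        crawled.append(url)
        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)
            # Stay on the same host and never queue a page twice.
            if urlparse(absolute).netloc == host and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return crawled


crawled = crawl("https://example.com/", SITE.get)
print(crawled)
```

Note that the orphan page never appears in the result. Because no other page links to it, a crawler that relies on links alone has no path to reach it.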

Why You Should Crawl Your Own Website

Auditing your own site using a web crawler allows you to find crawlability and indexability issues that might otherwise slip through the cracks.

Crawling your own site also allows you to see your site the way a search engine crawler would. To help you optimize it.

Here are just a few examples of important use cases for a personal site audit:

Ensuring Google Crawlers Can Easily Navigate Your Site

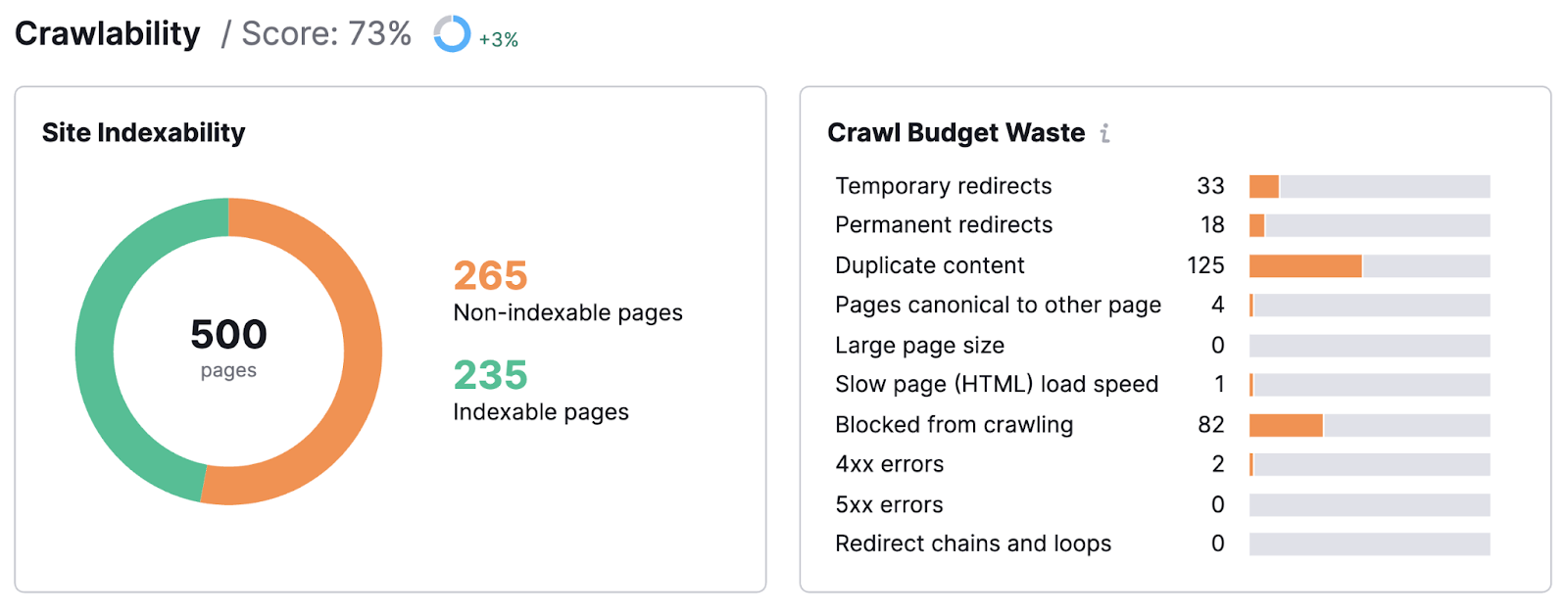

A site audit can tell you exactly how easy it is for Google bots to navigate your site. And process its content.

For example, you can find which types of issues prevent your site from being crawled effectively. Like temporary redirects, duplicate content, and more.

Your site audit may even uncover pages that Google isn’t able to index.

This might be due to any number of reasons. But whatever the cause, you need to fix it. Or risk losing time, money, and ranking power.

The good news is once you’ve identified problems, you can resolve them. And get back on the path to SEO success.

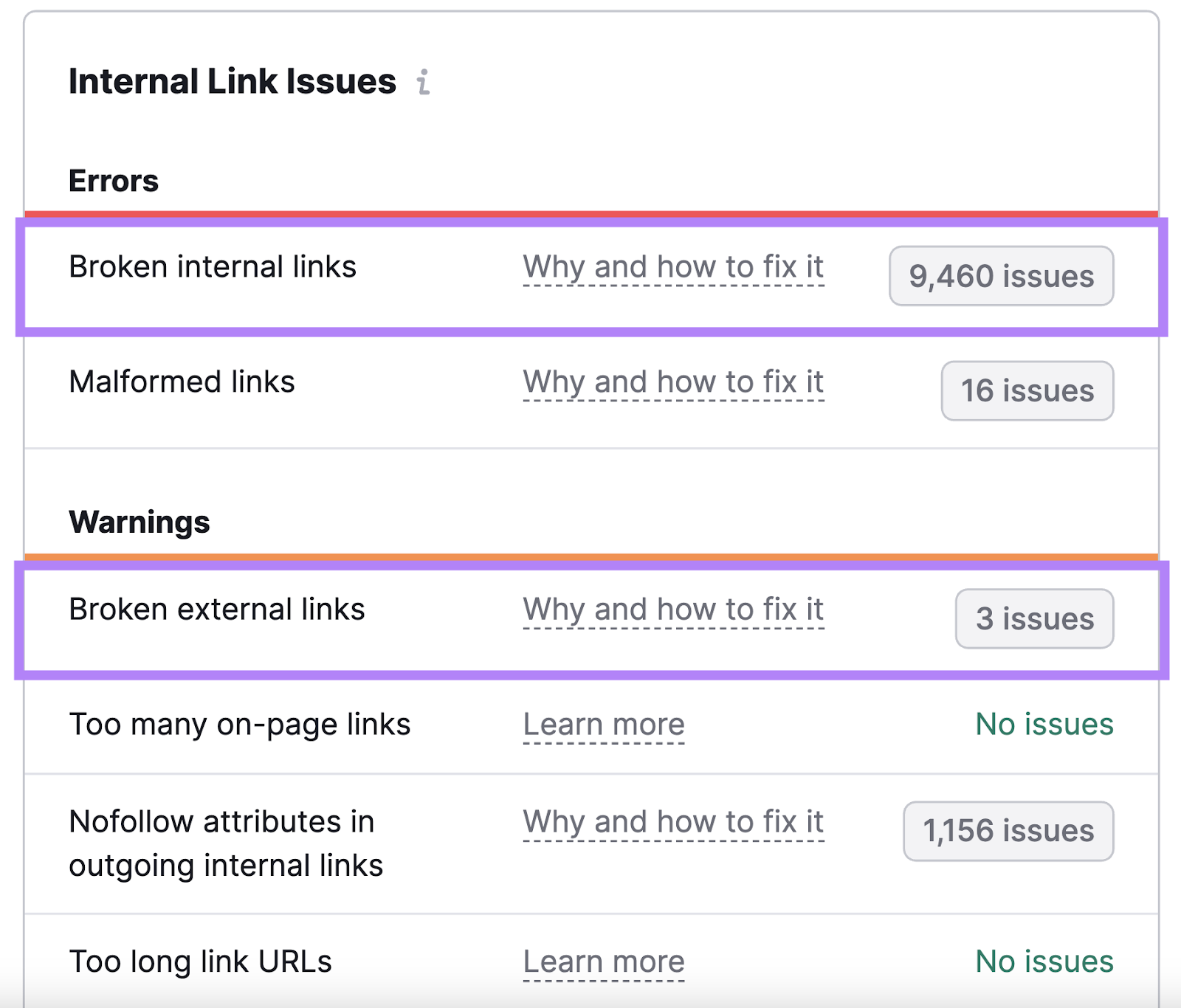

Identifying Broken Links to Improve Site Health and Link Equity

Broken links are one of the most common linking mistakes.

They’re a nuisance to users. And to Google’s web crawlers—because they make your site appear poorly maintained or coded.

Find broken links and fix them to ensure strong site health.

The fixes themselves can be straightforward: remove the link, replace it, or contact the owner of the website you’re linking to (if it’s an external link) and report the issue.
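If you want a rough idea of how a broken link check works, here is a minimal Python sketch. It treats any link that returns a 4xx or 5xx status, or that fails to respond at all, as broken. The function names are hypothetical, and the check uses `HEAD` requests, which some servers reject, so treat it as a starting point rather than a complete audit.

```python
from urllib.error import HTTPError
from urllib.request import Request, urlopen


def http_status(url, timeout=10):
    """Return the HTTP status code for a URL, or None if the request fails outright."""
    request = Request(url, method="HEAD", headers={"User-Agent": "link-check-sketch"})
    try:
        with urlopen(request, timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        # The server answered, but with an error status such as 404.
        return error.code
    except OSError:
        # DNS failures, refused connections, and timeouts land here.
        return None


def find_broken_links(links, get_status=http_status):
    """Return (url, status) pairs for links that look broken."""
    broken = []
    for url in links:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken


# Hypothetical statuses so the example runs without network access.
FAKE_STATUSES = {
    "https://example.com/": 200,
    "https://example.com/old-page": 404,
}
links_to_check = list(FAKE_STATUSES) + ["https://example.com/unreachable"]
print(find_broken_links(links_to_check, FAKE_STATUSES.get))
```

To check live URLs, call `find_broken_links(links_to_check)` without the second argument so that it falls back to `http_status`.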

Finding Duplicate Content to Fix Chaotic Rankings

Duplicate content (identical or nearly identical content that can be found elsewhere on your site) can cause major SEO issues by confusing search engines.

It may cause the wrong version of a page to show up in the search results. Or, it may even look like you’re using bad-faith practices to manipulate Google.

A site audit can help you find duplicate content.

Then, you can fix it. So the right page can claim its spot in search results.
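One common way to spot near-identical pages is to compare overlapping word sequences (often called shingles) and measure how many of them two pages share. The Python sketch below shows the idea. It is not how Google or any particular audit tool detects duplicates, and the sample pages, the three-word shingle size, and the 0.8 threshold are all assumptions chosen for illustration.

```python
import re
from itertools import combinations


def shingles(text, size=3):
    """Split text into a set of overlapping word sequences."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def similarity(text_a, text_b):
    """Jaccard similarity of two texts' shingle sets, from 0.0 to 1.0."""
    a, b = shingles(text_a), shingles(text_b)
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def find_duplicates(pages, threshold=0.8):
    """Return (url_a, url_b, score) for page pairs at or above the threshold."""
    pairs = []
    for (url_a, text_a), (url_b, text_b) in combinations(pages.items(), 2):
        score = similarity(text_a, text_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs


# Hypothetical page text keyed by URL path.
PAGES = {
    "/shoes": "Our red running shoes are light, durable, and ready for any trail.",
    "/shoes?ref=email": "Our red running shoes are light, durable, and ready for any trail!",
    "/about": "We are a small family business founded in 2010.",
}

print(find_duplicates(PAGES))
```

Here the two `/shoes` URLs are flagged because a tracking parameter created a second address for the same content, which is a typical source of duplicates.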

Crawlers vs. Scrapers: Comparing Tools

Content crawlers and content scrapers are often referred to interchangeably.

But crawlers access and index website content. While scrapers are used to extract data from webpages or even entire websites.

Some malicious actors use scrapers to rip off and republish other websites’ content. Which violates those sites’ copyrights and can steal from their SEO efforts.

That said, there are legitimate use cases for scrapers.

Like scraping data for collective analysis (e.g., scraping competitors’ product listings to assess the best way to describe, price, and present similar items). Or scraping and lawfully republishing content on your own website (like by asking for explicit permission from the original publisher).
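To illustrate the difference, here is a minimal Python sketch of a scraper that pulls product names and prices out of a page for analysis. The markup and the `product-name` and `product-price` class names are hypothetical. A real scraper would need to match the target site’s actual markup and respect its terms of use.

```python
from html.parser import HTMLParser
from statistics import median


class ProductParser(HTMLParser):
    """Extracts name and price pairs from markup with hypothetical class names."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class") or ""
        if css_class == "product-name":
            self._field = "name"
            self.products.append({"name": "", "price": None})
        elif css_class == "product-price" and self.products:
            self._field = "price"

    def handle_endtag(self, tag):
        # Good enough for flat markup like the sample below.
        self._field = None

    def handle_data(self, data):
        if self._field == "name":
            self.products[-1]["name"] += data.strip()
        elif self._field == "price":
            self.products[-1]["price"] = float(data.strip().lstrip("$"))


# Hypothetical competitor listing page.
LISTING_HTML = """
<ul>
  <li><span class="product-name">Trail Shoe</span> <span class="product-price">$89.00</span></li>
  <li><span class="product-name">Road Shoe</span> <span class="product-price">$120.50</span></li>
  <li><span class="product-name">Sandal</span> <span class="product-price">$45.00</span></li>
</ul>
"""


def scrape_products(html):
    """Return a list of {'name': ..., 'price': ...} dictionaries."""
    parser = ProductParser()
    parser.feed(html)
    return parser.products


products = scrape_products(LISTING_HTML)
median_price = median(product["price"] for product in products)
print(products)
print(median_price)
```

Where a crawler would move on to follow the links on this page, the scraper stops at extracting structured data you can compare against your own listings.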

Here are some examples of good tools that fall under both categories.

3 Scraper Tools

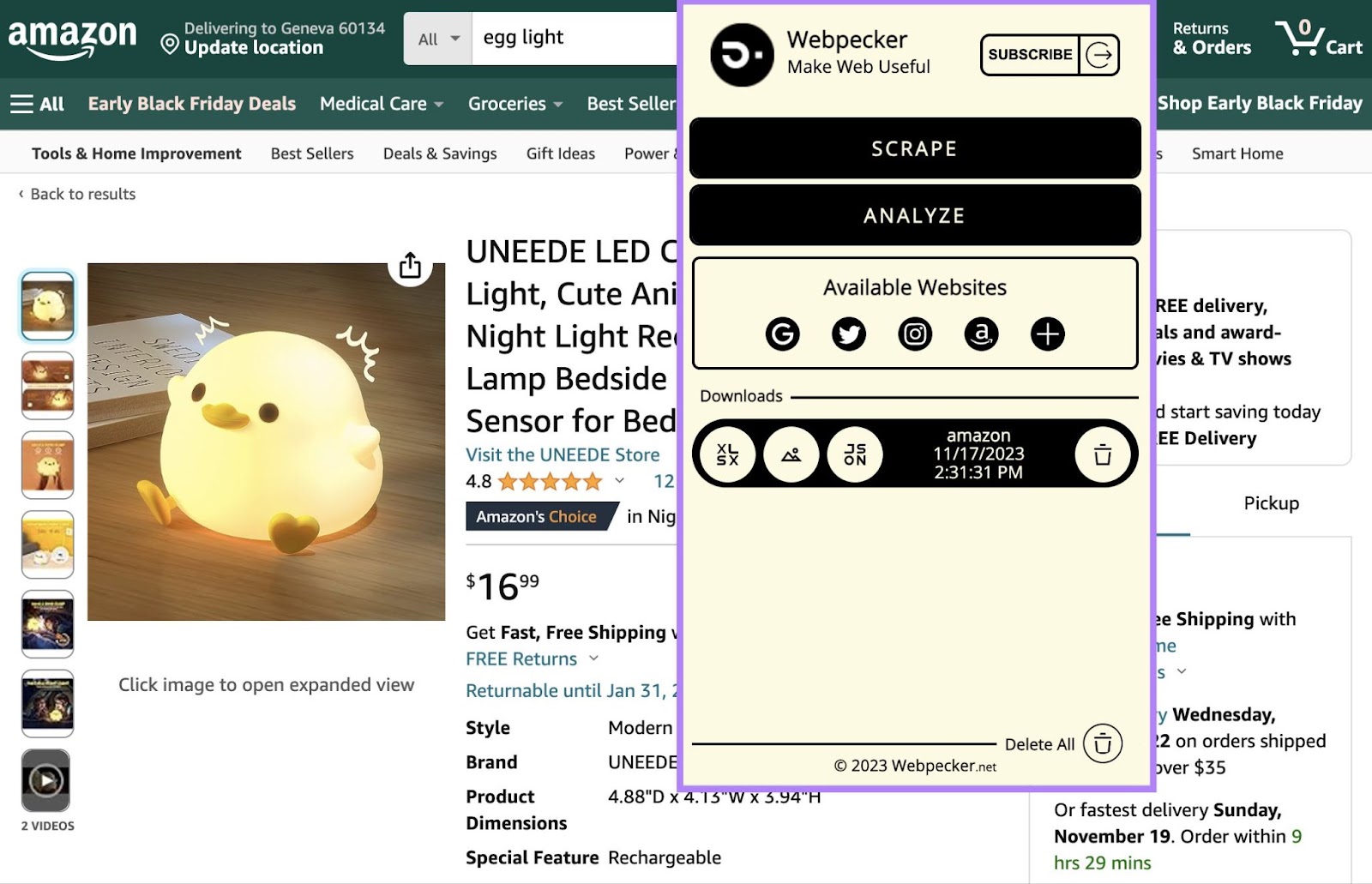

Webpecker

Webpecker is a Chrome extension that lets you scrape data from major websites like Amazon and from social networks. Then, you can download the data in XLSX, JSON, or ZIP formats.

For example, you can scrape the following from Amazon:

- Links

- Prices

- Images

- Image URLs

- Data URLs

- Titles

- Ratings

- Coupons

Or, you can scrape the following from Instagram:

- Links

- Images

- Image URLs

- Data URLs

- Alt text (the text that’s read aloud by screen readers and that displays when an image fails to load)

- Avatars

- Likes

- Comments

- Date and time

- Titles

- Mentions

- Hashtags

- Locations

Collecting this data allows you to analyze competitors and find inspiration for your own website or social media presence.

For instance, consider hashtags on Instagram.

Using them strategically can put your content in front of your target audience and increase user engagement. But with endless possibilities, choosing the right hashtags can be a challenge.

By compiling a list of hashtags your competitors use on high-performing posts, you can jumpstart your own hashtag success.
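Once you have the scraped captions, compiling that list takes only a few lines. The Python sketch below counts how many posts use each hashtag. The captions are made up, and `top_hashtags` is a hypothetical helper, not part of any scraping tool.

```python
import re
from collections import Counter

HASHTAG = re.compile(r"#(\w+)")


def top_hashtags(captions, limit=3):
    """Return the most-used hashtags as (tag, post_count) pairs."""
    counts = Counter()
    for caption in captions:
        # Use a set so a tag repeated within one post counts once.
        counts.update({tag.lower() for tag in HASHTAG.findall(caption)})
    # Sort by count, then alphabetically, so ties come out in a stable order.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return ranked[:limit]


# Hypothetical captions from competitors' high-performing posts.
CAPTIONS = [
    "New drop! #Sneakers #running",
    "Trail day #running #outdoors",
    "#running #sneakers sale this weekend",
]

print(top_hashtags(CAPTIONS, limit=2))
```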

This kind of tool can be especially useful if you’re just starting out and aren’t sure how to approach product listings or social media postings.

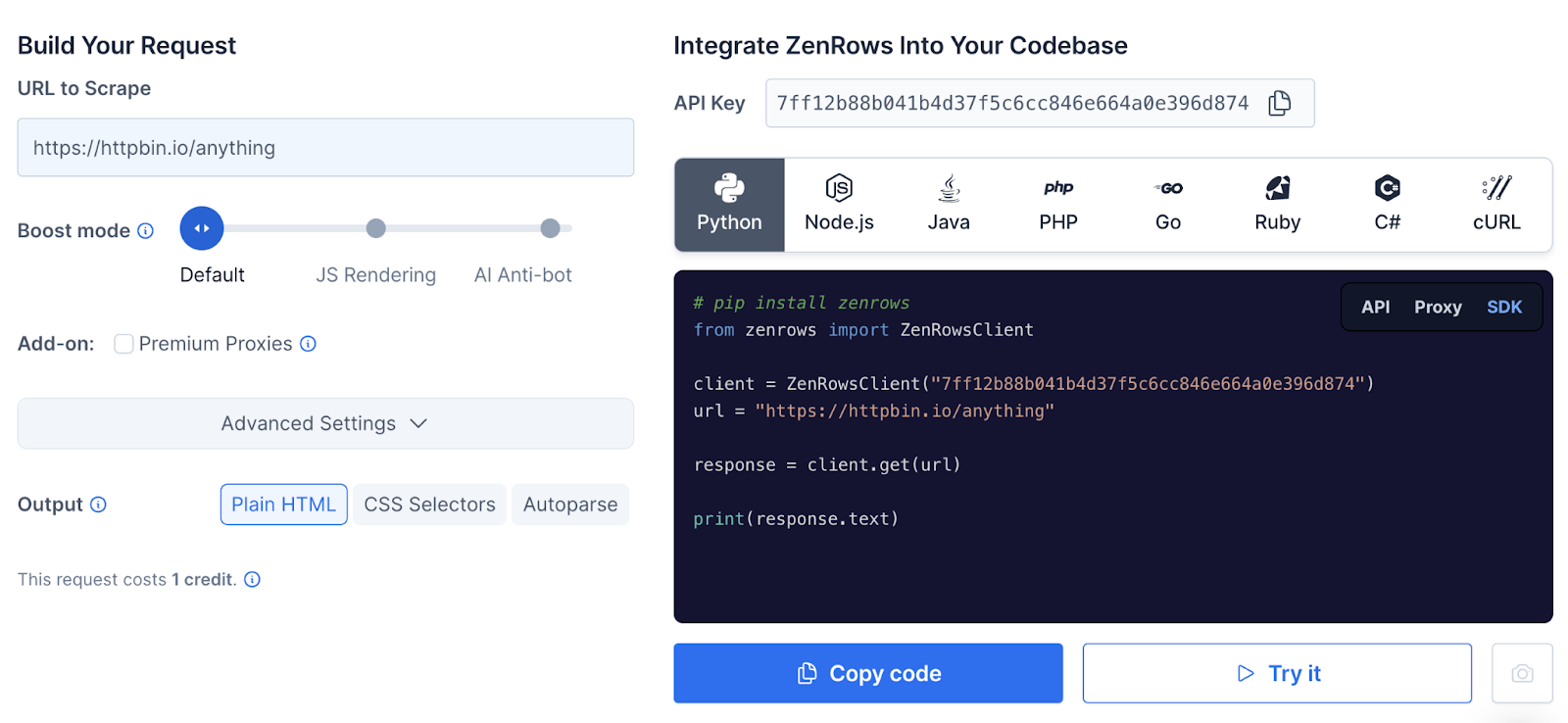

ZenRows

ZenRows is capable of scraping millions of webpages and bypassing barriers such as Completely Automated Public Turing tests to tell Computers and Humans Apart (CAPTCHAs).

ZenRows is best deployed by someone on your web development team. But once the parameters have been set, the tool says it can save thousands of development hours.

And it’s able to bypass “Access Denied” screens that typically block bots.

ZenRows’ auto-parsing tool lets you scrape pages or sites you’re interested in. And compiles the data into a JSON file for you to assess.

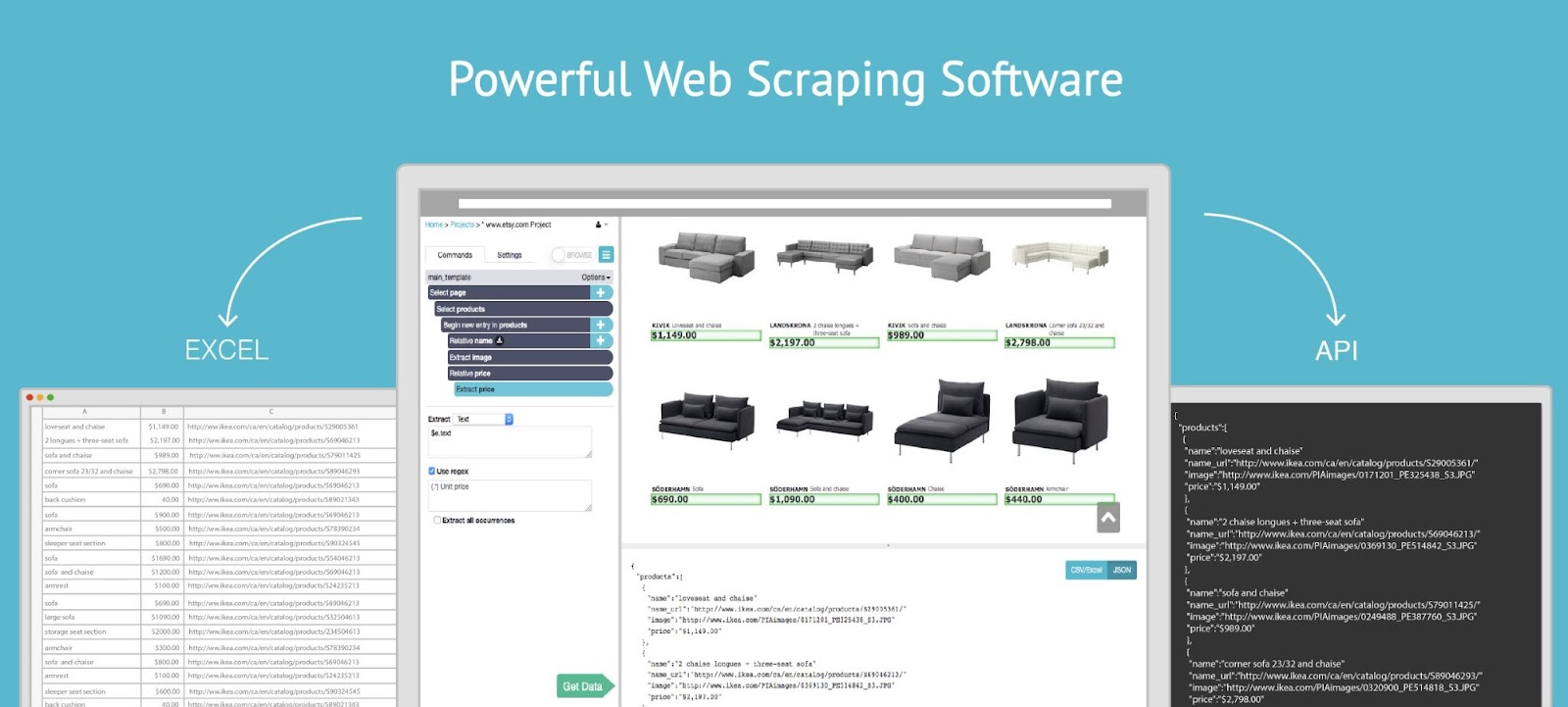

ParseHub

ParseHub is a no-code way to scrape any website for important data.

Image Source: ParseHub

It can collect difficult-to-access data from:

- Forms

- Drop-downs

- Infinite scroll pages

- Pop-ups

- JavaScript

- Asynchronous JavaScript and XML (AJAX)—a combination of programming tools used to exchange data

You can download the scraped data. Or, import it into Google Sheets or Tableau.

Using scrapers like this can help you study the competition. But don’t forget to make use of crawlers.

3 Web Crawler Tools

Backlink Analytics

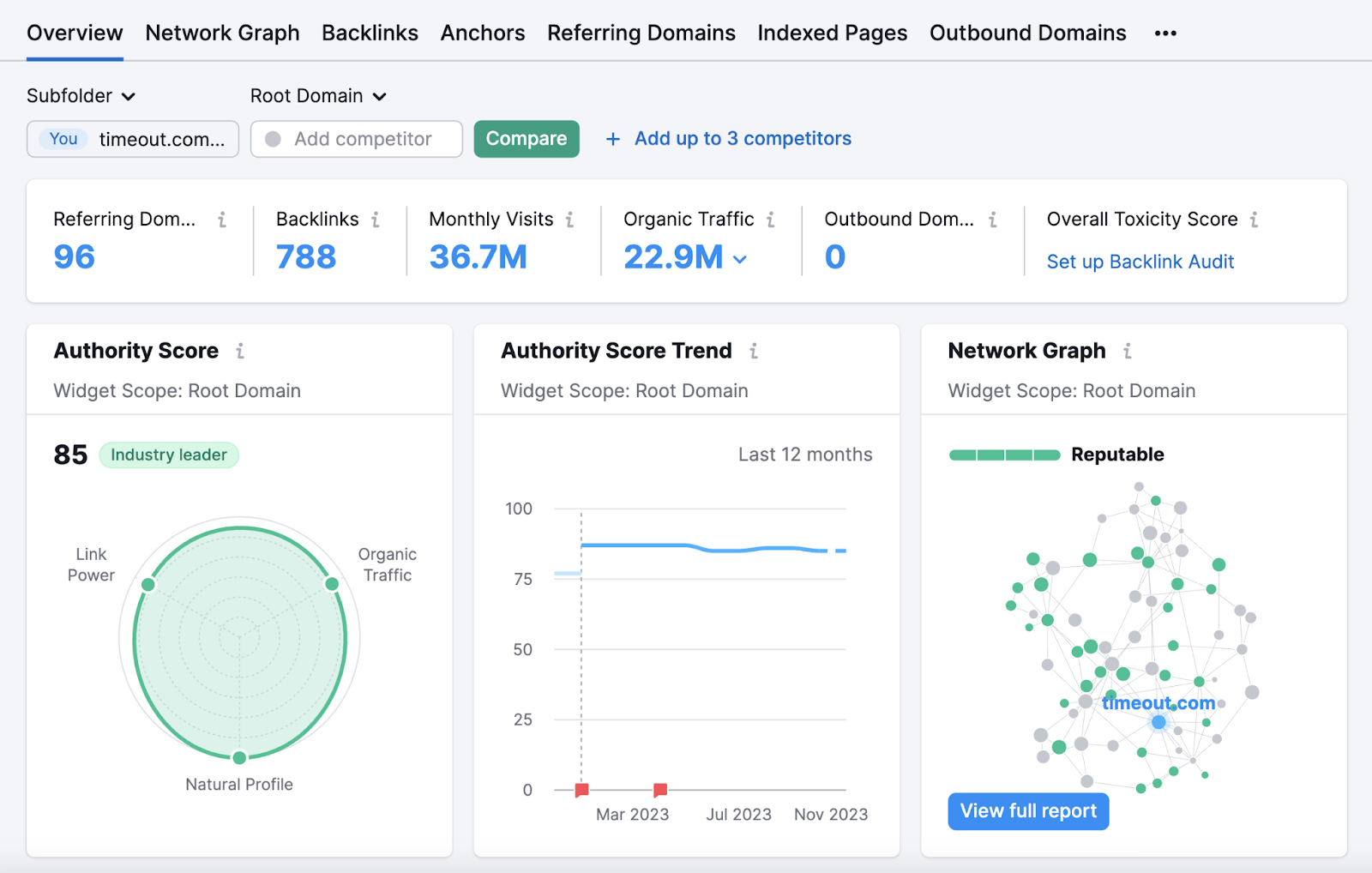

Backlink Analytics uses crawlers to study your and your competitors’ incoming links. Which allows you to analyze the backlink profiles of your competitors or other industry leaders.

Semrush’s backlinks database regularly updates with new information about links to and from crawled pages. So the information is always up to date.

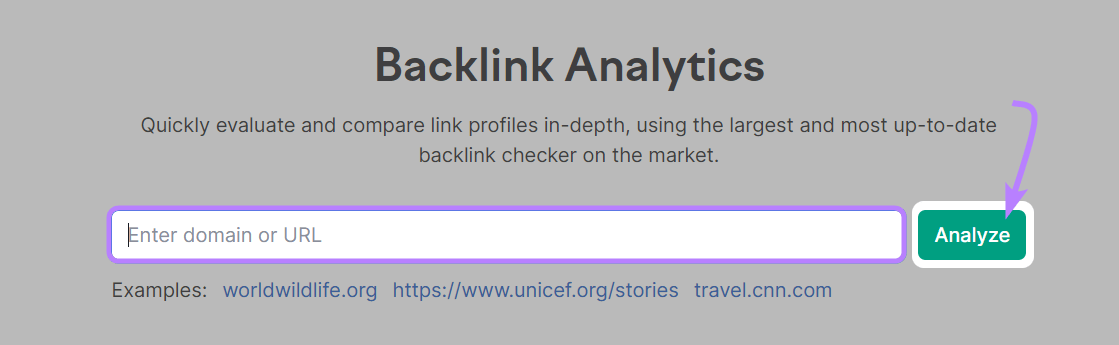

Open the tool, enter a domain name, and click “Analyze.”

You’ll then be taken to the “Overview” report. Where you can look into the domain’s total number of backlinks, total number of referring domains, estimated organic traffic, and more.

Backlink Audit

Semrush’s Backlink Audit tool lets you crawl your own site to get an in-depth look at how healthy your backlink profile is. To help you understand whether you could improve your ranking potential.

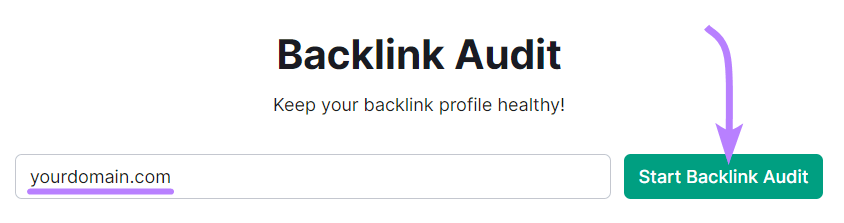

Open the tool, enter your domain, and click “Start Backlink Audit.”

Then, follow the Backlink Audit configuration guide to set up your project and start the audit.

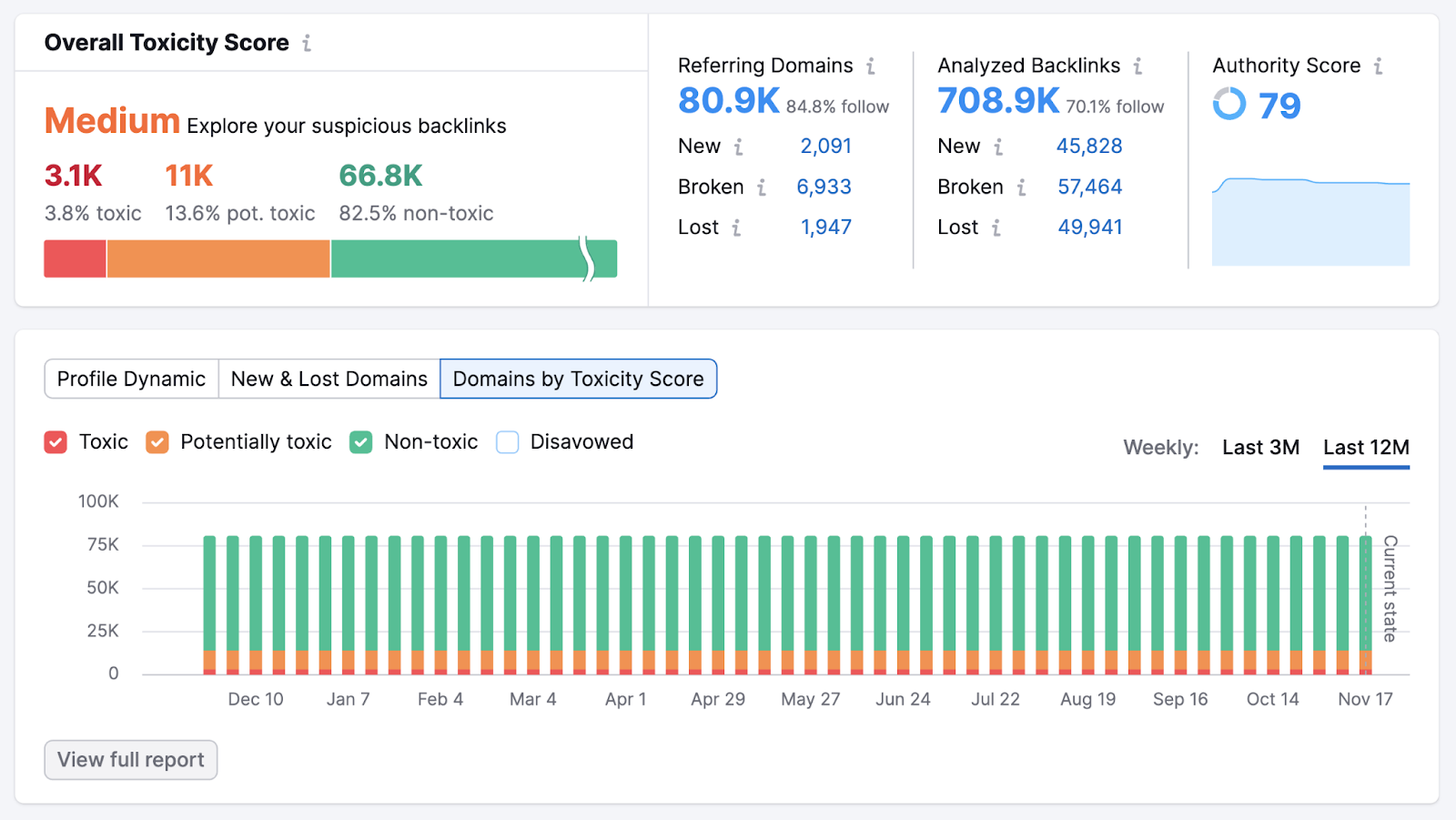

Once the tool is done gathering information, you’ll see the “Overview” report. Which gives you a holistic look at your backlink profile.

Look at the “Overall Toxicity Score” section. This metric is based on the number of low-quality domains linking to your site. A “Medium” or “High” score indicates you have room for improvement.

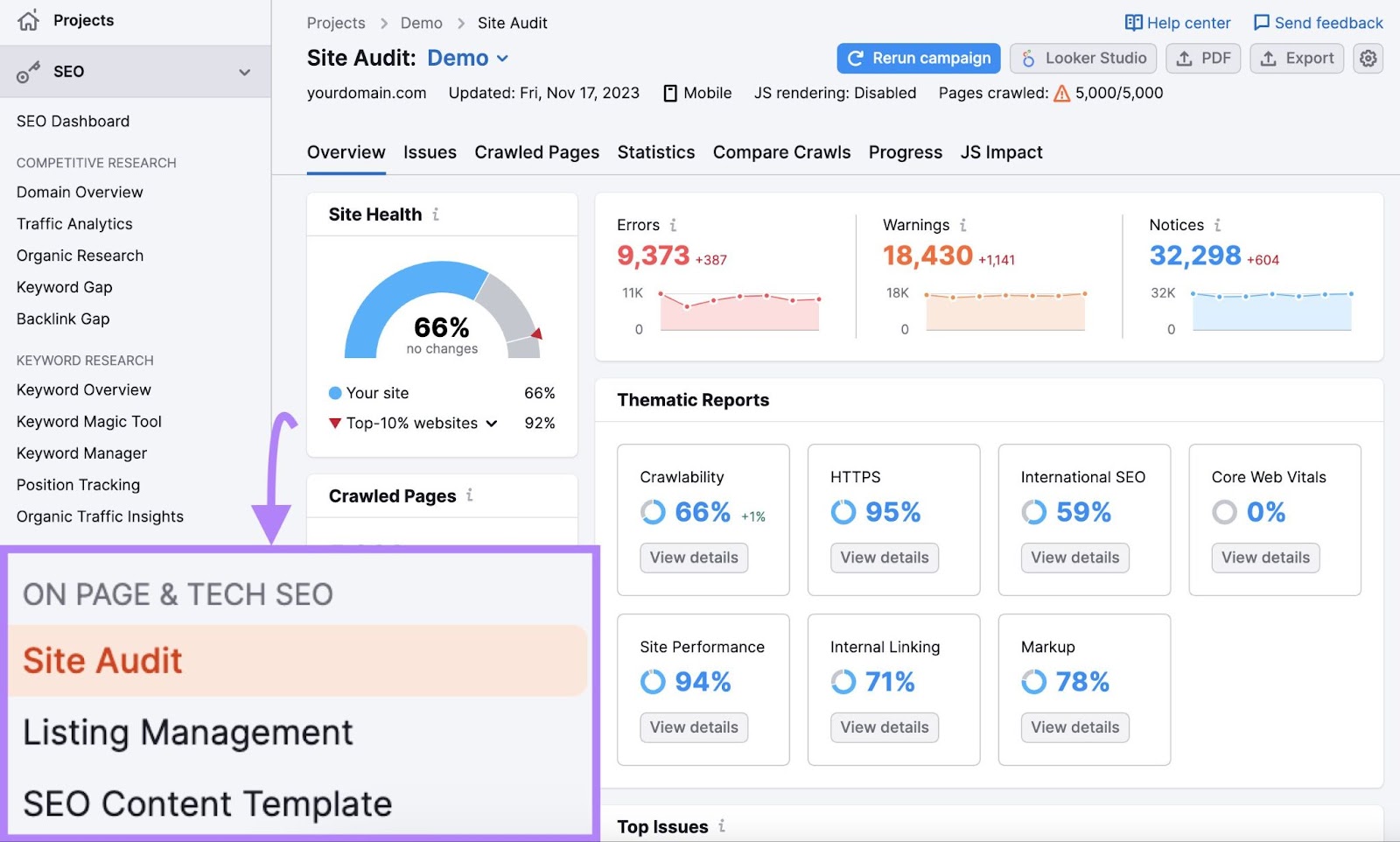

Site Audit

Site Audit uses crawlers to access your website and analyze its overall health. And gives you a report of the technical issues that could be affecting your site’s ability to rank well in Google’s search results.

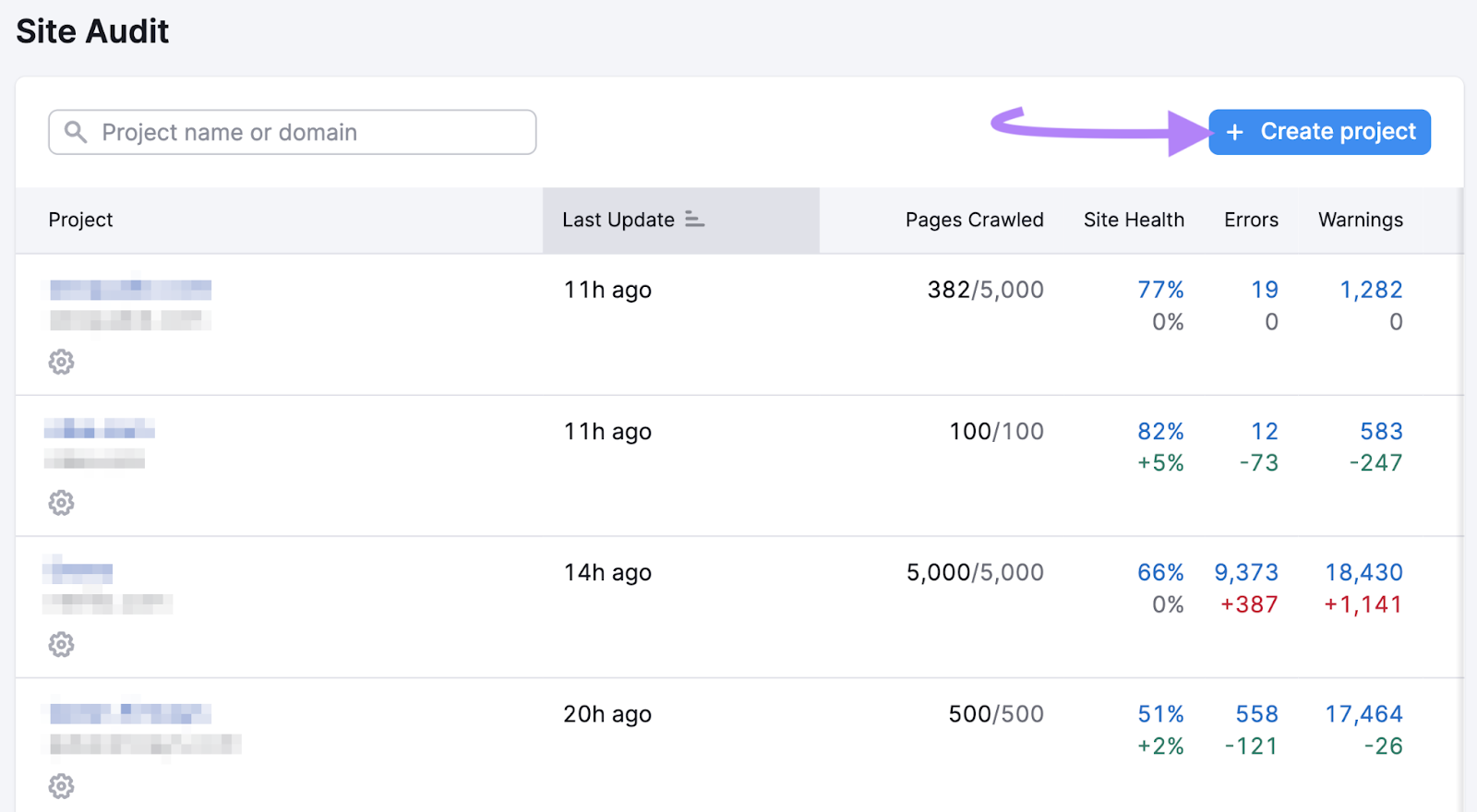

To crawl your site, open the tool and click “+ Create project.”

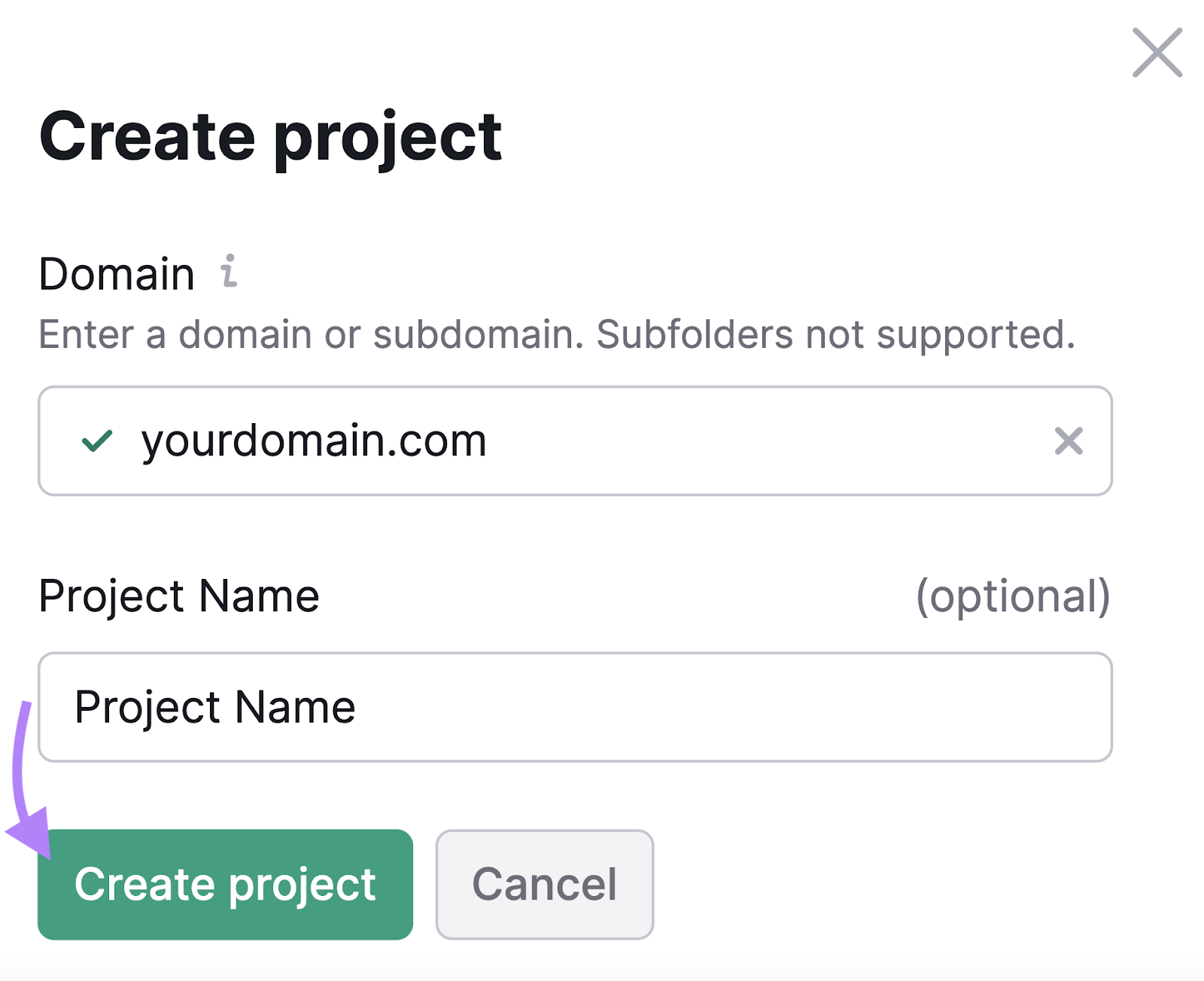

Enter your domain and an optional project name. Then, click “Create project.”

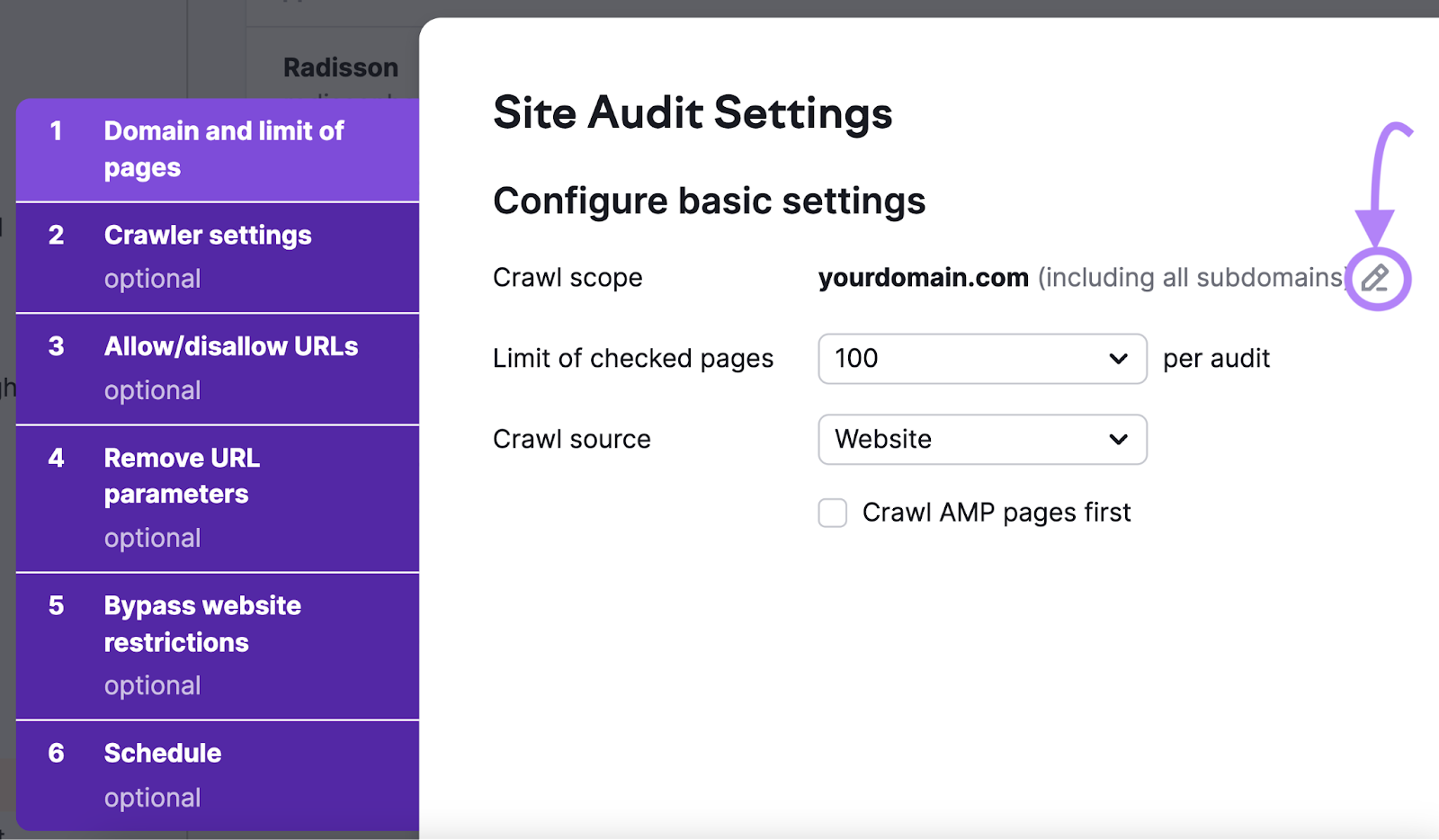

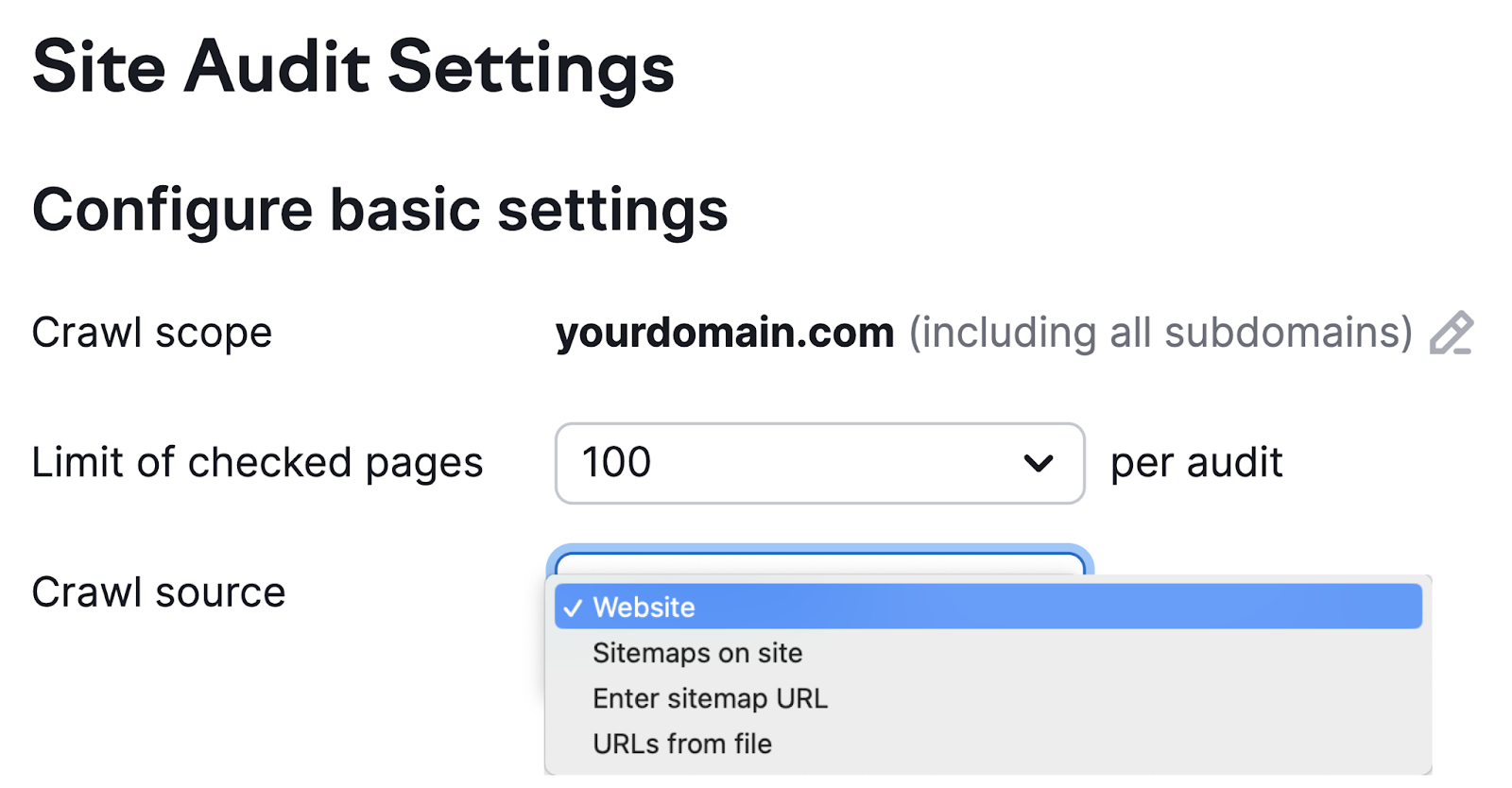

Now, it’s time to configure your basic settings.

First, define the scope of your crawl. The default setting is to crawl the domain you entered along with its subdomains and subfolders. To edit this, click the pencil icon and change your settings.

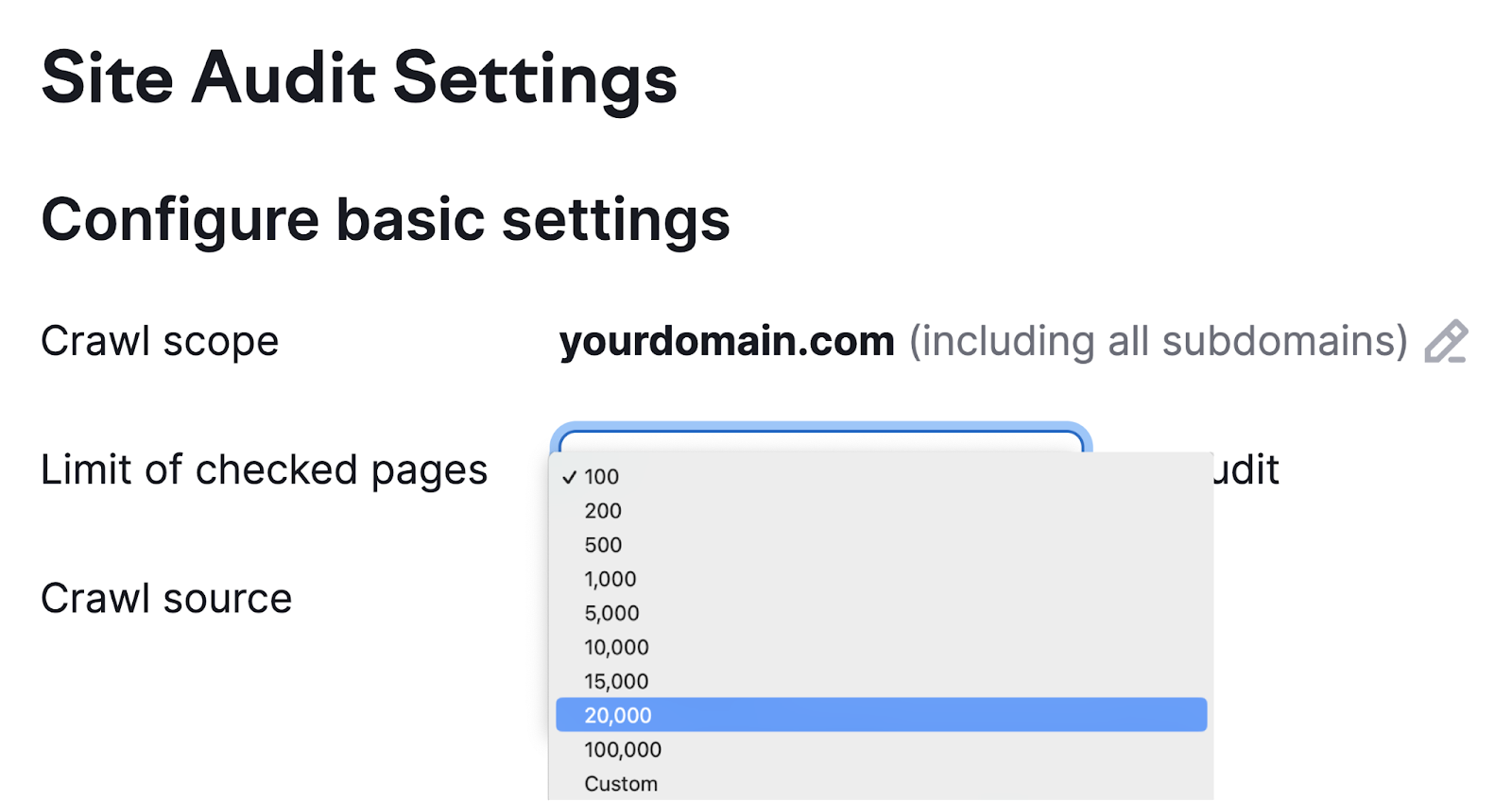

Next, set the maximum number of pages you want crawled per audit. The more pages you crawl, the more accurate your audit will be. But you also need to account for your own capacity and your subscription level.

Then, choose your crawl source.

There are four options:

- Website: This initiates an algorithm that travels around your site like a search engine crawler would. It’s a good choice if you’re interested in crawling the pages on your site that are most accessible from the homepage.

- Sitemaps on site: This initiates a crawl of the URLs found in the sitemap from your robots.txt file

- Enter sitemap URL: This source allows you to enter your own sitemap URL, making your audit more specific

- URLs from file: This allows you to get really specific about which pages you want to audit. You just need to have them saved as CSV or TXT files that you can upload directly to Semrush. This option is great for when you don’t need a general overview. For example, when you’ve made changes to specific pages and want to see how they perform.
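If you are curious what the sitemap options are reading, a sitemap is an XML file that lists page URLs inside `<loc>` elements. Here is a minimal Python sketch that extracts them, which could also help you build a TXT file for the “URLs from file” option. The sitemap content is hypothetical, and this says nothing about how Site Audit processes sitemaps internally.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content.
SITEMAP_XML = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/blog/</loc></url>
  <url><loc>https://example.com/pricing/</loc></url>
</urlset>
"""


def sitemap_urls(xml_text):
    """Return every <loc> URL listed in a sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


urls = sitemap_urls(SITEMAP_XML)
# One URL per line, ready to save as a TXT file.
print("\n".join(urls))
```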

The remaining settings on tabs two through six in the setup wizard are optional. But make sure to specify any parts of your website you don’t want to be crawled. And provide login credentials if your site is protected by a password.
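Outside of any one tool, the standard way to tell crawlers which parts of a site to skip is the robots.txt file, which can also point to your sitemap. The Python sketch below uses the standard library’s `urllib.robotparser` to read a hypothetical robots.txt. It illustrates the general mechanism and is not a description of Site Audit’s settings.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/

Sitemap: https://example.com/sitemap.xml
"""


def load_rules(robots_txt):
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)

# Sitemap URLs declared in the file (site_maps() needs Python 3.8 or later).
print(rules.site_maps())

# Whether a crawler matching "*" may fetch each URL.
print(rules.can_fetch("*", "https://example.com/admin/login"))
print(rules.can_fetch("*", "https://example.com/blog/"))
```

Well-behaved crawlers check these rules before requesting a page, so a stray `Disallow` line is worth ruling out when pages are not being crawled.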

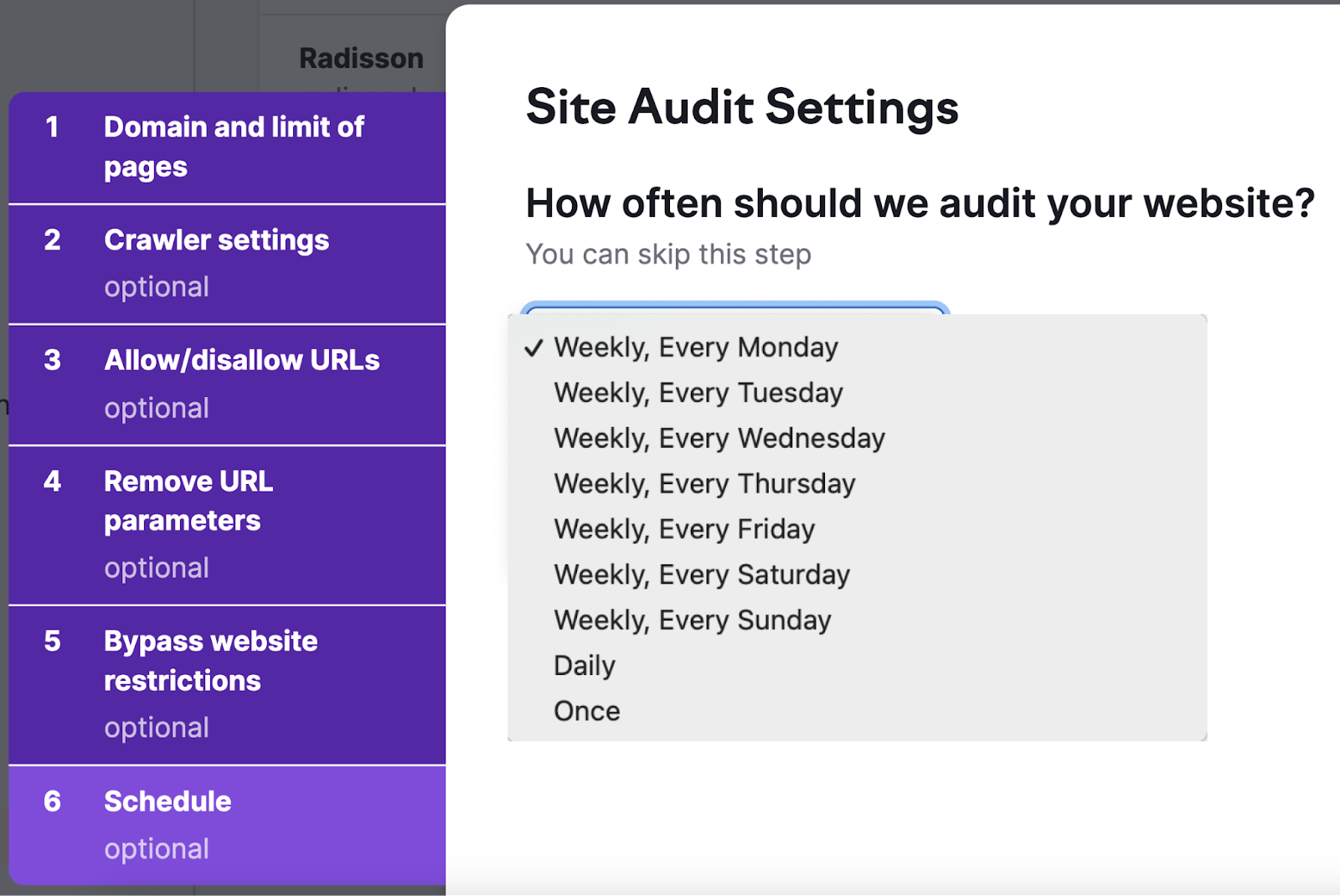

We also recommend scheduling regular site audits in the “Schedule” tab. Because auditing your site regularly helps you monitor your site’s health and gauge the impact of recent changes.

Scheduling options include weekly, daily, or just once.

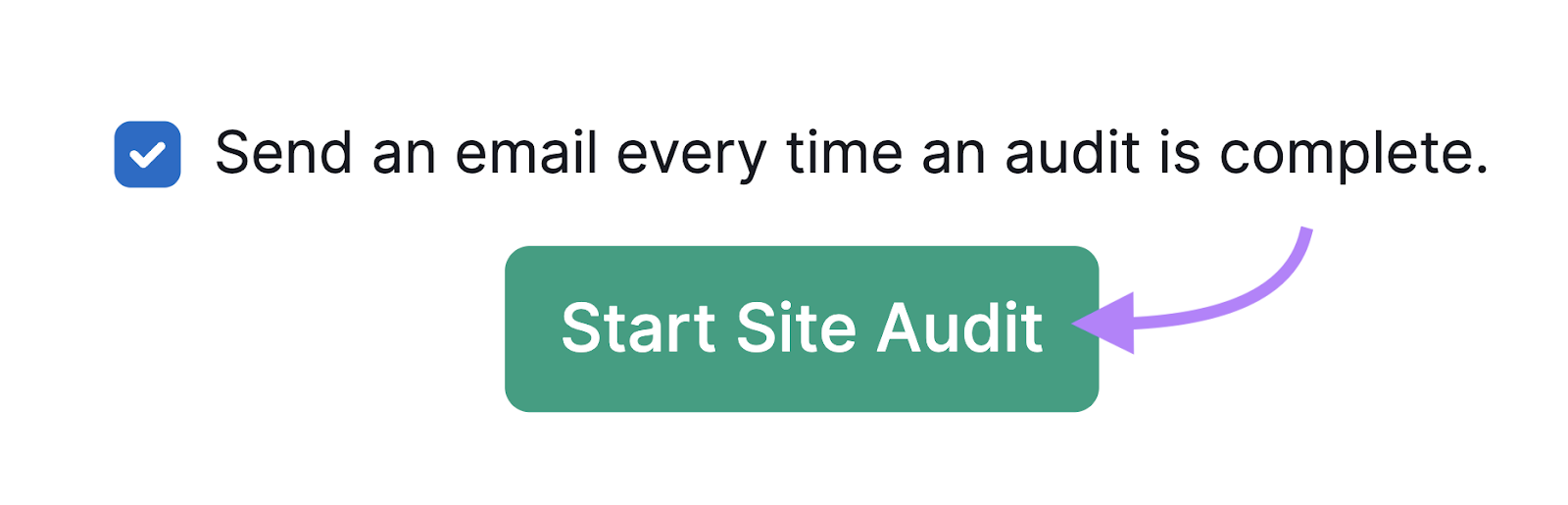

Finally, click “Start Site Audit” to get your crawl underway. And don’t forget to click the box next to “Send an email every time an audit is complete.” So you’re notified when your audit report is ready.

Now just wait for the email notification that your audit is complete. You can then start reviewing your results.

How to Evaluate Your Website Crawl Data

Once you’ve conducted a crawl, analyze the results. To find out what you can do to improve.

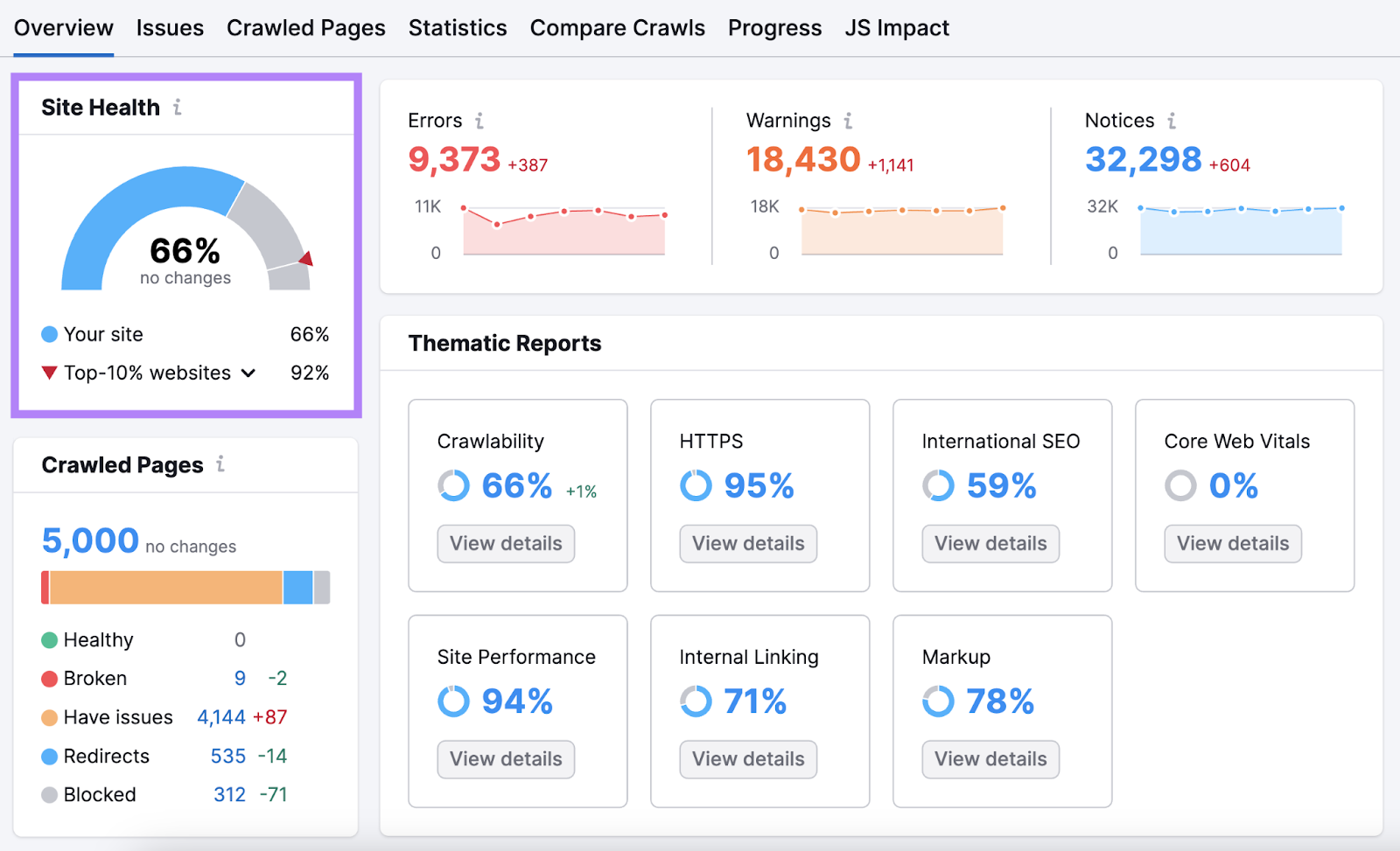

To start, go to your project in Site Audit.

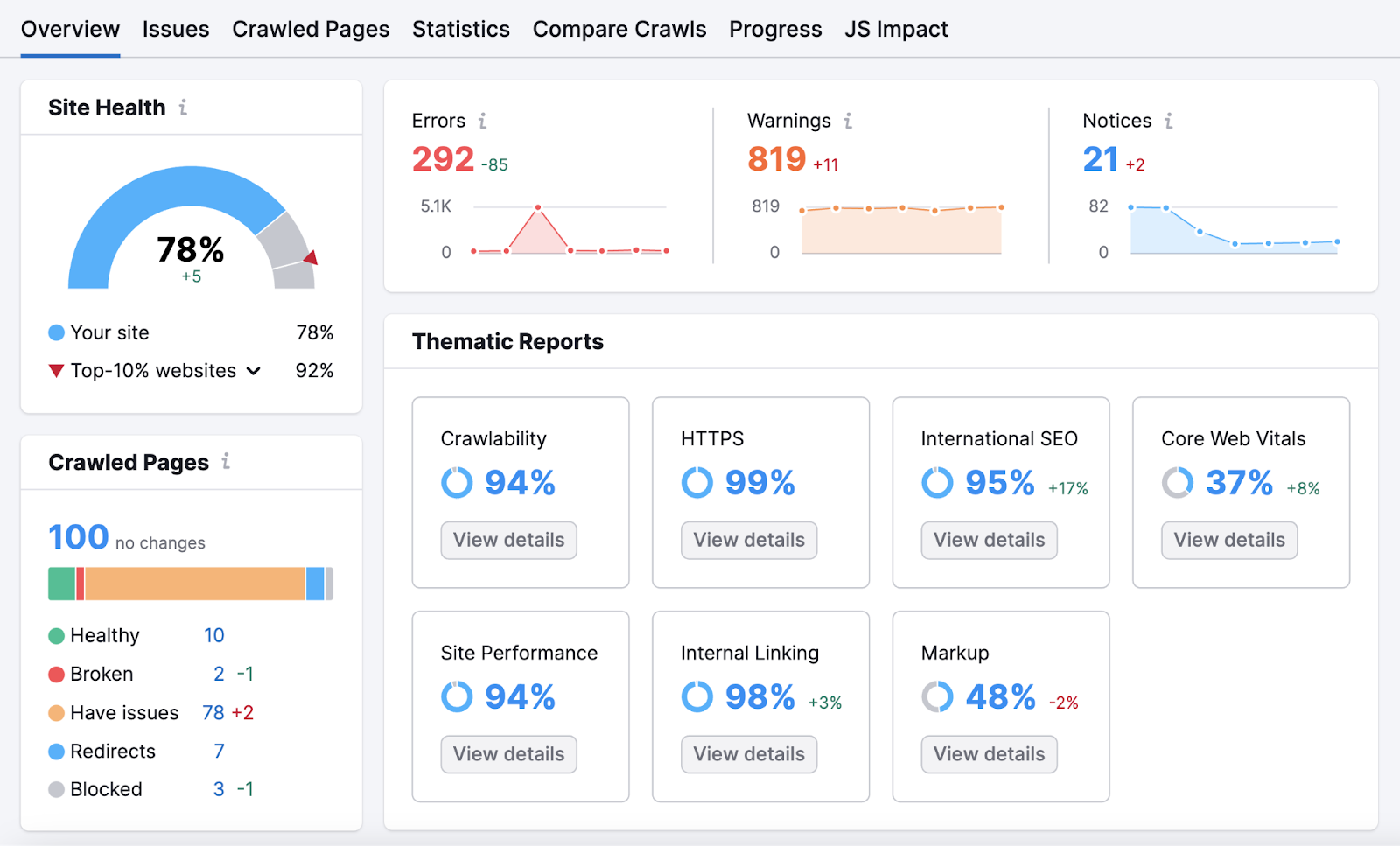

You’ll be taken to the “Overview” report. Where you’ll find your “Site Health” score. The higher your score, the more user-friendly and search-engine-optimized your site is.

Below that, you’ll see the number of crawled pages. And a distribution of pages by page status.
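If you export crawl results or run your own crawl, you can build a similar distribution yourself. The Python sketch below groups pages by HTTP status code. The group labels and sample data are this example’s own assumptions and do not match Site Audit’s status categories or export format.

```python
from collections import Counter


def status_group(status):
    """Map an HTTP status code to a coarse group label."""
    if status is None:
        return "unreachable"
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if 400 <= status < 500:
        return "client error"
    if 500 <= status < 600:
        return "server error"
    return "other"


def status_distribution(crawl_results):
    """Count pages per status group from (url, status) pairs."""
    return Counter(status_group(status) for _, status in crawl_results)


# Hypothetical crawl output.
CRAWL_RESULTS = [
    ("https://example.com/", 200),
    ("https://example.com/blog/", 200),
    ("https://example.com/sale", 302),
    ("https://example.com/old-page", 404),
]

distribution = status_distribution(CRAWL_RESULTS)
for group, count in distribution.most_common():
    print(f"{group}: {count}")
```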

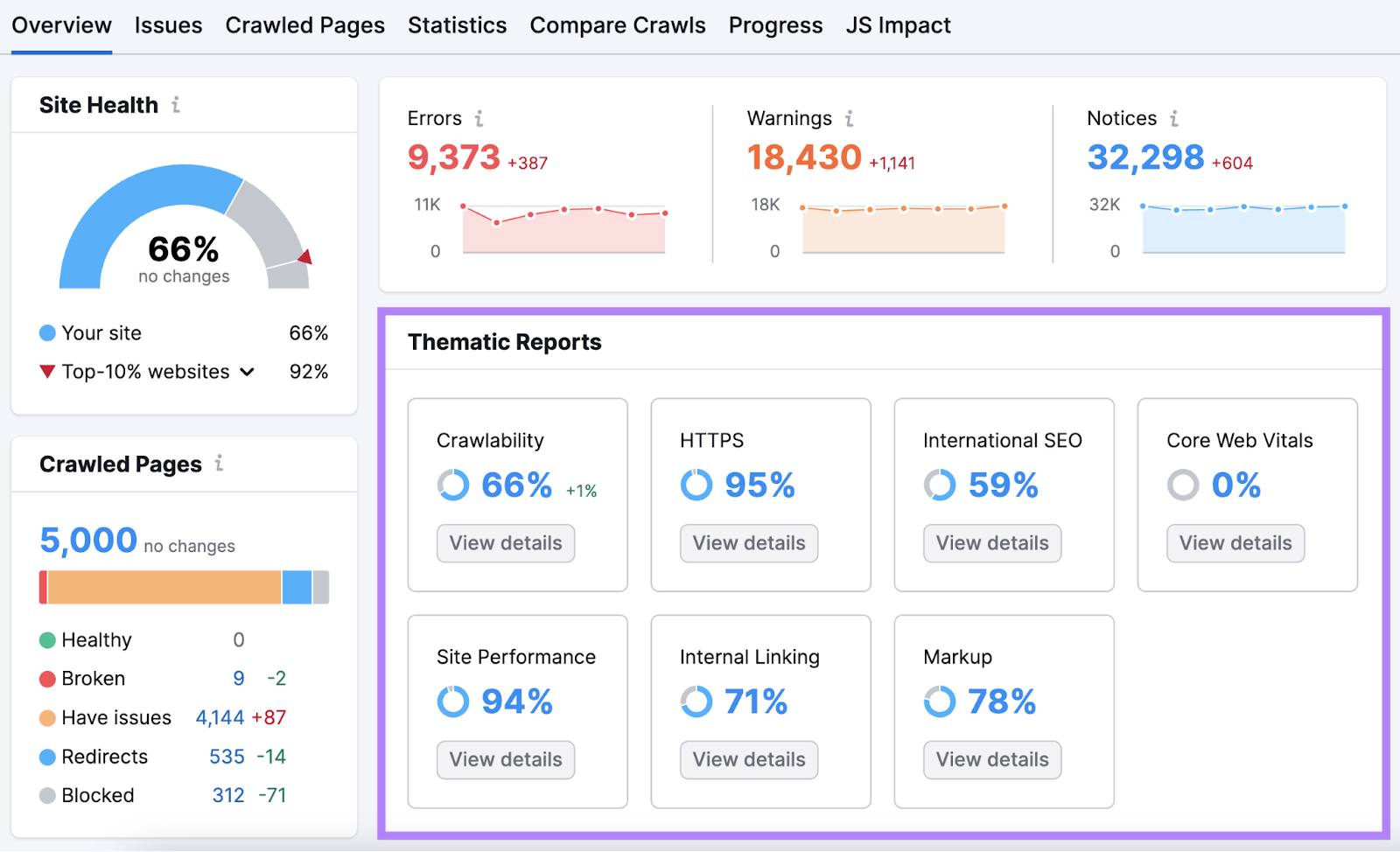

Scroll down to “Thematic Reports.” This section details room for improvement in areas like Core Web Vitals, internal linking, and crawlability.

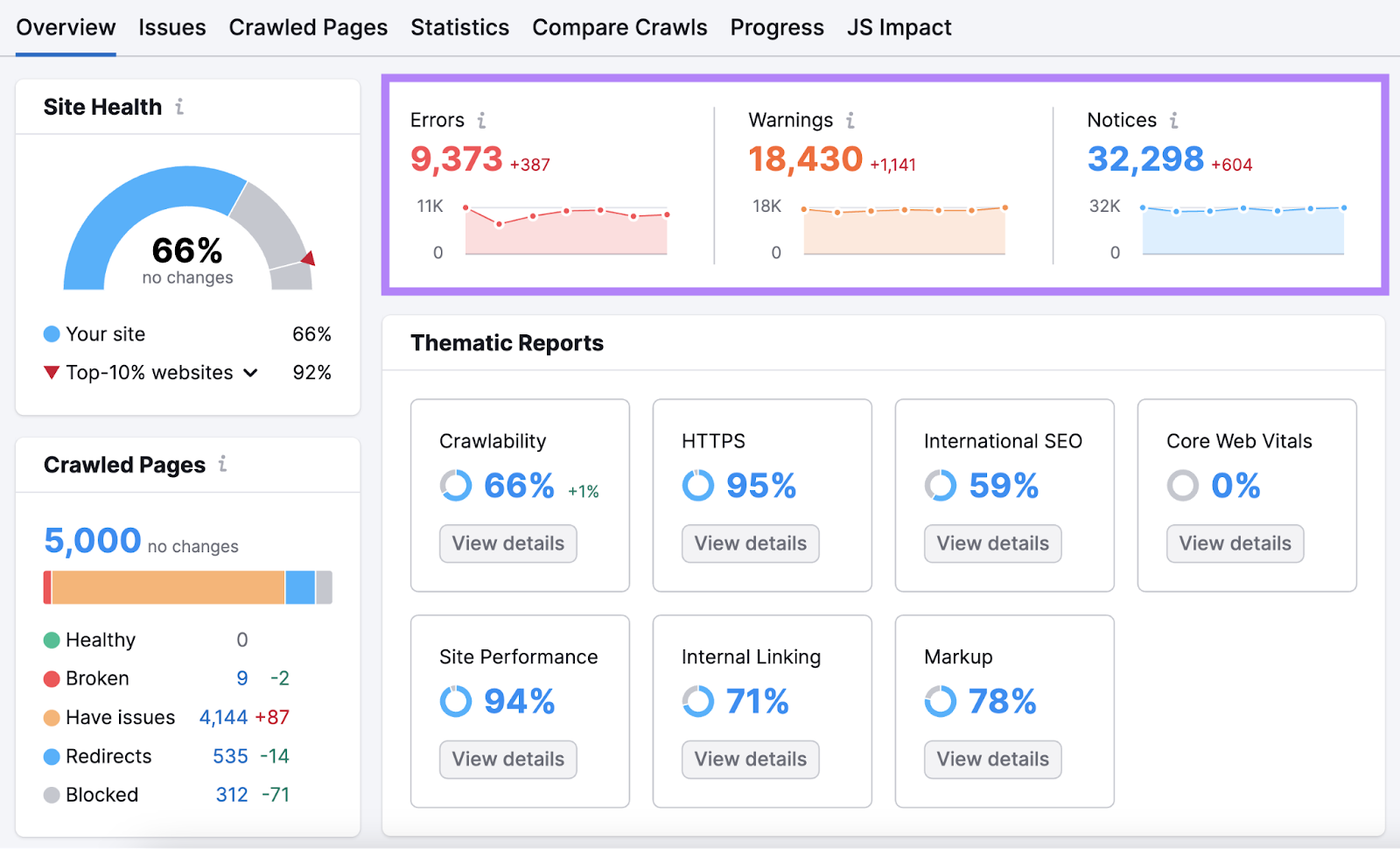

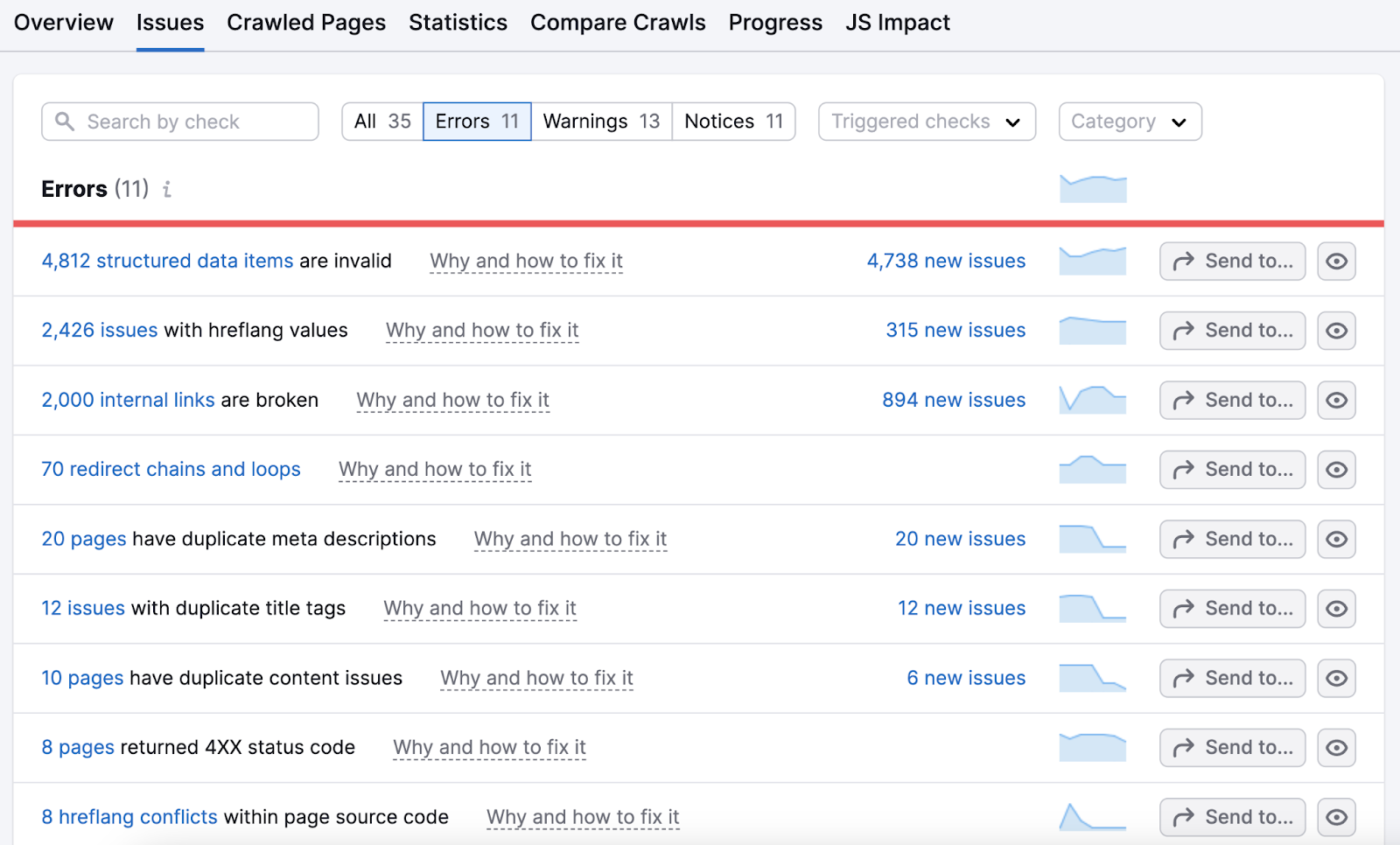

To the right, you’ll find “Errors,” “Warnings,” and “Notices.” Which are issues categorized by severity.

Click the number highlighted in “Errors” to get detailed breakdowns of the biggest problems. And suggestions for fixing them.

Work your way through this list. And then move on to address warnings and notices.

Keep Crawling to Stay Ahead of the Competition

Search engines like Google never stop crawling webpages.

Including yours. And your competitors’.

Keep an edge over the competition by regularly crawling your website to stop problems in their tracks.

You can schedule automatic recrawls and reports with Site Audit. Or, manually run future web crawls to keep your website in top shape—from users’ and Google’s perspective.