Ever wonder why some of your pages don't appear in Google's search results?

Crawlability problems might be the reason.

In this guide, we'll explain what crawlability problems are, how they affect SEO, and how to fix them.

Let's begin.

What Are Crawlability Problems?

Crawlability problems prevent search engines from accessing your website's pages.

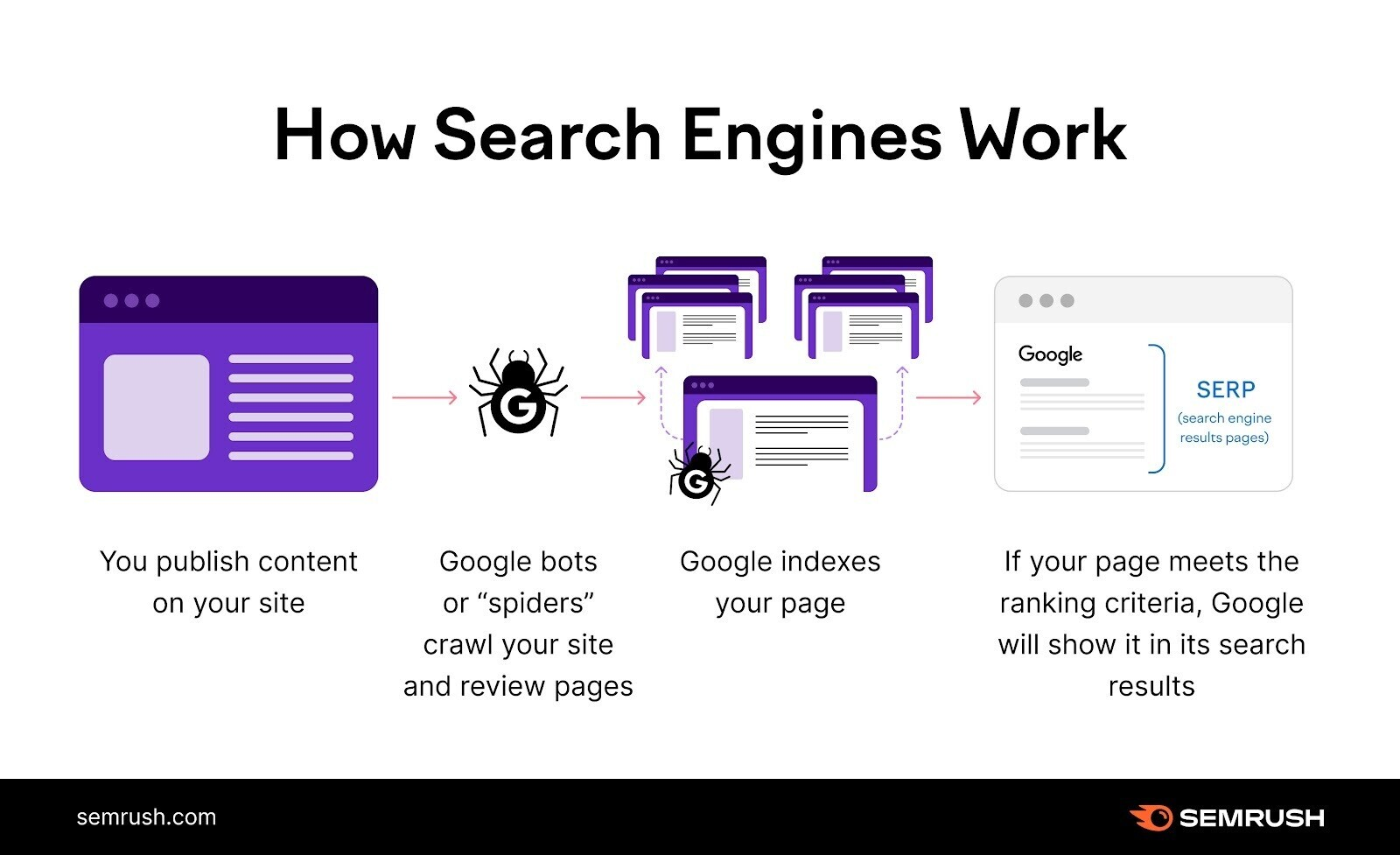

Search engines like Google use automated bots to read and analyze your pages. This process is called crawling.

If crawlability problems exist, these bots may encounter obstacles that hinder their ability to access your pages properly.

Common crawlability problems include:

- Nofollow links (which tell Google not to follow the link or pass ranking strength to that page)

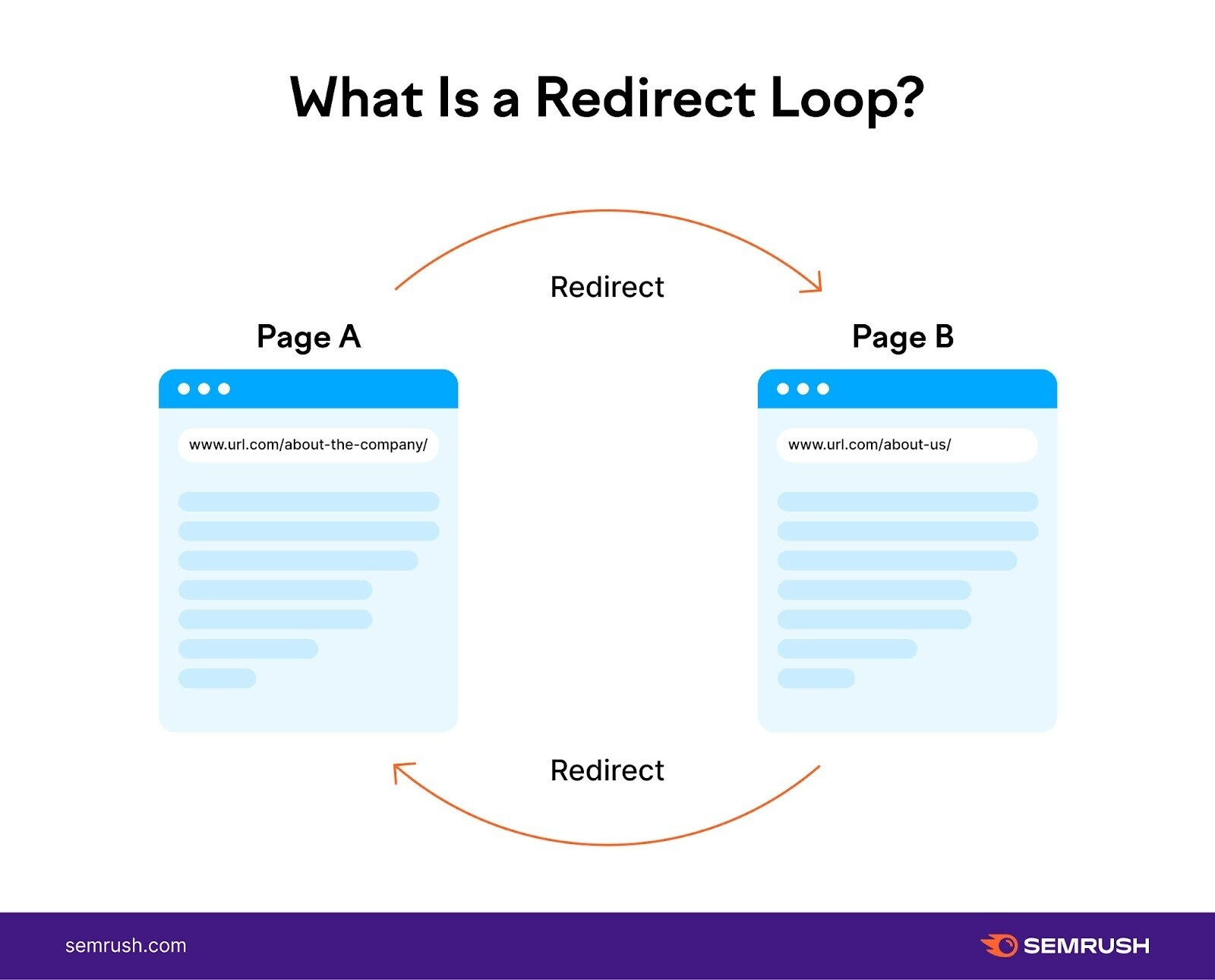

- Redirect loops (when two pages redirect to each other, creating an infinite loop)

- Bad site structure

- Slow site speed

How Do Crawlability Issues Affect SEO?

Crawlability problems can significantly harm your SEO performance by making some or all of your pages invisible to search engines.

If search engines can’t find your pages, they can’t index them—that is, they can’t save them to a database to later display in relevant search results.

This leads to a potential loss of organic traffic and conversions.

Your pages must be both crawlable and indexable to rank in search engines.

15 Crawlability Problems & How to Fix Them

1. Pages Blocked in Robots.txt

Search engines first check your robots.txt file to determine which pages they should or shouldn’t crawl.

If your robots.txt file looks like this, your entire website is blocked from crawling:

User-agent: *

Disallow: /

To fix this problem, replace the “Disallow” directive with “Allow,” enabling search engines to access your entire website:

User-agent: *

Allow: /

In some cases, only specific pages or sections are blocked. For example:

User-agent: *

Disallow: /products/

Here, all pages in the “/products/” subfolder are blocked from crawling.

To solve this problem, remove the specified subfolder or page from the “Disallow” directive.

An empty “Disallow” directive tells search engines there are no pages to disallow:

User-agent: *

Disallow:

Alternatively, use the “Allow” directive instead of “Disallow” to instruct search engines to crawl your entire site, as shown earlier.
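To see how these rules play out, here is a minimal sketch that tests the robots.txt examples from this section with Python's standard `urllib.robotparser` module. The helper name `is_crawlable` and the `www.example.com` URLs are assumptions made for illustration. Python's parser also does not replicate every detail of how Google reads robots.txt, so treat the result as a rough check.

```python
from urllib.robotparser import RobotFileParser


def is_crawlable(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The three robots.txt variants discussed in this section.
BLOCK_ALL = "User-agent: *\nDisallow: /"
BLOCK_PRODUCTS = "User-agent: *\nDisallow: /products/"
EMPTY_DISALLOW = "User-agent: *\nDisallow:"

# Hypothetical URLs used only for the demonstration.
blog_url = "https://www.example.com/blog/"
product_url = "https://www.example.com/products/shoes"

print(is_crawlable(BLOCK_ALL, blog_url))          # whole site blocked
print(is_crawlable(BLOCK_PRODUCTS, product_url))  # only /products/ blocked
print(is_crawlable(BLOCK_PRODUCTS, blog_url))     # rest of the site open
print(is_crawlable(EMPTY_DISALLOW, product_url))  # nothing blocked
```

Running a check like this before you deploy a robots.txt change can catch an accidental site-wide block.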

2. Nofollow Links

The nofollow meta robots tag tells search engines not to follow the links on a webpage.

The tag looks like this:

<meta name="robots" content="nofollow">

If this tag is present on your pages, search engines might not crawl the pages you link to, creating crawlability problems on your site.

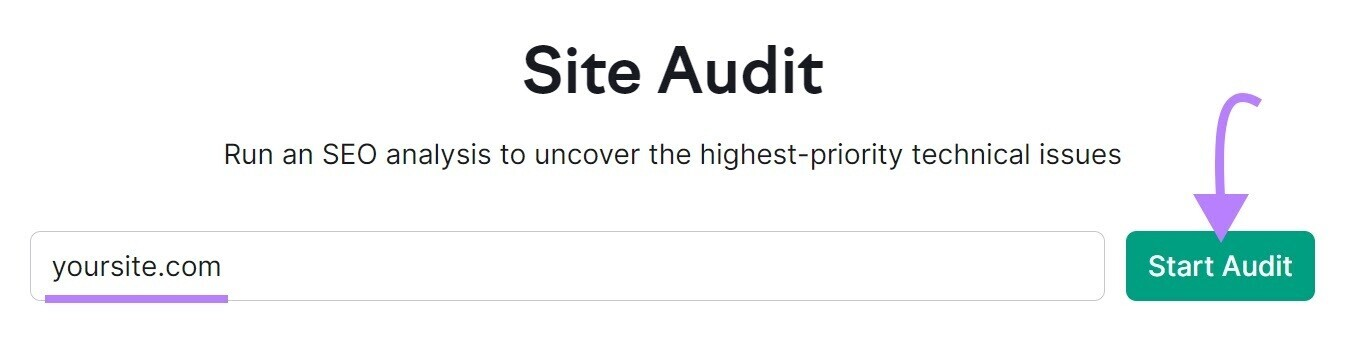

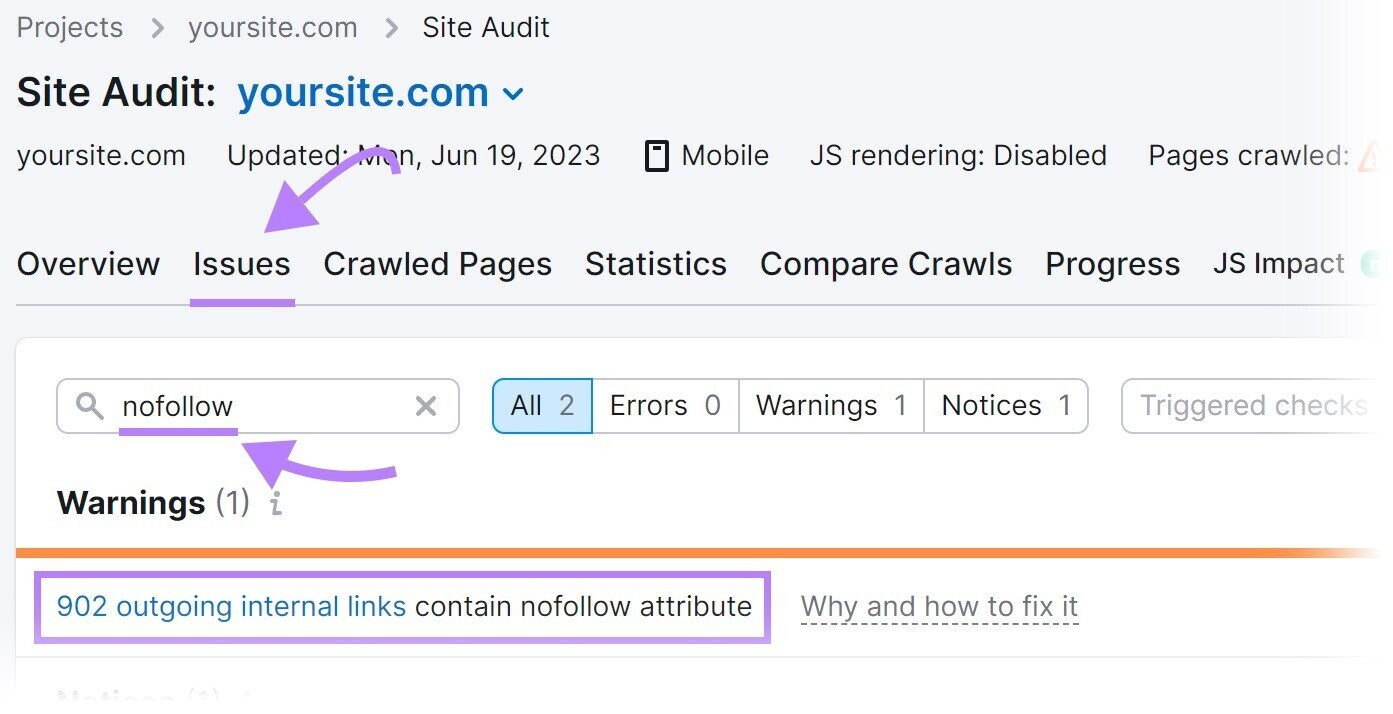

To check for nofollow links using Semrush's Site Audit tool:

1. Open the tool, enter your website, and click “Start Audit.”

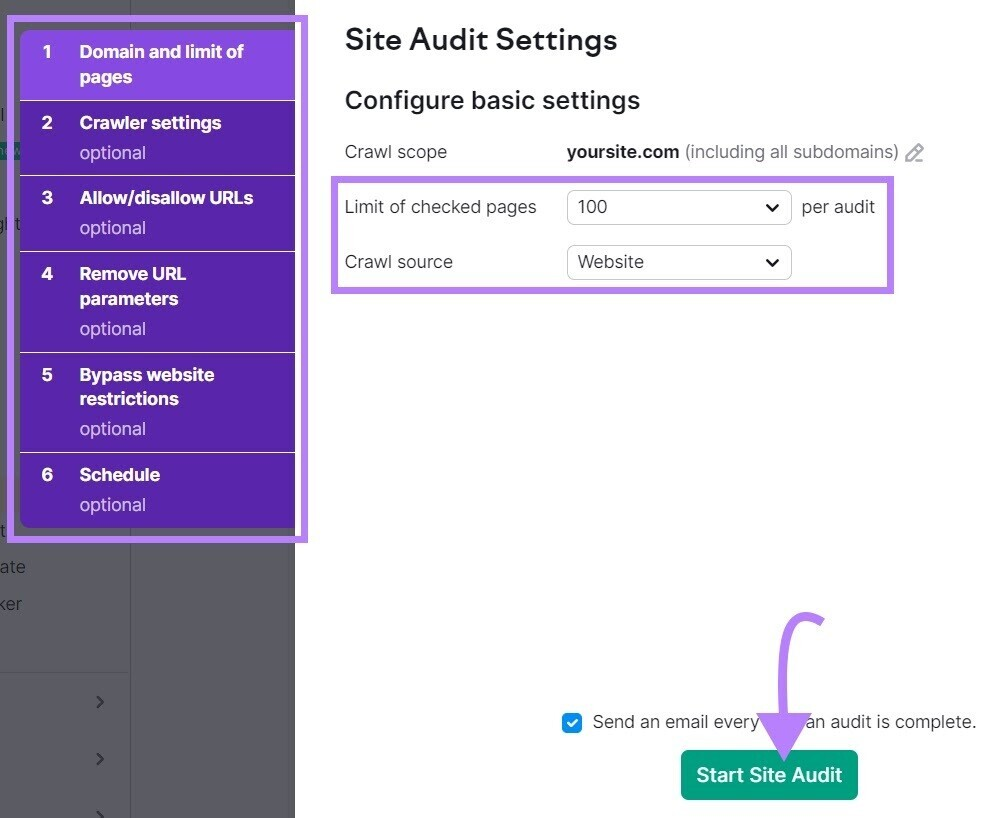

2. The Site Audit Settings window will appear. Configure the basic settings and click “Start Site Audit.”

3. After the audit completes, go to the “Issues” tab and search for "nofollow."

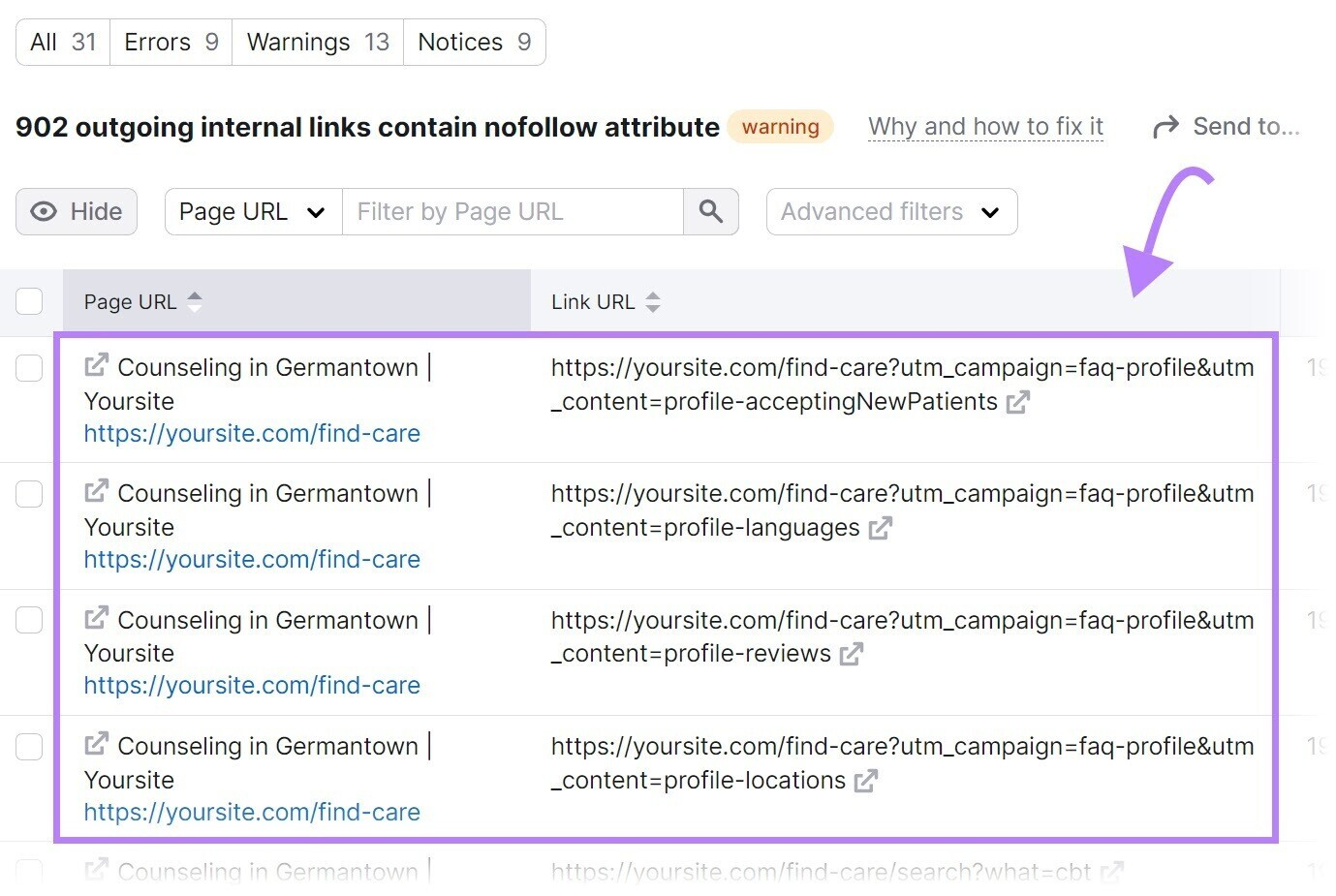

4. If nofollow links are detected, click “# outgoing internal links contain nofollow attribute” to see a list of pages with the nofollow tag.

5. Review the pages and remove the nofollow tags if they shouldn’t be there.
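If you want to spot-check a single page without running a full audit, a short script can list the links that carry a nofollow attribute. The sketch below uses Python's built-in `html.parser`. The class name `NofollowLinkFinder`, the helper `find_nofollow_links`, and the sample HTML are assumptions for illustration, not part of any audit tool.

```python
from html.parser import HTMLParser


class NofollowLinkFinder(HTMLParser):
    """Collects the href of every <a> tag whose rel attribute includes nofollow."""

    def __init__(self):
        super().__init__()
        self.nofollow_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        # rel may hold several values, e.g. rel="nofollow noopener".
        rel = (attributes.get("rel") or "").lower().replace(",", " ")
        if "nofollow" in rel.split() and attributes.get("href"):
            self.nofollow_links.append(attributes["href"])


def find_nofollow_links(html: str) -> list:
    """Return the hrefs of nofollow links in document order."""
    finder = NofollowLinkFinder()
    finder.feed(html)
    return finder.nofollow_links


# Hypothetical page fragment.
sample_html = """
<a href="/shoes">Shoes</a>
<a href="/sale" rel="nofollow">Sale</a>
<a href="/login" rel="nofollow noopener">Log in</a>
"""
print(find_nofollow_links(sample_html))
```

This only inspects link-level attributes. A page-level nofollow meta tag needs a separate check.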

3. Bad Site Architecture

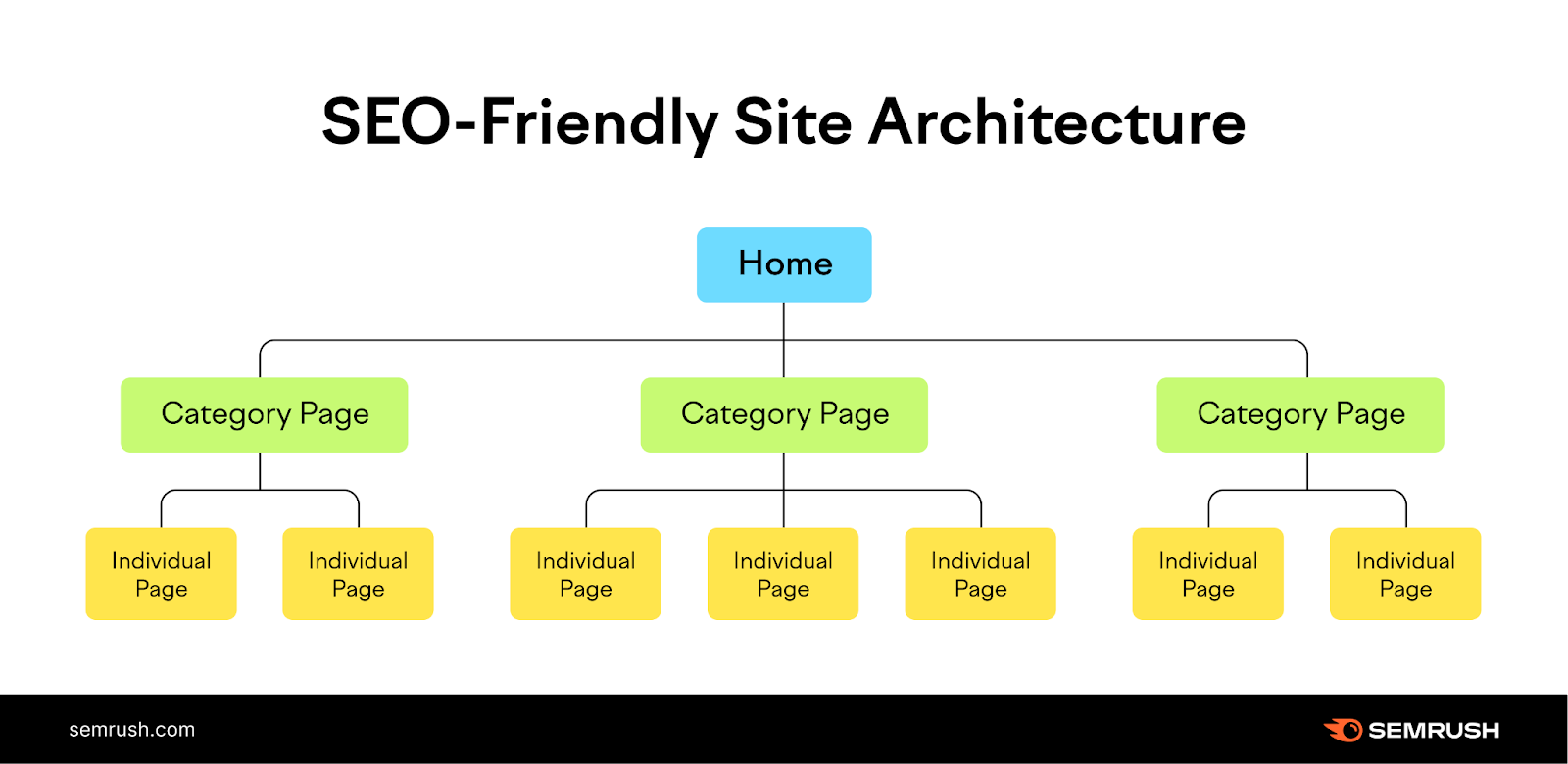

Site architecture refers to how your pages are organized across your website.

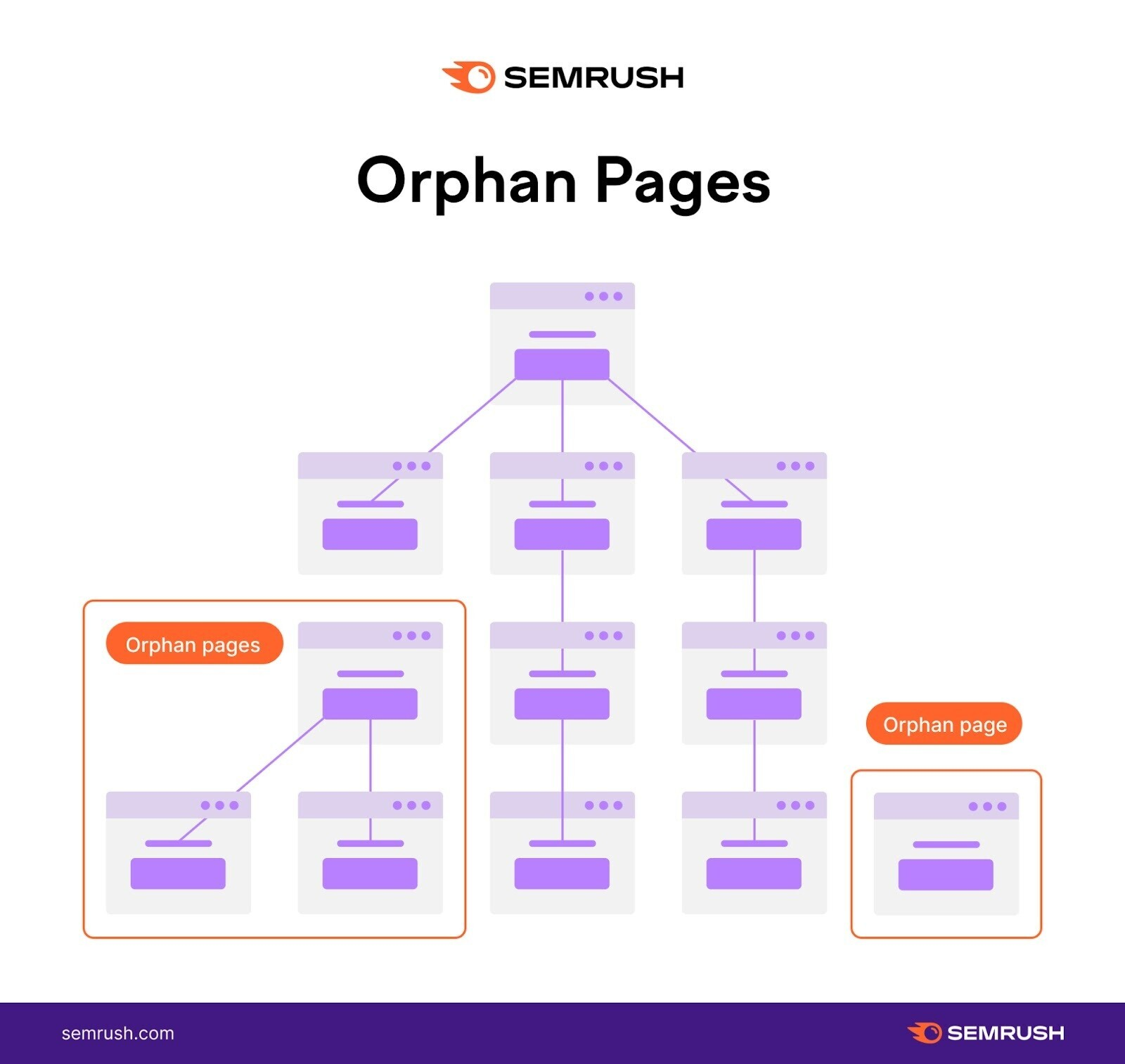

A good site architecture ensures every page is just a few clicks away from the homepage and that there are no orphan pages (pages with no internal links pointing to them).

This helps search engines easily access all pages.

However, a bad site architecture can create crawlability issues.

Consider the example site structure shown below. It has orphan pages.

Because there’s no linked path from the homepage to these pages, search engines may not find them when crawling the site.

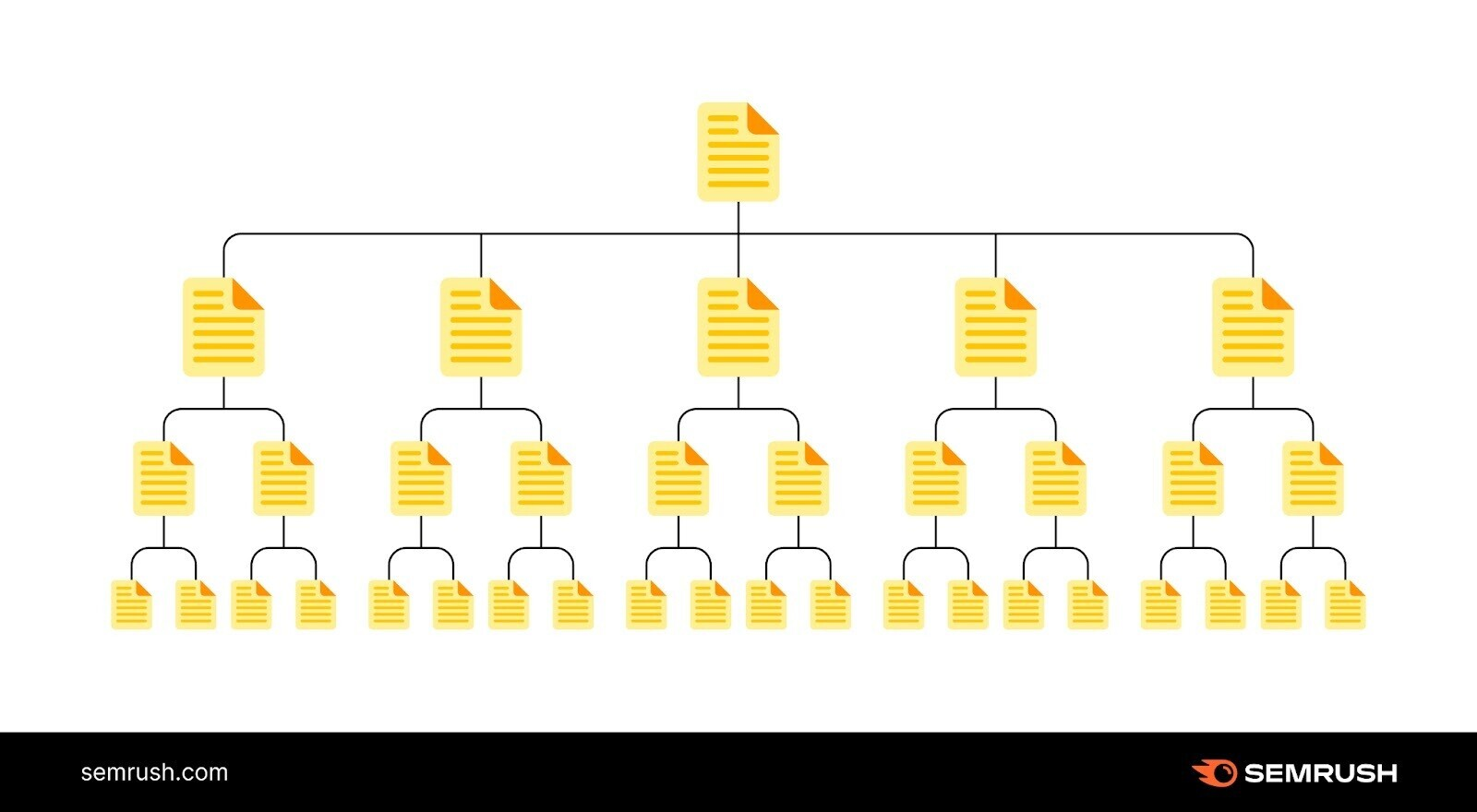

The solution is straightforward: Create a site structure that logically organizes your pages in a hierarchy through internal links.

Like this:

In the example above, the homepage links to category pages, which then link to individual pages on your site.

This provides a clear path for crawlers to find all your important pages.
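One way to reason about "a few clicks away from the homepage" is to measure click depth with a breadth-first search over your internal links. The following is a minimal sketch. The `links` dictionary, which maps each page to the pages it links to, is a made-up example site, and `click_depths` is a hypothetical helper name.

```python
from collections import deque


def click_depths(links: dict, homepage: str) -> dict:
    """Return the minimum number of clicks from homepage to each reachable page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical site: homepage -> category pages -> individual pages.
links = {
    "/": ["/shoes/", "/bags/"],
    "/shoes/": ["/shoes/sneakers"],
    "/bags/": [],
    "/old-promo": ["/"],  # links out, but nothing links to it
}

depths = click_depths(links, "/")
unreachable = sorted(set(links) - set(depths))
print(depths)
print(unreachable)
```

Pages missing from the result have no linked path from the homepage, which is the situation this section warns about.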

4. Lack of Internal Links

Pages without internal links can create crawlability problems.

Search engines have trouble discovering pages that lack internal links.

To avoid these issues, identify your orphan pages and add internal links to them.

How can you find orphan pages?

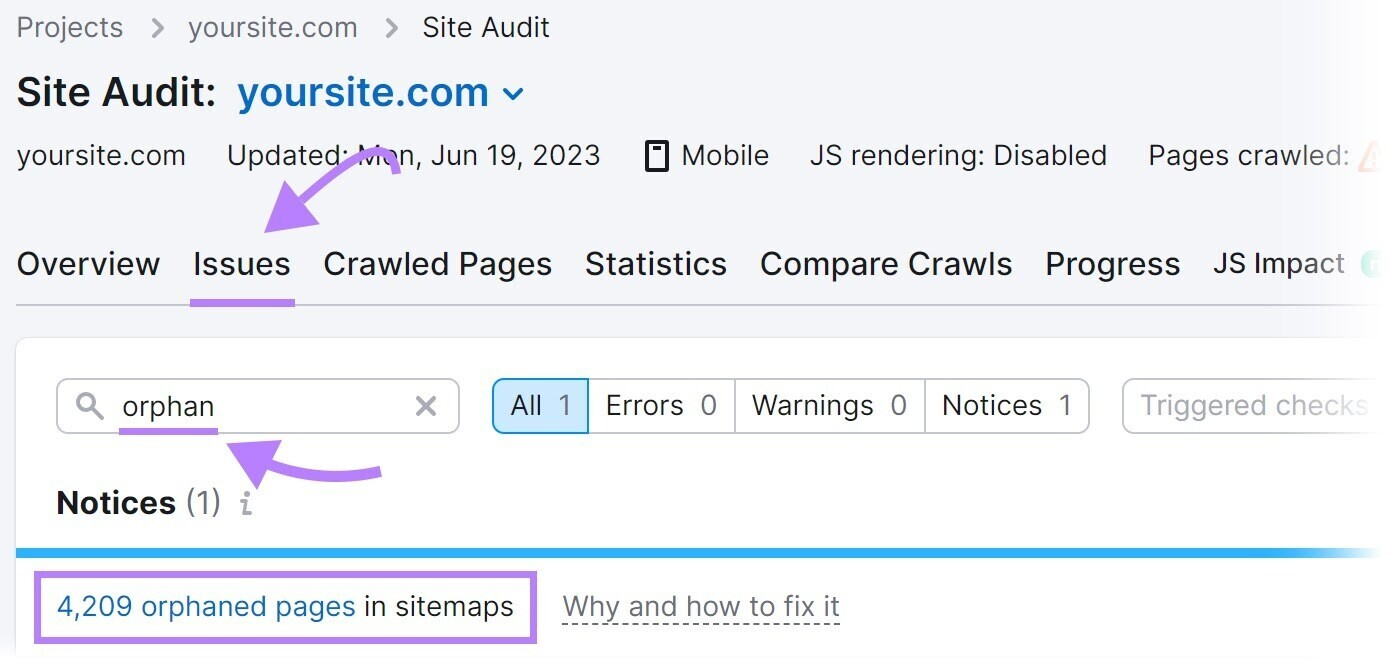

Use Semrush's Site Audit tool.

Configure the tool to run an audit. Then, go to the "Issues" tab and search for "orphan."

The tool will display any orphan pages on your site.

To fix this problem, add internal links to orphan pages from other relevant pages on your site.
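The idea behind an orphan-page check can be sketched in a few lines: compare the pages you know exist (for example, from your sitemap or CMS) with the pages that receive at least one internal link. The function name `find_orphan_pages` and the sample data are assumptions for illustration. A real audit would gather these lists by crawling the site.

```python
def find_orphan_pages(known_pages, links, homepage="/"):
    """Return known pages that no other page links to, excluding the homepage."""
    linked_to = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not make a page discoverable
                linked_to.add(target)
    return sorted(set(known_pages) - linked_to - {homepage})


# Hypothetical inventory of pages and their outgoing internal links.
known_pages = ["/", "/shoes/", "/shoes/sneakers", "/old-promo"]
links = {
    "/": ["/shoes/"],
    "/shoes/": ["/shoes/sneakers", "/"],
    "/old-promo": ["/old-promo", "/"],
}

print(find_orphan_pages(known_pages, links))
```

Each page the function returns is a candidate for a new internal link from a relevant page.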

5. Bad Sitemap Management

A sitemap lists the pages on your site that you want search engines to crawl, index, and rank.

If your sitemap excludes any pages you want found, those pages might go unnoticed, leading to crawlability issues.

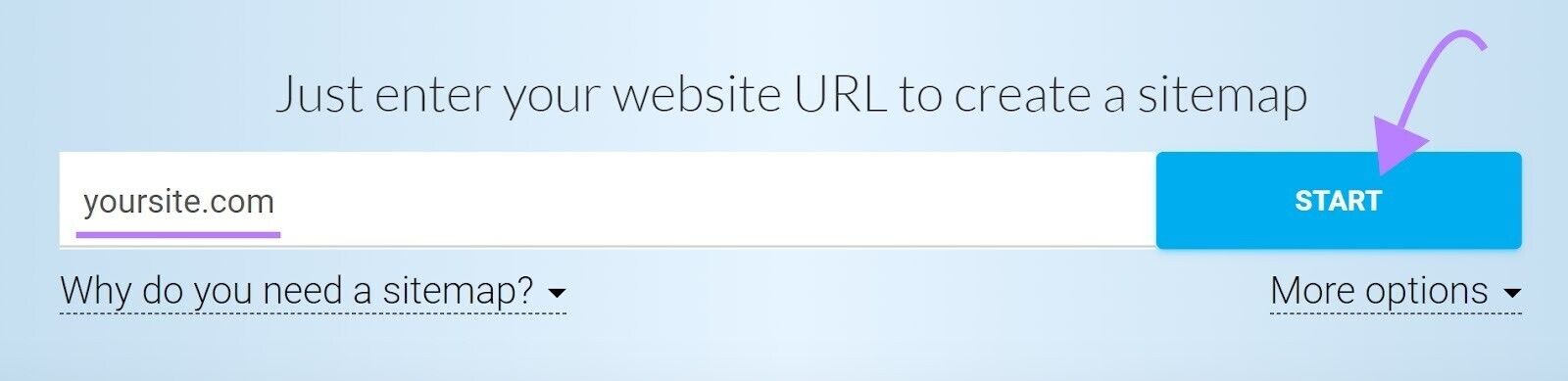

Use a tool like XML Sitemaps Generator to include all pages meant to be crawled.

To generate a sitemap, enter your website URL into the tool. It will automatically create the sitemap for you.

Save the file as "sitemap.xml" and upload it to the root directory of your website.

For example, if your website is www.example.com, your sitemap URL should be www.example.com/sitemap.xml.
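If you prefer to build the file yourself, a basic sitemap is a short XML document. The sketch below uses Python's standard `xml.etree.ElementTree` and the namespace defined by the sitemaps.org protocol. The function name `build_sitemap` and the example URLs are assumptions, and the output contains only the required `<loc>` element for each page.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls) -> str:
    """Return a minimal XML sitemap listing the given absolute URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages you want search engines to crawl.
sitemap_xml = build_sitemap([
    "https://www.example.com/",
    "https://www.example.com/shoes",
])
print(sitemap_xml)

# To save the result for upload to your site's root directory:
# with open("sitemap.xml", "w", encoding="utf-8") as f:
#     f.write(sitemap_xml)
```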

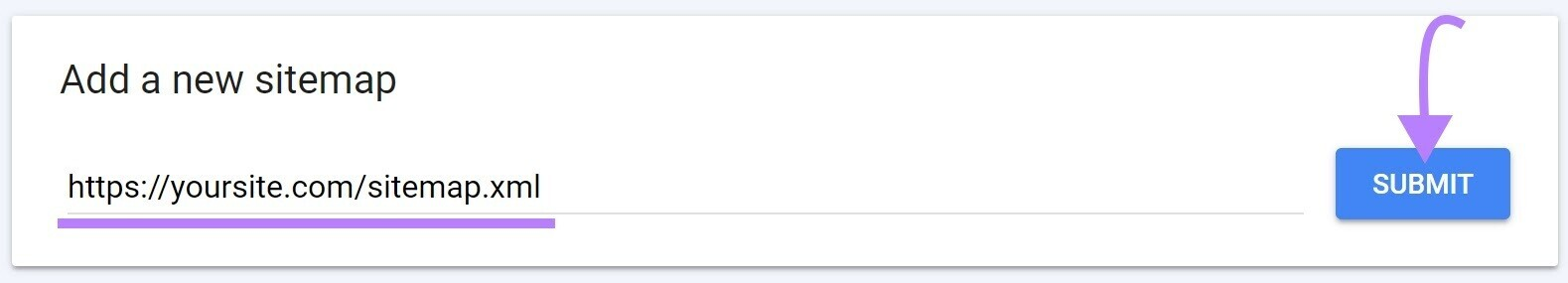

Finally, submit your sitemap to Google through your Google Search Console account.

Access your account, click "Sitemaps" in the left-hand menu, enter your sitemap URL, and click "Submit."

6. ‘Noindex’ Tags

A “noindex” meta robots tag instructs search engines not to index a page.

The tag looks like this:

<meta name="robots" content="noindex">

While the noindex tag is intended to control indexing, it can create crawlability issues if left on your pages for a long time.

Google treats long-term "noindex" tags as nofollow tags, as confirmed by Google's John Mueller.

Over time, Google will stop crawling the links on those pages entirely.

If your pages aren’t getting crawled, long-term noindex tags might be the culprit.

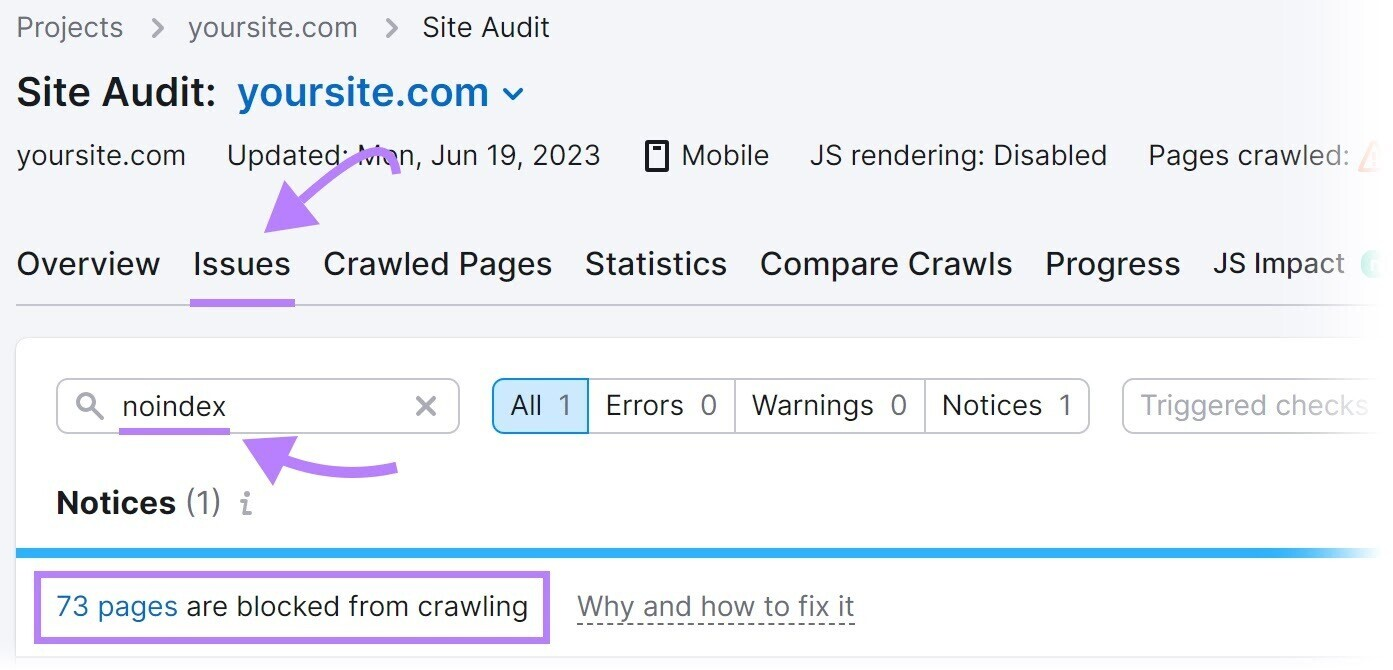

Identify these pages using Semrush's Site Audit tool. Set up a project in the tool to run a crawl. Once complete, go to the "Issues" tab and search for "noindex."

The tool will list pages on your site with a "noindex" tag.

Review these pages and remove the "noindex" tag where appropriate.
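For a quick manual check of one page, you can read the directives in its meta robots tag with a small script. This is a sketch using Python's built-in `html.parser`. The names `RobotsMetaParser` and `robots_directives` are hypothetical, and the script looks only at the HTML meta tag, not at HTTP response headers.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        if (attributes.get("name") or "").lower() != "robots":
            return
        content = attributes.get("content") or ""
        # Directives are comma-separated, e.g. "noindex, nofollow".
        for part in content.split(","):
            if part.strip():
                self.directives.add(part.strip().lower())


def robots_directives(html: str) -> set:
    """Return the set of meta robots directives found in the HTML."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return parser.directives


# Hypothetical page head.
page = '<head><meta name="robots" content="noindex, nofollow"></head>'
print("noindex" in robots_directives(page))
```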

7. Slow Site Speed

Search engine bots have limited time and resources to spend crawling your site. This allowance is known as your crawl budget.

Slow site speed causes pages to load slowly, reducing the number of pages bots can crawl in a session. As a result, important pages might be excluded.

To solve this problem, improve your website's overall performance and speed.

Start with our guide to page speed optimization.

8. Internal Broken Links

Internal broken links are hyperlinks that point to dead pages on your site.

These pages typically return a 404 (Not Found) status code.

Broken links can significantly impact website crawlability because they prevent search engine bots from accessing the linked pages.

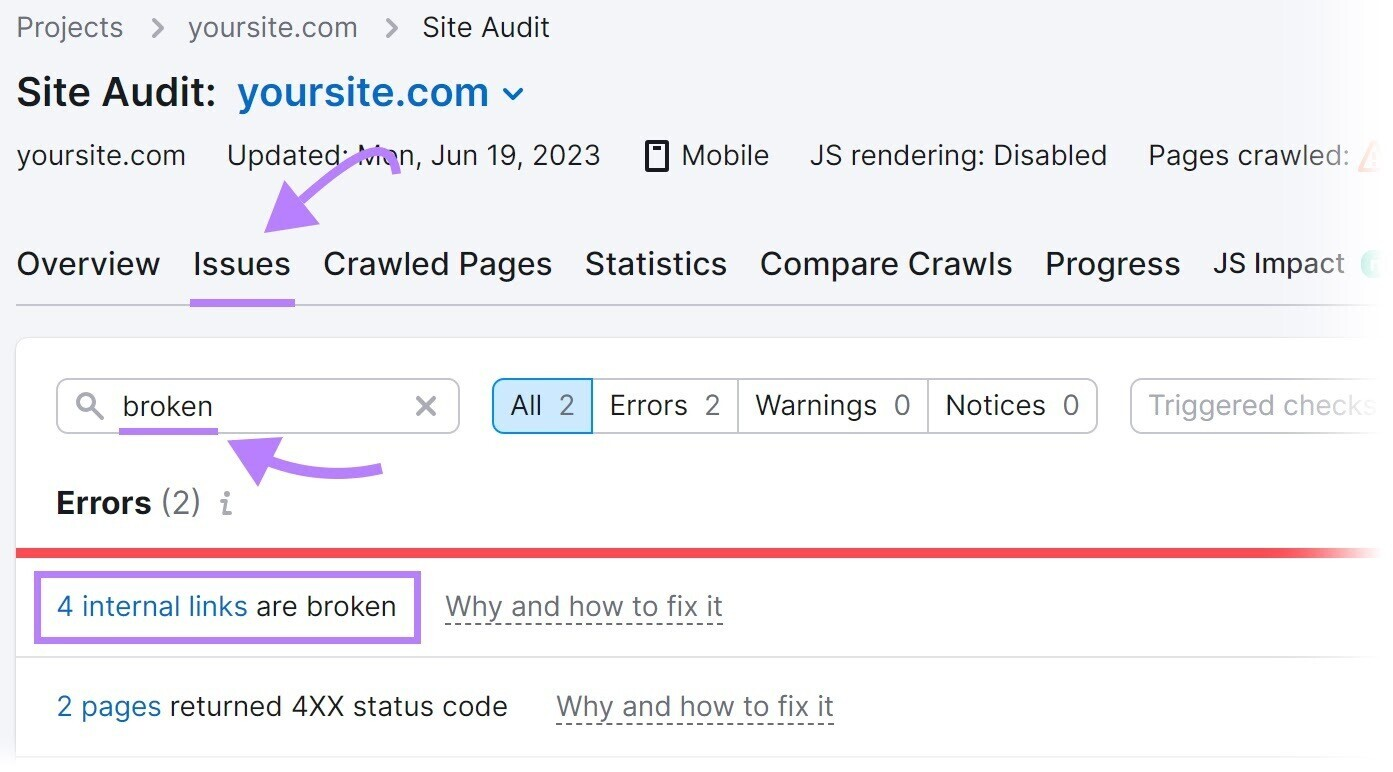

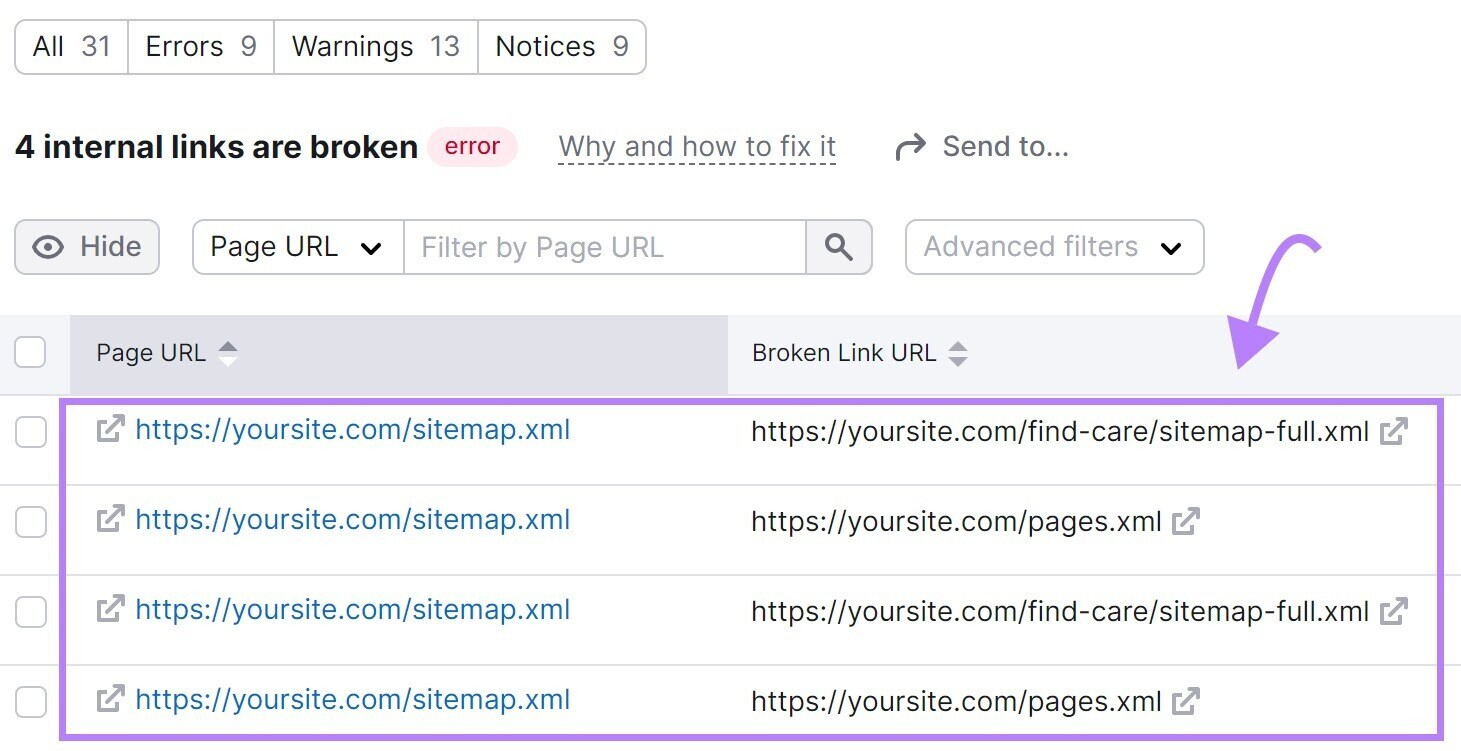

To find broken links on your site, use the Site Audit tool.

Navigate to the "Issues" tab and search for "broken."

Click “# internal links are broken,” and you’ll see a report listing all your broken links.

To fix these broken links, substitute a different link, restore the missing page, or add a 301 redirect to another relevant page on your site.
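To illustrate what "broken" means at the HTTP level, here is a minimal sketch that requests a URL and classifies the status code it returns. It uses Python's standard `urllib`. The function names `classify_status` and `fetch_status` and the labels they return are assumptions for this example, and some servers respond to HEAD requests differently than to normal page loads.

```python
import urllib.error
import urllib.request


def classify_status(status: int) -> str:
    """Map an HTTP status code to a rough crawlability label."""
    if 200 <= status < 300:
        return "ok"
    if 300 <= status < 400:
        return "redirect"
    if 400 <= status < 500:
        return "broken"        # e.g. 404 Not Found
    if 500 <= status < 600:
        return "server error"  # e.g. 500 Internal Server Error
    return "unknown"


def fetch_status(url: str, timeout: float = 10.0) -> int:
    """Return the final HTTP status for url (urllib follows redirects itself)."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # 4xx and 5xx responses are raised as HTTPError; the code is still useful.
        return error.code


# Offline demonstration with hypothetical results.
for code in (200, 404, 500):
    print(code, classify_status(code))
```

To check a live link, you would call `classify_status(fetch_status(url))` for each internal URL you want to test.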

9. Server-Side Errors

Server-side errors, such as 500 HTTP status codes, disrupt the crawling process because the server can't fulfill the request.

This makes it difficult for bots to crawl your website's content.

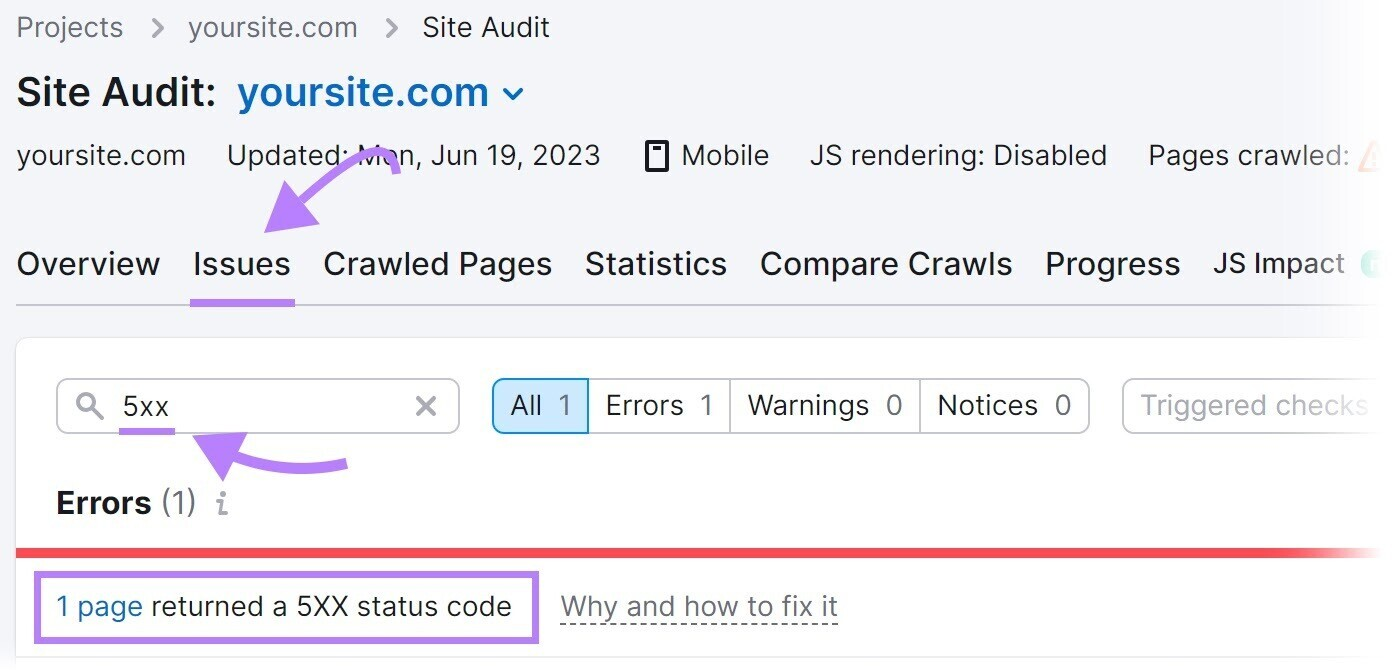

To identify and fix server-side errors, use Semrush's Site Audit tool. Navigate to the "Issues" tab and search for "5xx."

If errors are present, click on "# pages returned a 5XX status code" to view a complete list of affected pages.

Then, send this list to your developer to configure the server properly.

10. Redirect Loops

A redirect loop occurs when one page redirects to another page, which then redirects back to the original page, creating a continuous loop.

Redirect loops prevent search engine bots from reaching the final destination because the bots become trapped in an endless cycle of redirects between two or more pages. This wastes crawl budget that could be spent on important pages.

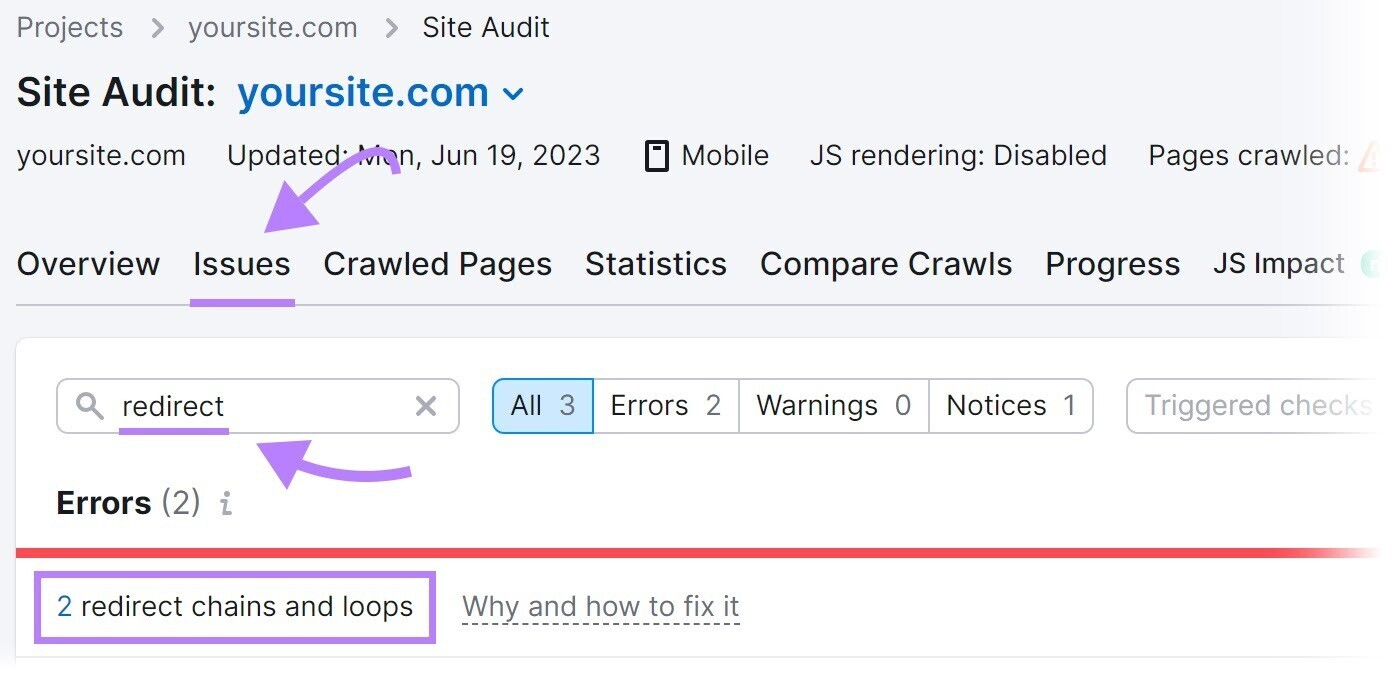

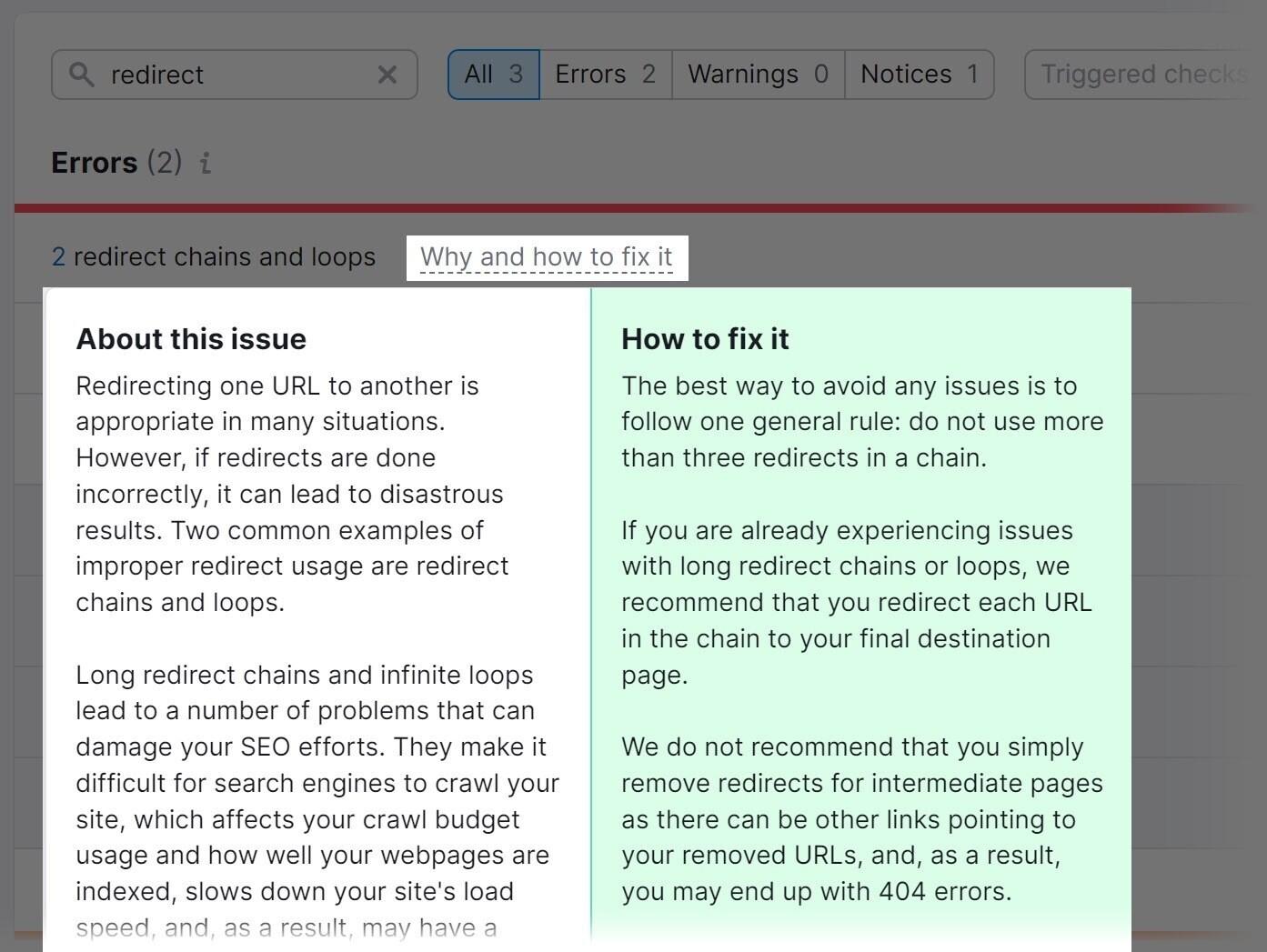

To identify and fix redirect loops on your site, use the Site Audit tool.

Navigate to the "Issues" tab and search for "redirect."

The tool will display any redirect loops and offer advice on how to address them when you click on "Why and how to fix it."
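The logic of spotting a loop is simple to sketch: follow each redirect in turn and stop if you land on a URL you have already visited. The example below assumes you have a mapping of source URLs to redirect targets (for instance, exported from your server configuration). The `redirects` data and the name `find_redirect_loop` are hypothetical.

```python
def find_redirect_loop(redirects: dict, start: str):
    """Follow redirects from start; return the looping URLs, or None if the chain ends."""
    seen = []
    current = start
    while current in redirects:
        if current in seen:
            # Return only the part of the chain that repeats.
            return seen[seen.index(current):] + [current]
        seen.append(current)
        current = redirects[current]
    return None


# Hypothetical redirect rules: /a and /b point at each other.
redirects = {
    "/a": "/b",
    "/b": "/a",
    "/old": "/new",
}

print(find_redirect_loop(redirects, "/a"))
print(find_redirect_loop(redirects, "/old"))
```

A chain that returns `None` ends at a real page. Anything else names the URLs whose rules need to be untangled.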

11. Access Restrictions

Pages with access restrictions, such as those behind login forms or paywalls, can prevent search engine bots from crawling them.

As a result, these pages may not appear in search results, limiting their visibility to users.

In some cases, restricting access to certain pages makes sense.

For example, membership-based websites or subscription platforms often restrict pages to paying members or registered users.

This allows the site to provide exclusive content, special offers, or personalized experiences, creating a sense of value and incentivizing users to subscribe or become members.

However, if significant portions of your website are restricted, this becomes a crawlability mistake.

Assess the need for restricted access for each page, and keep restrictions only on pages that truly require them.

Remove restrictions from those that don’t.

12. URL Parameters

URL parameters, also known as query strings, are parts of a URL that follow a question mark (?) and help with tracking and organization.

For example: example.com/shoes?color=blue.

How can URL parameters impact your website's crawlability?

URL parameters can create an almost infinite number of URL variations.

This often occurs on ecommerce category pages; when you apply filters like size, color, or brand, the URL changes to reflect these selections.

If your website has a large catalog, you may end up with thousands or even millions of URLs.

If these parameterized URLs aren’t managed well, Google may waste crawl budget on them, which may result in some of your important pages not being crawled.

You need to decide which URL parameters are helpful for search and should be crawled.

You can do this by understanding whether people are searching for the specific content generated when a parameter is applied.

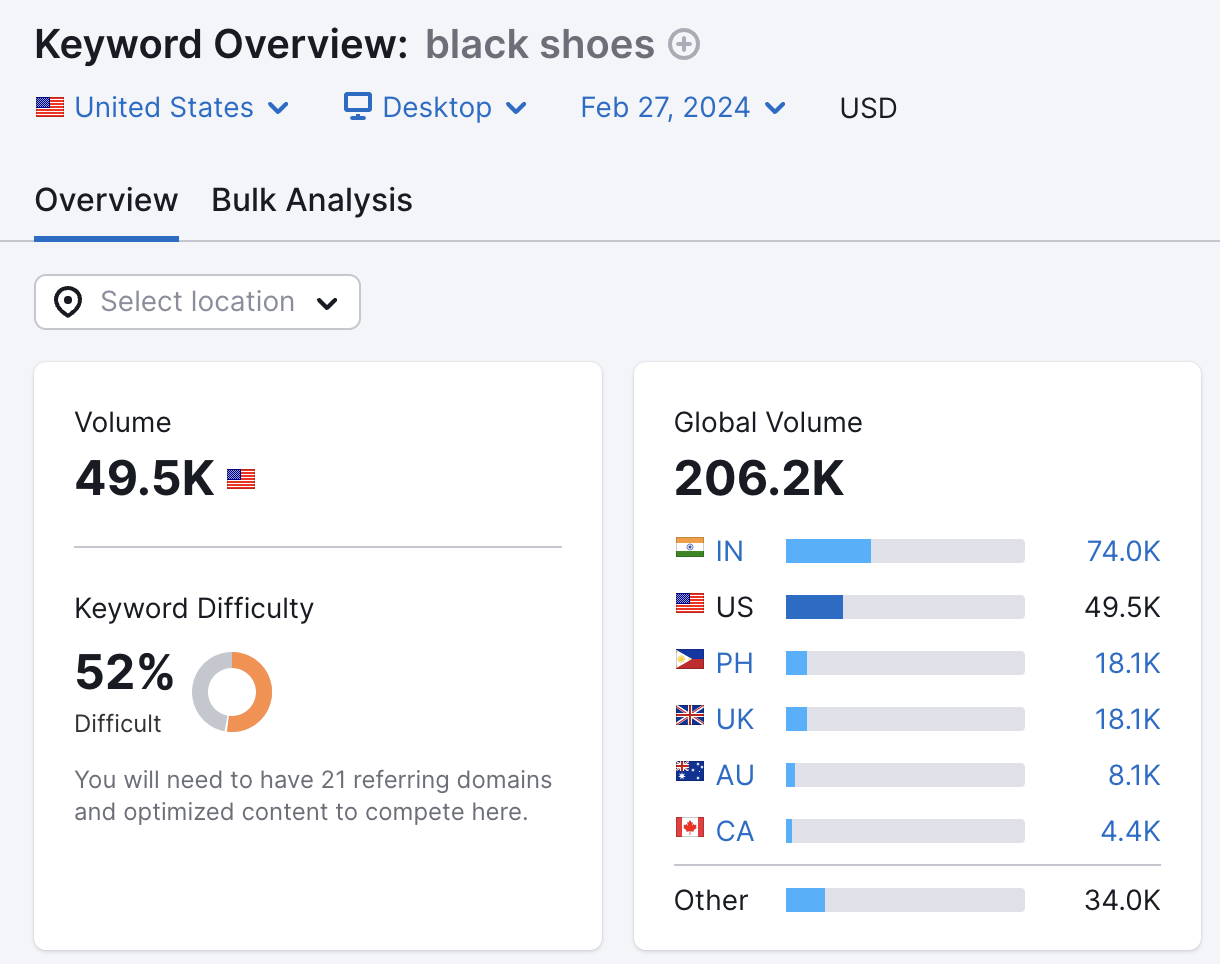

For example, when shopping online, people often search by color, such as "black shoes."

This means the "color" parameter is helpful, and a URL like example.com/shoes?color=black should be crawled.

However, some parameters aren’t helpful for search and shouldn’t be crawled.

For example, the "rating" parameter filters products by customer ratings, such as example.com/shoes?rating=5.

Few people search for shoes by customer rating.

Therefore, you should prevent URLs that aren’t helpful for search from being crawled. You can do this either with rules in your robots.txt file or by adding the nofollow attribute (rel="nofollow") to internal links pointing to those parameterized URLs.

This will ensure your crawl budget is spent efficiently and on the right pages.
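The decision itself can be expressed as a small rule: a URL is worth crawling unless it carries a parameter you have judged unhelpful for search. Here is a minimal sketch using Python's standard `urllib.parse`. The set `UNHELPFUL_PARAMS` and the function `should_crawl` are assumptions for illustration. The script only classifies URLs and does not block anything by itself.

```python
from urllib.parse import parse_qs, urlsplit

# Parameters judged unhelpful for search in this example.
UNHELPFUL_PARAMS = {"rating"}


def should_crawl(url: str, unhelpful_params=UNHELPFUL_PARAMS) -> bool:
    """Return False if the URL's query string uses any unhelpful parameter."""
    query = urlsplit(url).query
    params = parse_qs(query, keep_blank_values=True)
    return not (set(params) & set(unhelpful_params))


print(should_crawl("https://example.com/shoes?color=black"))
print(should_crawl("https://example.com/shoes?rating=5"))
print(should_crawl("https://example.com/shoes?color=black&rating=5"))
```

A list produced this way can guide which patterns you add to robots.txt or which internal links you mark as nofollow.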

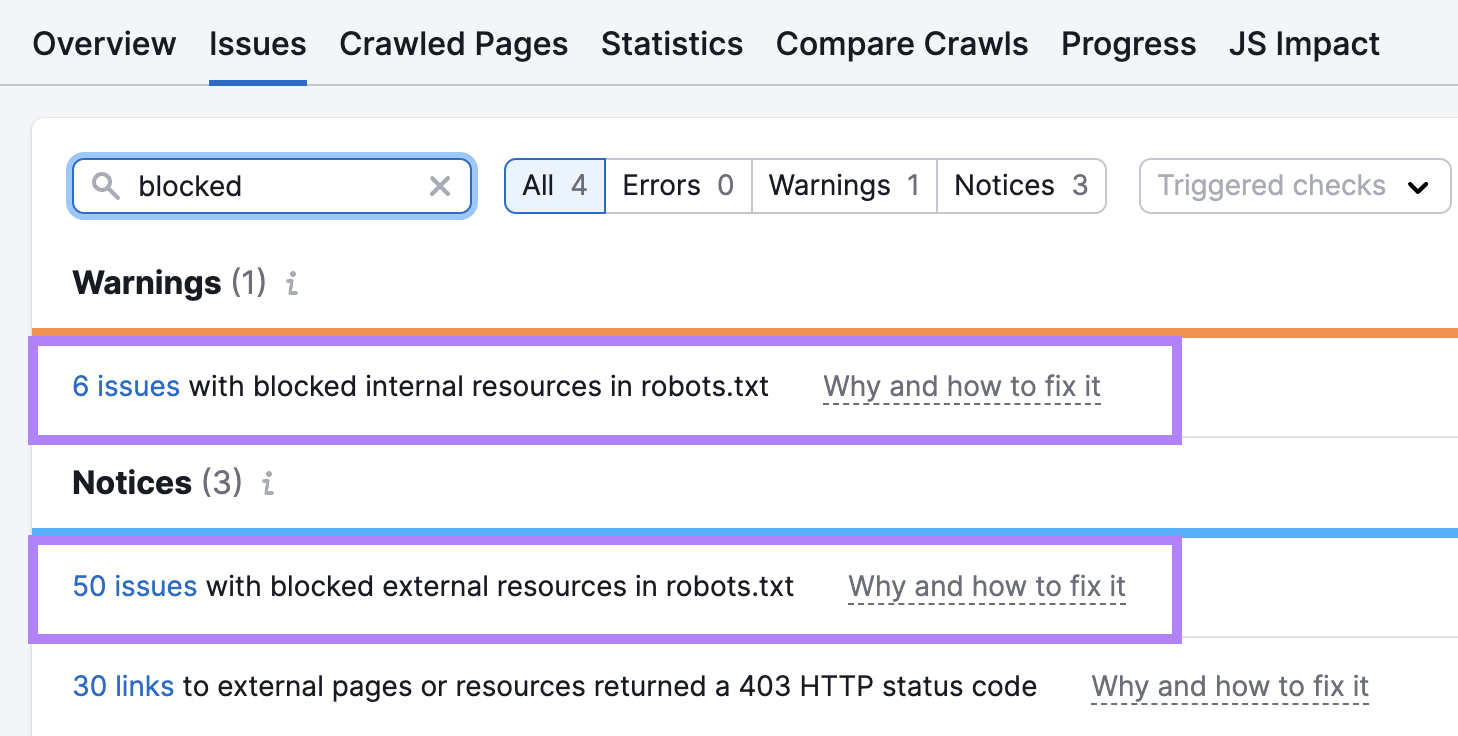

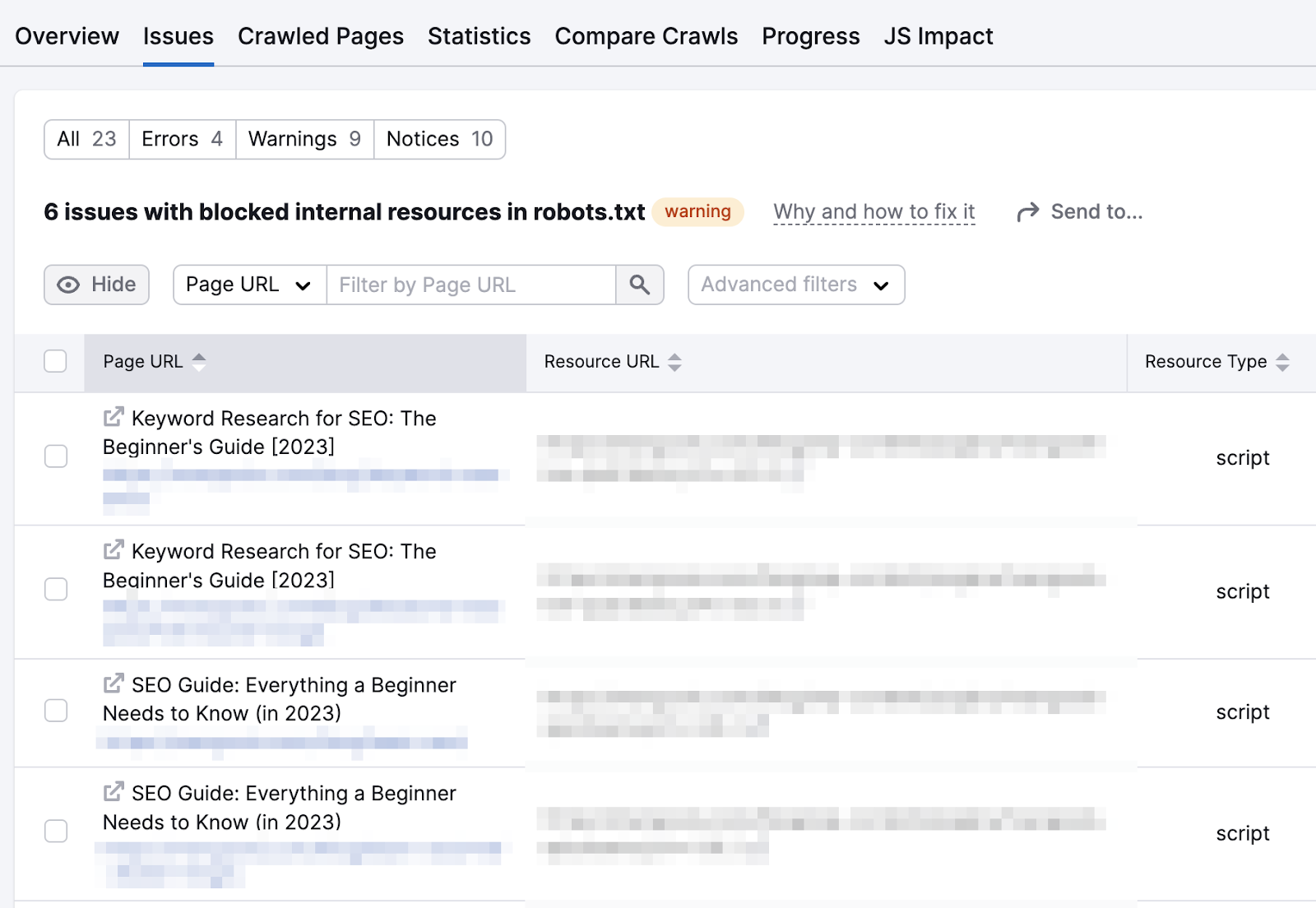

13. JavaScript Resources Blocked in Robots.txt

Many modern websites use JavaScript, which is contained in .js files.

Blocking access to these .js files via robots.txt can create crawlability issues, especially if you block essential JavaScript files.

For example, if you block a JavaScript file that loads the main content of a page, crawlers may not be able to see that content.

Review your robots.txt file to ensure you’re not blocking important JavaScript files.

Alternatively, use Semrush's Site Audit tool. Navigate to the "Issues" tab and search for "blocked."

If issues are detected, click on the blue links.

You’ll see the exact resources that are blocked.

At this point, consult your developer.

They can identify which JavaScript files are critical for your website's functionality and content visibility, and shouldn’t be blocked.
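As a rough first pass before that conversation, you can list the script files a page loads and test each one against your robots.txt rules. The sketch below combines Python's `html.parser` and `urllib.robotparser`. The names `ScriptCollector` and `blocked_scripts`, the sample page, and the sample rules are all hypothetical. Only scripts on the same host as the page are checked, because other hosts have their own robots.txt files.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit
from urllib.robotparser import RobotFileParser


class ScriptCollector(HTMLParser):
    """Collects the src attribute of every <script> tag."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        src = dict(attrs).get("src")
        if tag == "script" and src:
            self.sources.append(src)


def blocked_scripts(page_url, html, robots_txt, user_agent="Googlebot"):
    """Return same-host script URLs that robots_txt disallows for user_agent."""
    collector = ScriptCollector()
    collector.feed(html)
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    host = urlsplit(page_url).netloc
    blocked = []
    for src in collector.sources:
        script_url = urljoin(page_url, src)  # resolve relative paths
        if urlsplit(script_url).netloc != host:
            continue  # governed by another site's robots.txt
        if not rules.can_fetch(user_agent, script_url):
            blocked.append(script_url)
    return blocked


# Hypothetical page and rules.
html = '<script src="/assets/js/app.js"></script><script src="/static/menu.js"></script>'
robots_txt = "User-agent: *\nDisallow: /assets/js/"
print(blocked_scripts("https://www.example.com/shoes", html, robots_txt))
```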

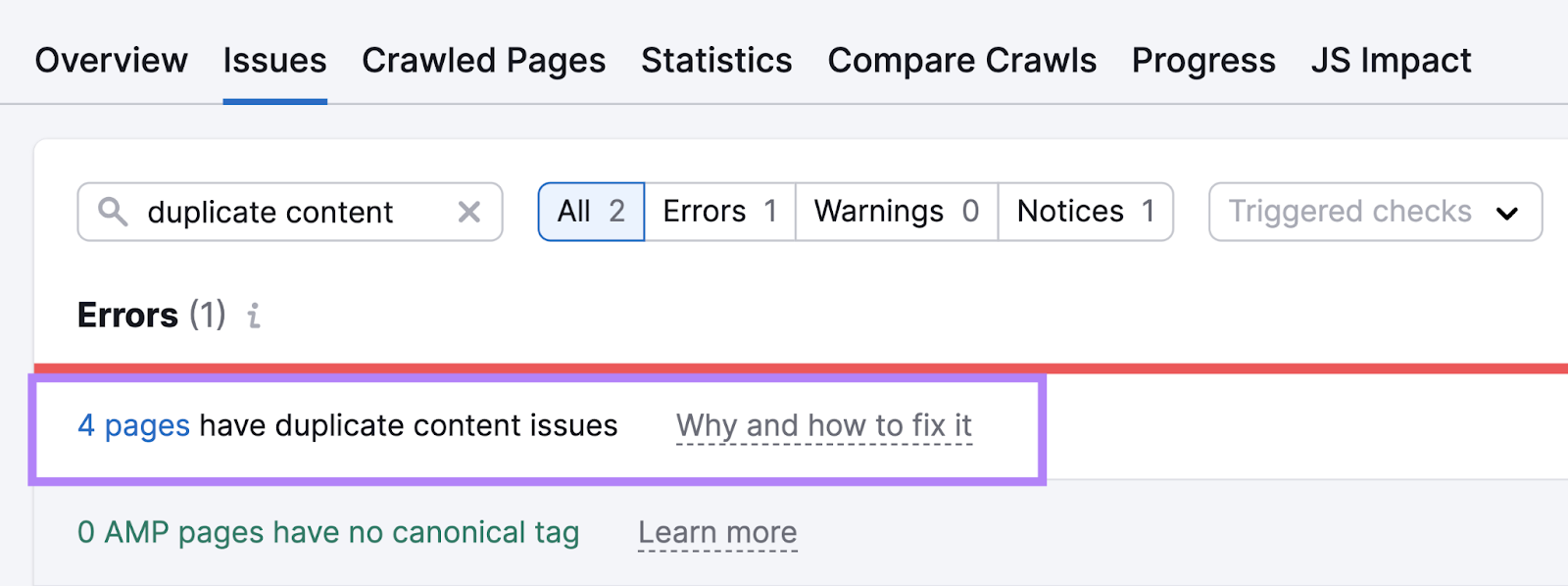

14. Duplicate Content

Duplicate content refers to identical or nearly identical content that appears on multiple pages of your website.

Let’s say you publish a blog post that is accessible via multiple URLs:

- example.com/blog/your-post

- example.com/news/your-post

- example.com/articles/your-post

Even though the content is the same, the different URLs cause search engines to crawl all of them.

This wastes crawl budget that could be better spent on other important pages.

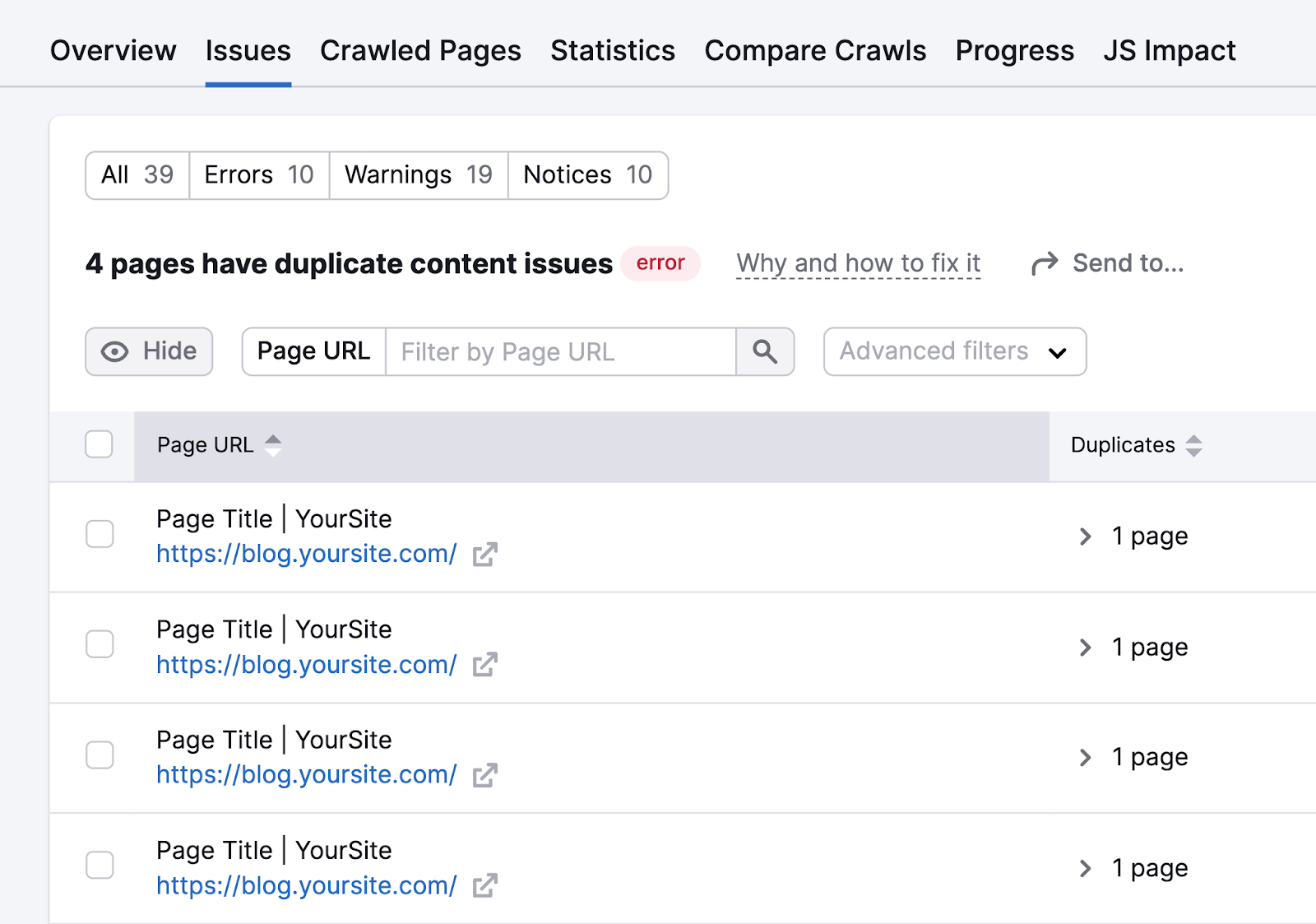

Use Semrush's Site Audit tool to identify and eliminate these issues.

Go to the "Issues" tab and search for "duplicate content." The tool will show if any errors are detected.

Click on the "# pages have duplicate content issues" link to see a list of all affected pages.

If the duplicates are mistakes, redirect those pages to the main URL you want to keep.

If the duplicates are necessary—for example, if you have intentionally placed the same content in multiple sections to address different audiences—you can implement canonical tags.

Canonical tags help search engines identify the main page you want to be indexed.
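To show how identical content under different URLs can be detected, here is a minimal sketch that groups pages by a hash of their text. It uses Python's standard `hashlib`. The function name `group_duplicates` and the sample pages are assumptions, and this approach only catches copies that match exactly after whitespace and letter case are normalized, not near-duplicates.

```python
import hashlib
from collections import defaultdict


def group_duplicates(pages: dict) -> list:
    """Group URLs whose text is identical after normalizing whitespace and case."""
    groups = defaultdict(list)
    for url, text in pages.items():
        normalized = " ".join(text.split()).lower()
        digest = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        groups[digest].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]


# Hypothetical pages: the same post published under three paths.
pages = {
    "example.com/blog/your-post": "How to choose running shoes.",
    "example.com/news/your-post": "How to choose  running shoes.",
    "example.com/articles/your-post": "how to choose running shoes.",
    "example.com/about": "About our store.",
}
print(group_duplicates(pages))
```

Each group is a set of URLs where you would either redirect the extras or point canonical tags at the version you want indexed.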

15. Poor Mobile Experience

Google uses mobile-first indexing, which means it primarily uses the mobile version of your site, rather than the desktop version, when crawling and indexing.

If your site takes a long time to load on mobile devices, it can affect your crawlability. Google may need to allocate more time and resources to crawl your entire site.

If your site is not responsive—that is, it does not adapt to different screen sizes or work properly on mobile devices—Google may find it harder to understand your content and access other pages.

To address this issue, review your site to see how it works on mobile devices.

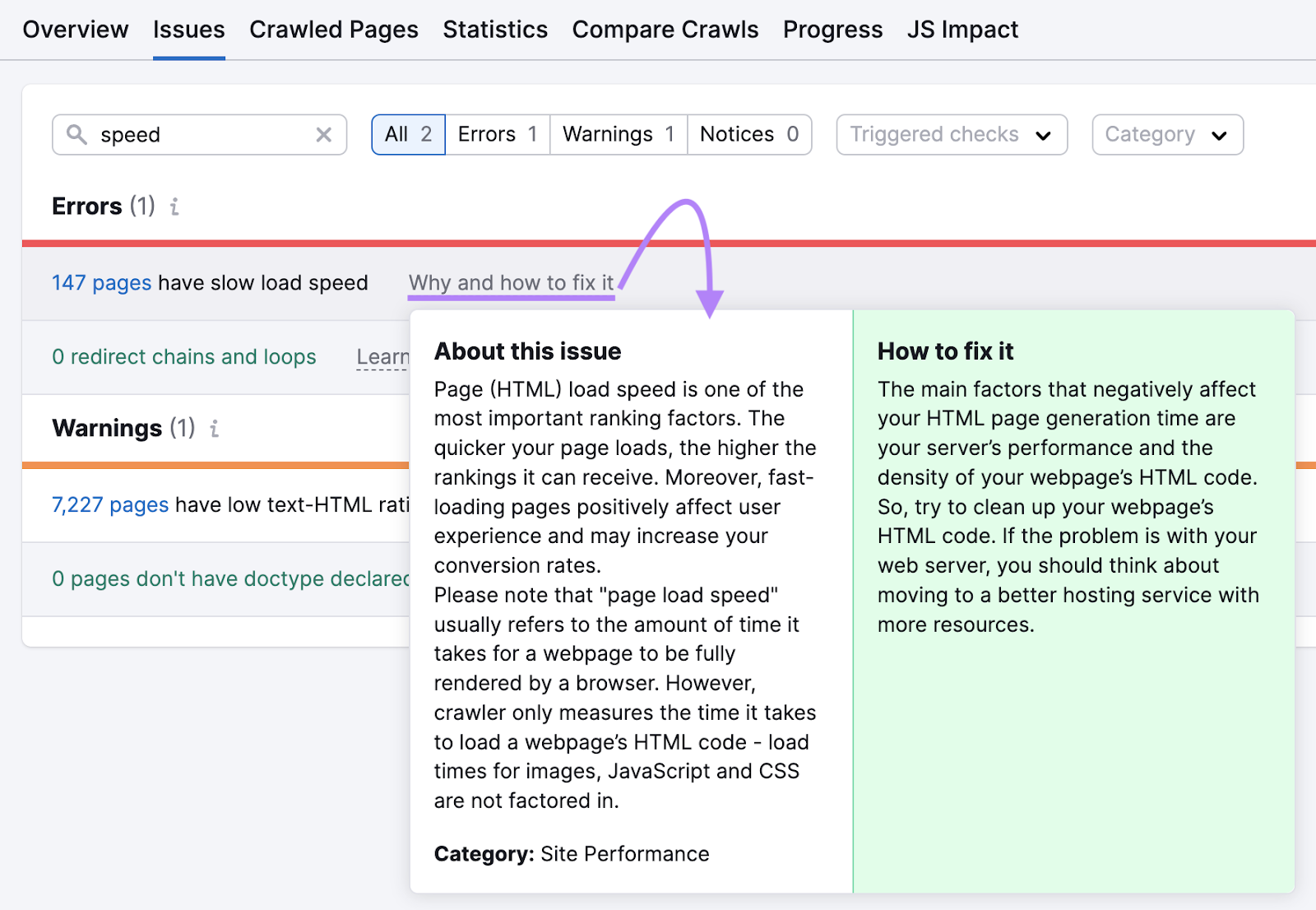

Run a free SEO check for a quick snapshot of your page speed. To find slow-loading pages across your entire site, use the Semrush Site Audit tool.

Navigate to the "Issues" tab and search for "speed."

The tool will show any errors if you have affected pages and offer advice on how to improve their speed.

Stay Ahead of Crawlability Issues

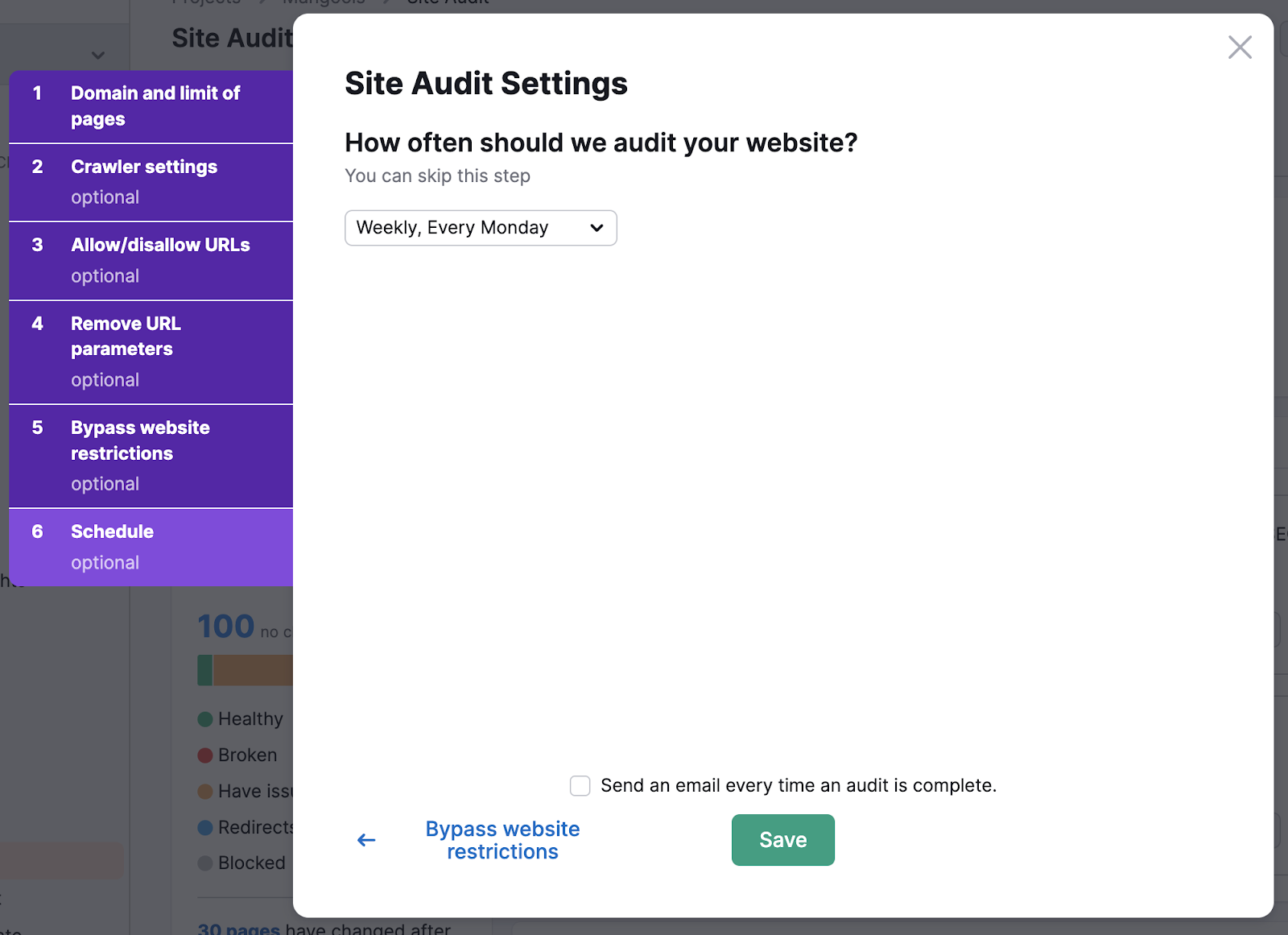

Crawlability problems aren’t a one-time fix.

Even if you solve them now, they might recur in the future, especially if your website is large and undergoes frequent changes. Regularly monitoring your site's crawlability is essential.

Use the free website audit for a quick crawlability check on your homepage. Or, schedule automatic weekly audits across your entire site using the Semrush Site Audit tool.

Navigate to the audit settings for your site and turn on weekly audits.

This way, you can ensure that any crawlability issues are promptly identified.