The term “split-testing” is familiar to most marketing departments. Email marketers use it to test subject lines; designers use it to see which page layout persuades more people to buy.

SEO teams, on the other hand, don’t seem to be using split-testing to its full potential.

How Does SEO Split-Testing Work?

An SEO split-test works by dividing a cohort of templatized pages on your website into two groups: Control and Variant. The Variant group has one on-page element changed, added, or removed (like the words “free shipping” in a meta description). Once the test is over, you compare SEO metrics such as organic traffic, rankings, and CTR between the two groups to see whether the change was successful.
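
As a rough illustration of that first step, here is a minimal Python sketch that assigns templatized URLs to Control or Variant by hashing each URL. The function names and the `salt` label are hypothetical, and hashing is just one simple way to get a repeatable, roughly even split; it is not necessarily how any particular testing tool does it.

```python
import hashlib


def assign_group(url: str, salt: str = "test-001") -> str:
    """Deterministically assign a URL to 'control' or 'variant'.

    `salt` is a hypothetical per-experiment label; changing it reshuffles pages.
    """
    digest = hashlib.sha256(f"{salt}:{url}".encode("utf-8")).hexdigest()
    # Odd hash values go to Variant, even ones to Control (roughly 50/50).
    return "variant" if int(digest, 16) % 2 else "control"


def split_pages(urls: list[str], salt: str = "test-001") -> dict[str, list[str]]:
    """Group templatized page URLs into control and variant buckets."""
    groups: dict[str, list[str]] = {"control": [], "variant": []}
    for url in urls:
        groups[assign_group(url, salt)].append(url)
    return groups
```

Because the assignment depends only on the URL and the salt, rerunning the split gives the same groups, which makes it easier to audit which pages received the change.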

Who is a Good Fit for SEO Split-Testing?

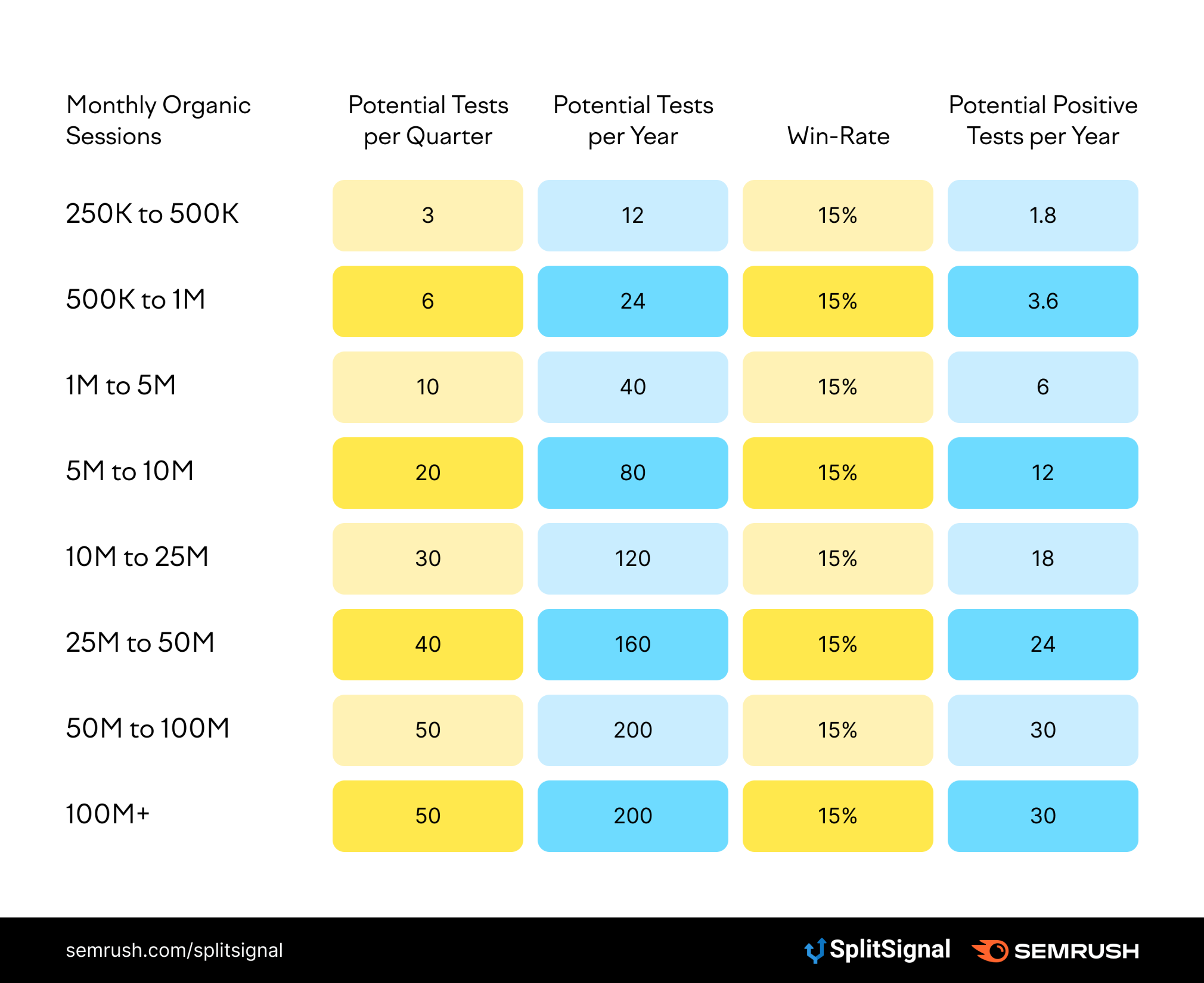

Unlike traditional CRO testing, statistical SEO split-testing is only for large, enterprise-level companies and websites. In general, running a statistically significant SEO split-test requires at least 250,000 monthly organic sessions. Here’s a quick table that helps explain whether split-testing is worth your time:

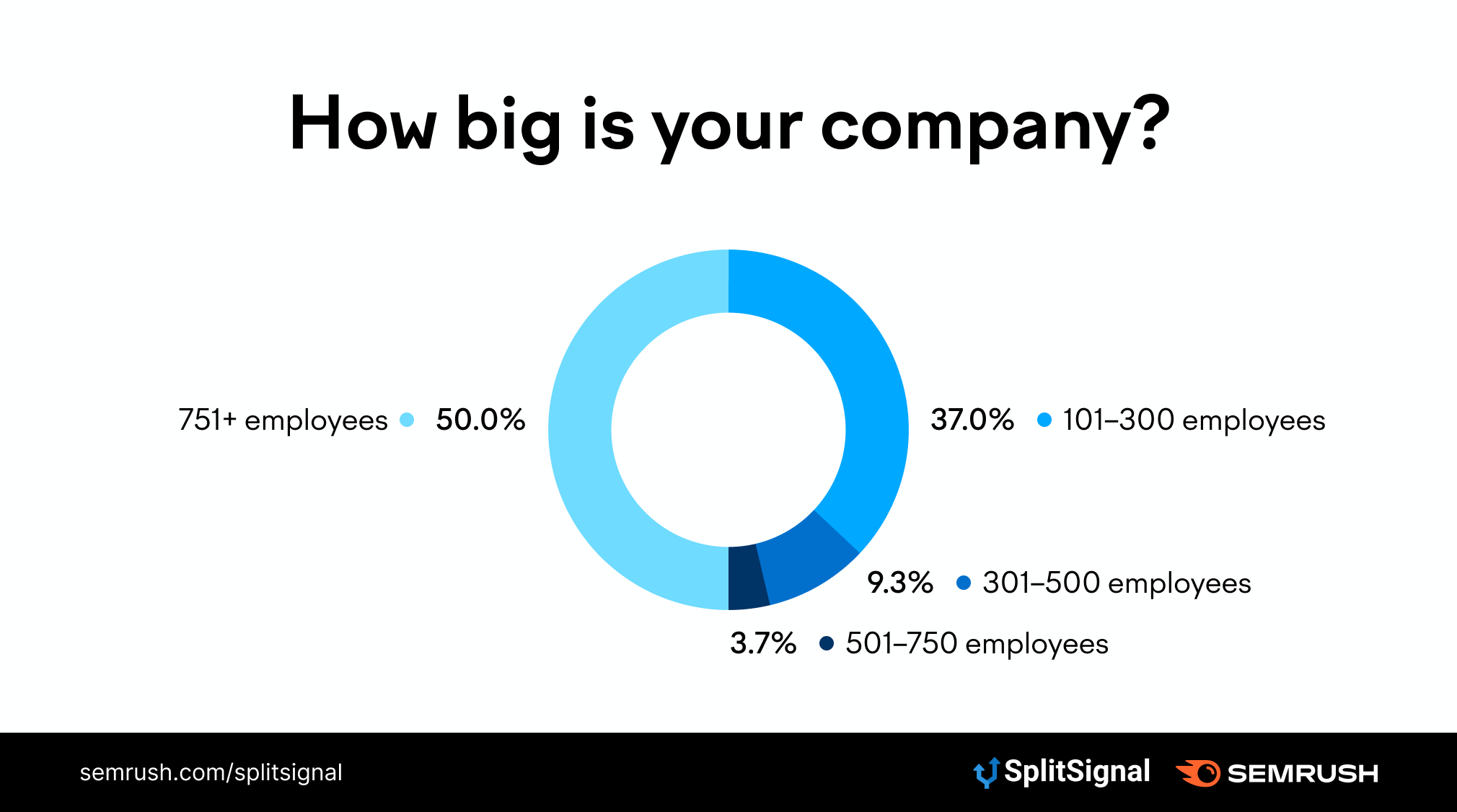

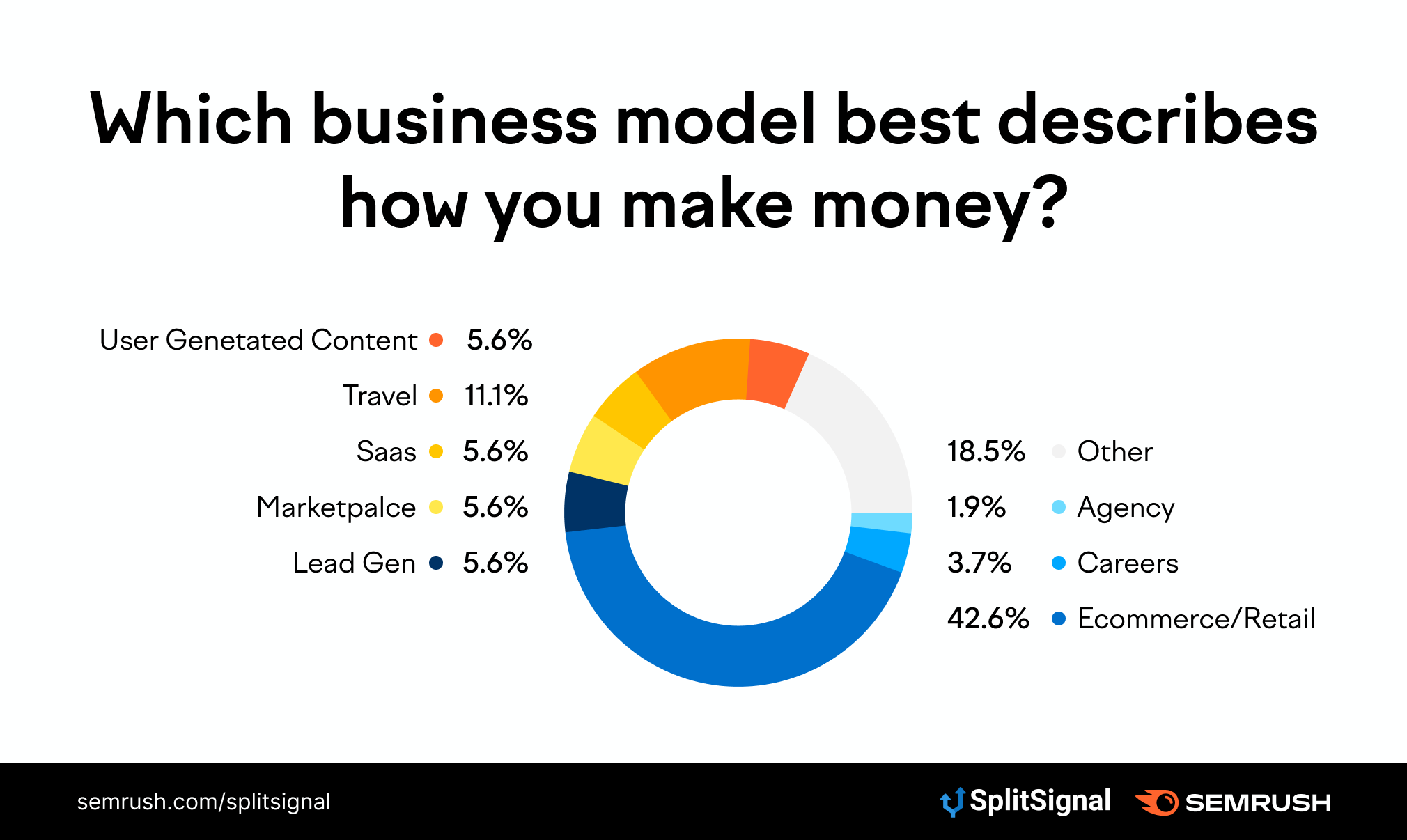

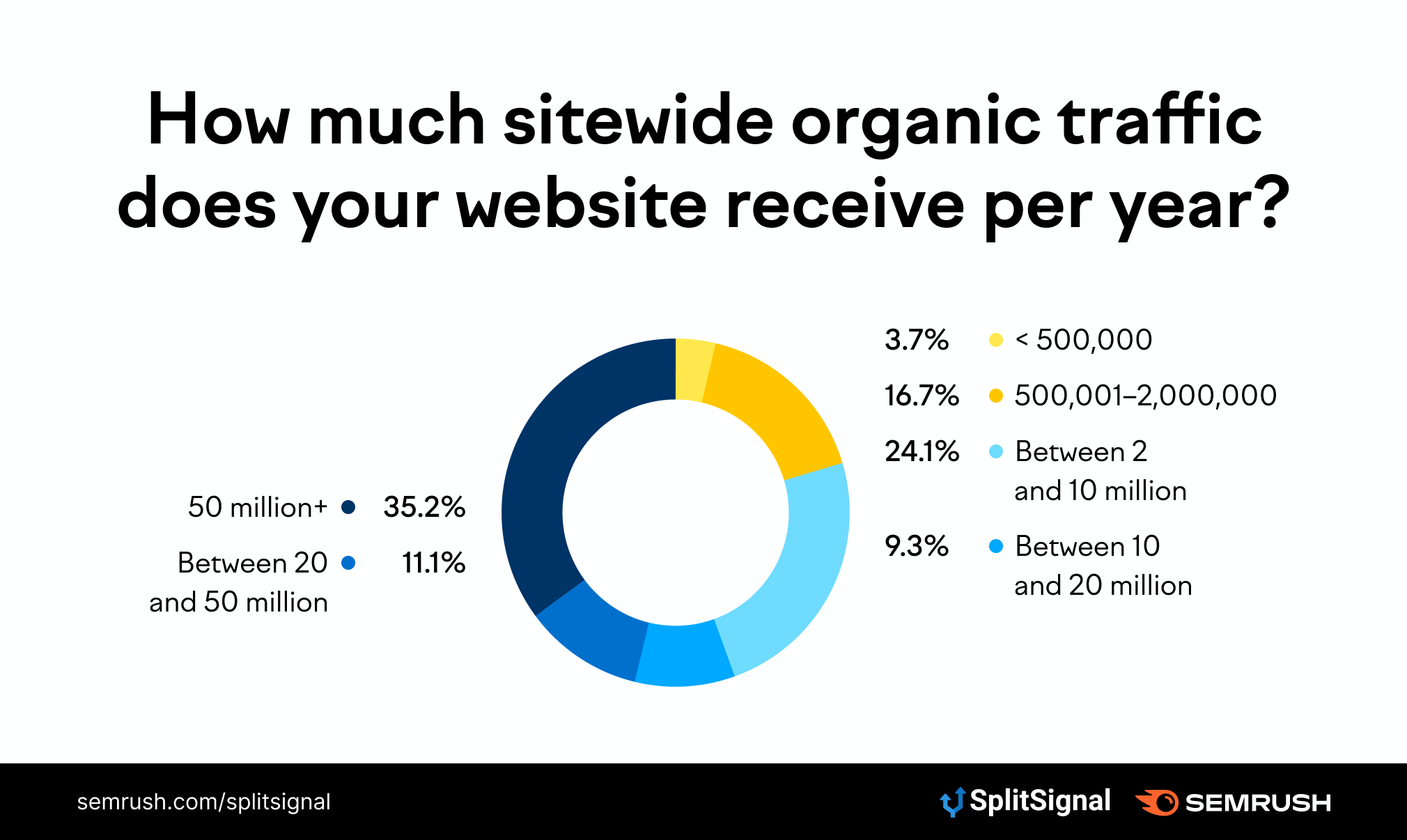

We polled 54 search engine optimizers working at companies with 100+ employees and asked what their current SEO testing strategy looks like.

The goal? To figure out which SEO experiments modern teams are running — including the methodologies currently being used, the challenges associated with statistical SEO testing, and the typical results of an experiment.

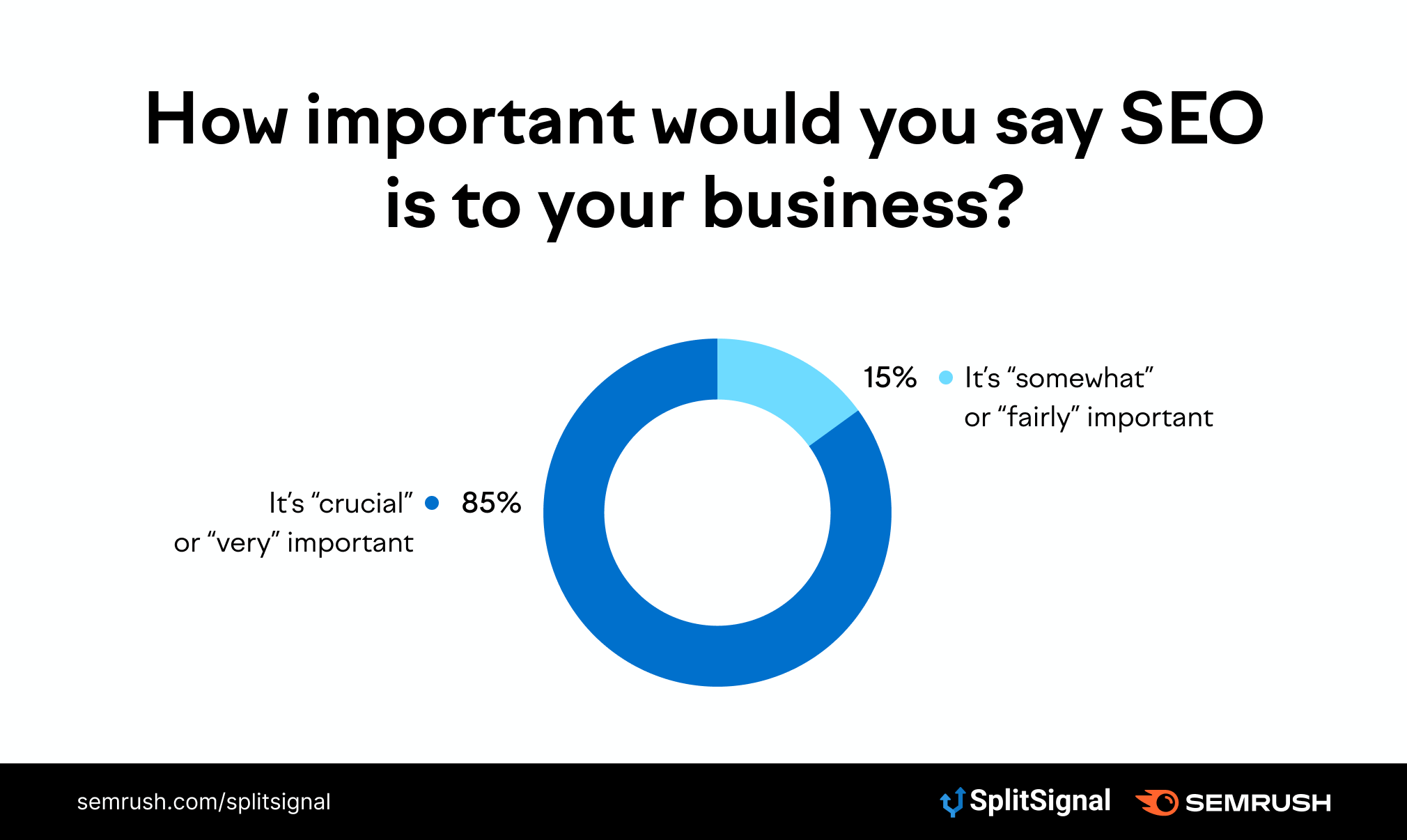

SEO is a valuable marketing tool for companies of all sizes. Some 48% of online shopping journeys start with a search engine, so it comes as no surprise that the vast majority of marketers believe SEO to be “very” or “crucially” important to their business.

However, there’s a disconnect between the importance of SEO and marketers’ willingness to test it. Just 65% of respondents say they actively test their SEO strategy.

Proving value to leaders and stakeholders is the biggest SEO challenge

SEO has a reputation for being notoriously hard to track. Granted, you can use keyword ranking positions and organic traffic data to show the value of your strategy. But many stumble when it comes to turning those SEO metrics into data that proves how the strategy impacts a business’s bottom line.

Because of this, almost one third (31%) of SEOs say their biggest challenge is proving the value of their strategy to leaders and stakeholders.

Statistical SEO split-testing helps to solve that issue. You can compare the uplift in traffic on Variant pages to any trends in revenue generated from those URLs, and confidently explain that your SEO experiment made a difference to your bottom line.
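
One common way to express that uplift is to subtract the Control group’s growth from the Variant group’s growth, so site-wide effects like seasonality cancel out. The sketch below assumes you already have total organic sessions for each group before and during the test; the function names are hypothetical, and this is an illustration rather than the method any specific tool uses.

```python
def relative_change(before: float, after: float) -> float:
    """Fractional change from `before` to `after` (0.10 means +10%)."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (after - before) / before


def control_adjusted_uplift(
    control_before: float,
    control_after: float,
    variant_before: float,
    variant_after: float,
) -> float:
    """Variant growth minus Control growth, as a fraction.

    Subtracting the Control change removes site-wide effects such as seasonality.
    """
    return relative_change(variant_before, variant_after) - relative_change(
        control_before, control_after
    )
```

With made-up numbers, if Control sessions grow from 10,000 to 10,500 (+5%) while Variant sessions grow from 10,000 to 11,500 (+15%), `control_adjusted_uplift(10_000, 10_500, 10_000, 11_500)` comes out at roughly 0.10, a ten-point uplift attributable to the change. That figure can then be set against revenue from the same URLs.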

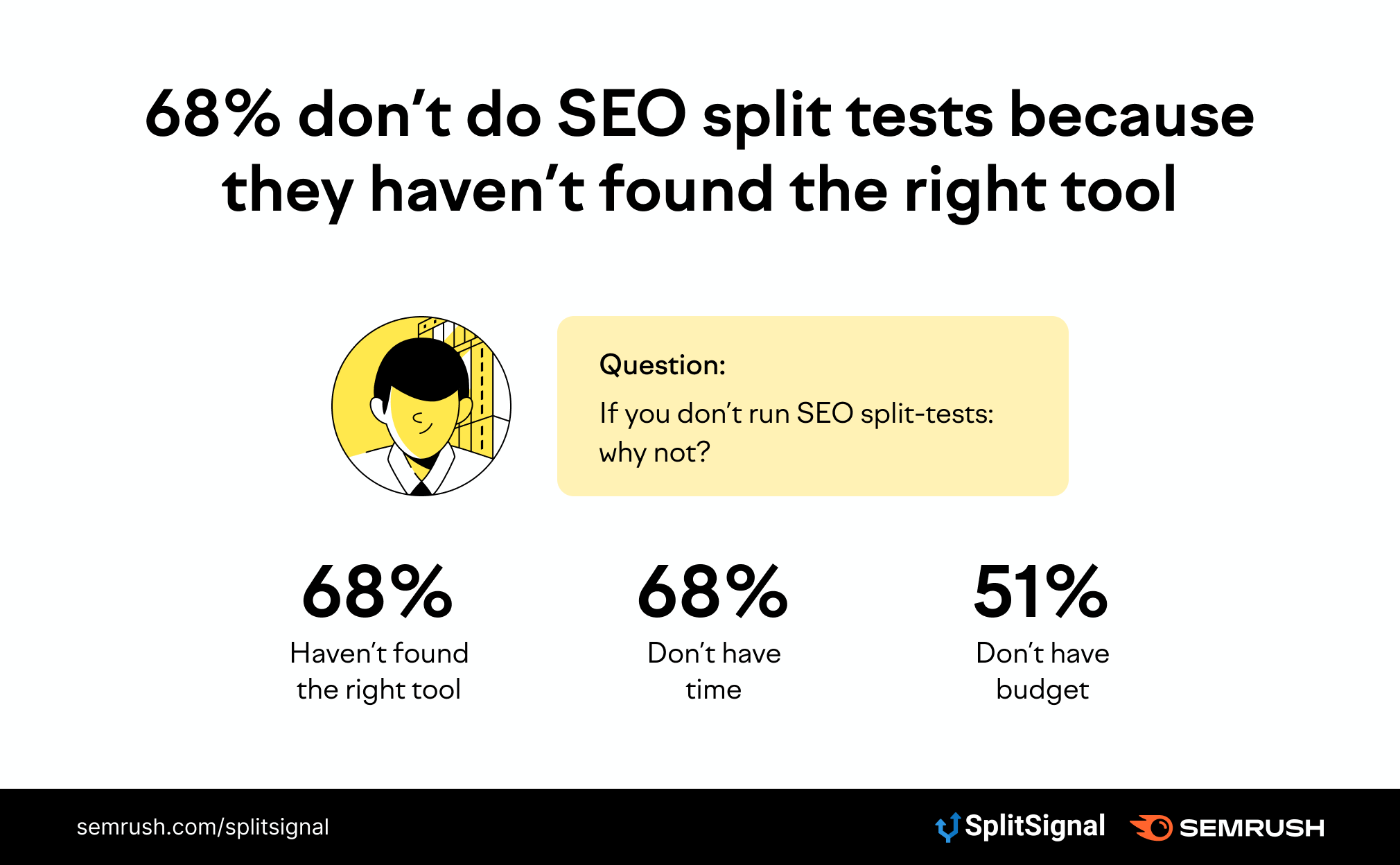

68% of teams that skip SEO split-tests say they haven’t found the right tool

So, why don’t more companies run SEO tests? Some 68% of respondents who don’t run SEO tests say it’s put on the back-burner because they haven’t found the right tool. Interestingly, the same share (68%) say they avoid SEO testing because they can’t find the time to do it.

That’s no surprise; the manual process for SEO testing is lengthy, complex, and expensive. You’ll need to figure out a way to fairly divide pages into the Control and Variant groups, ask your development team to make changes to the Variant group, and calculate when you’ve collected enough data to reach statistical significance. Each step is a challenge in its own right.
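
To give a sense of what “fairly dividing” pages can involve, here is a minimal Python sketch that balances the two groups by traffic. It assumes you have a mapping of URL to organic sessions; the `balanced_split` name and the pairing approach are illustrative choices, not a prescribed method.

```python
import random


def balanced_split(
    sessions_by_url: dict[str, int], seed: int = 42
) -> tuple[list[str], list[str]]:
    """Split pages into (control, variant) with similar traffic profiles.

    Pages are ranked by sessions, then each adjacent pair is split at random,
    so both groups receive one page from every traffic tier.
    """
    rng = random.Random(seed)  # fixed seed keeps the assignment reproducible
    ranked = sorted(sessions_by_url, key=sessions_by_url.get, reverse=True)
    control: list[str] = []
    variant: list[str] = []
    for i in range(0, len(ranked), 2):
        pair = ranked[i : i + 2]
        rng.shuffle(pair)
        control.append(pair[0])
        # With an odd number of pages, the last page has no partner
        # and stays in Control.
        if len(pair) == 2:
            variant.append(pair[1])
    return control, variant
```

Pairing pages with similar traffic before randomizing keeps a handful of high-traffic pages from landing in one group and skewing the comparison.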

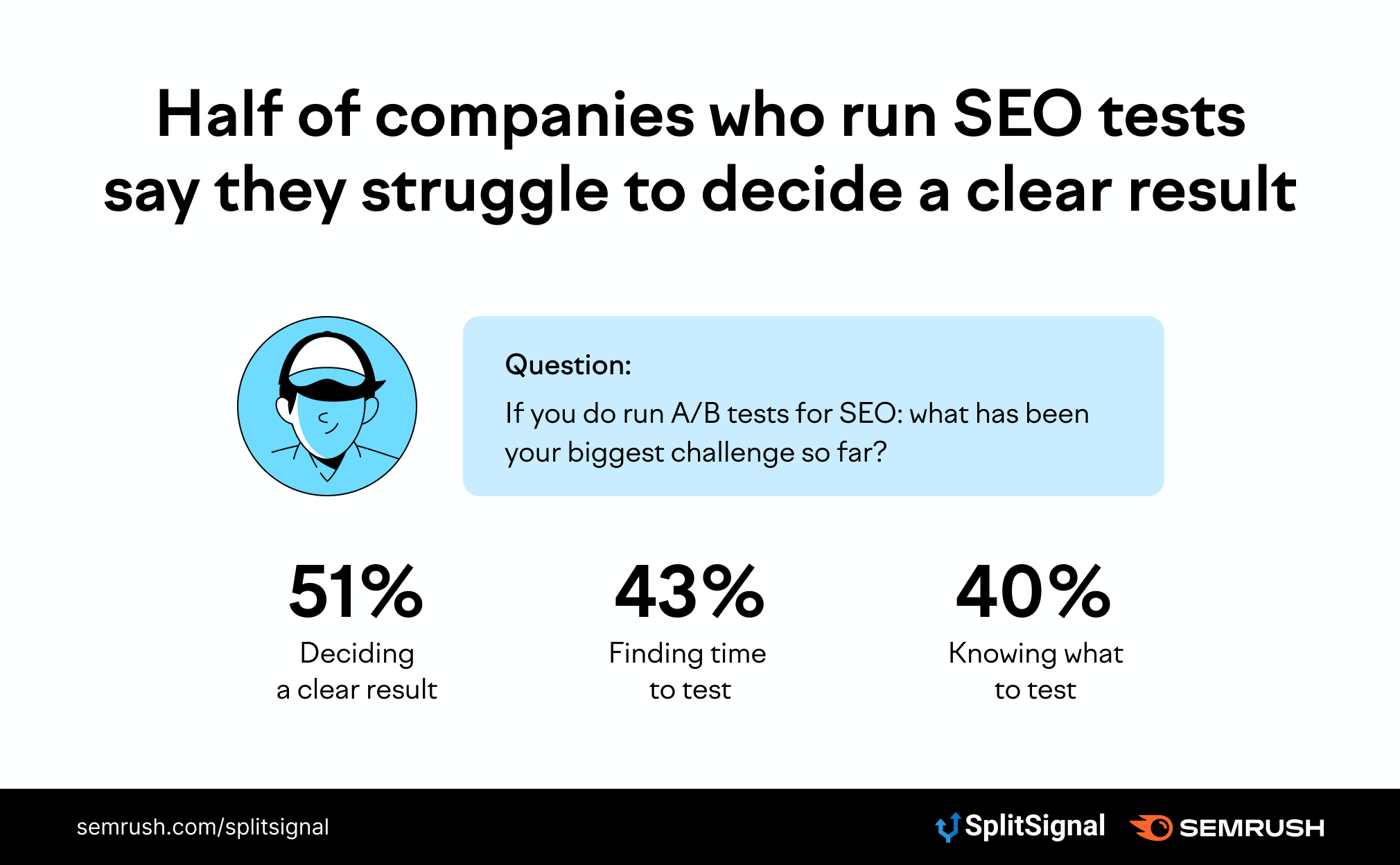

Half of the companies that run SEO tests say they struggle to decide on a clear result

The inability to decide on a clear result is a problem for 51% of respondents. In other words, just over half struggle to decide when a test has reached statistical significance and, therefore, whether their SEO test was successful.
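
For readers who want a feel for what “reaching statistical significance” means in practice, the sketch below runs a simple permutation test on per-page results. It assumes each value is one page’s change in a metric (for example, percent change in clicks) and that pages are independent. The function name is hypothetical, and dedicated tools may rely on more sophisticated models.

```python
import random
from statistics import mean


def permutation_p_value(
    control: list[float],
    variant: list[float],
    n_permutations: int = 10_000,
    seed: int = 0,
) -> float:
    """Two-sided p-value for the difference in group means.

    Each value is one page's metric change (e.g. percent change in clicks).
    """
    rng = random.Random(seed)
    observed = abs(mean(variant) - mean(control))
    pooled = control + variant
    n_variant = len(variant)
    extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        # Relabel pages at random and see how often chance alone matches the gap.
        diff = abs(mean(pooled[:n_variant]) - mean(pooled[n_variant:]))
        if diff >= observed:
            extreme += 1
    # Add-one correction keeps the estimate from ever being exactly zero.
    return (extreme + 1) / (n_permutations + 1)
```

A small p-value suggests the gap between Variant and Control is unlikely to be down to chance. Many teams treat a value below 0.05 as significant, though that threshold is a convention rather than a finding of this survey.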

Other big SEO testing challenges include a lack of time to commit to their experiments (43%) and not knowing what to test (40%).

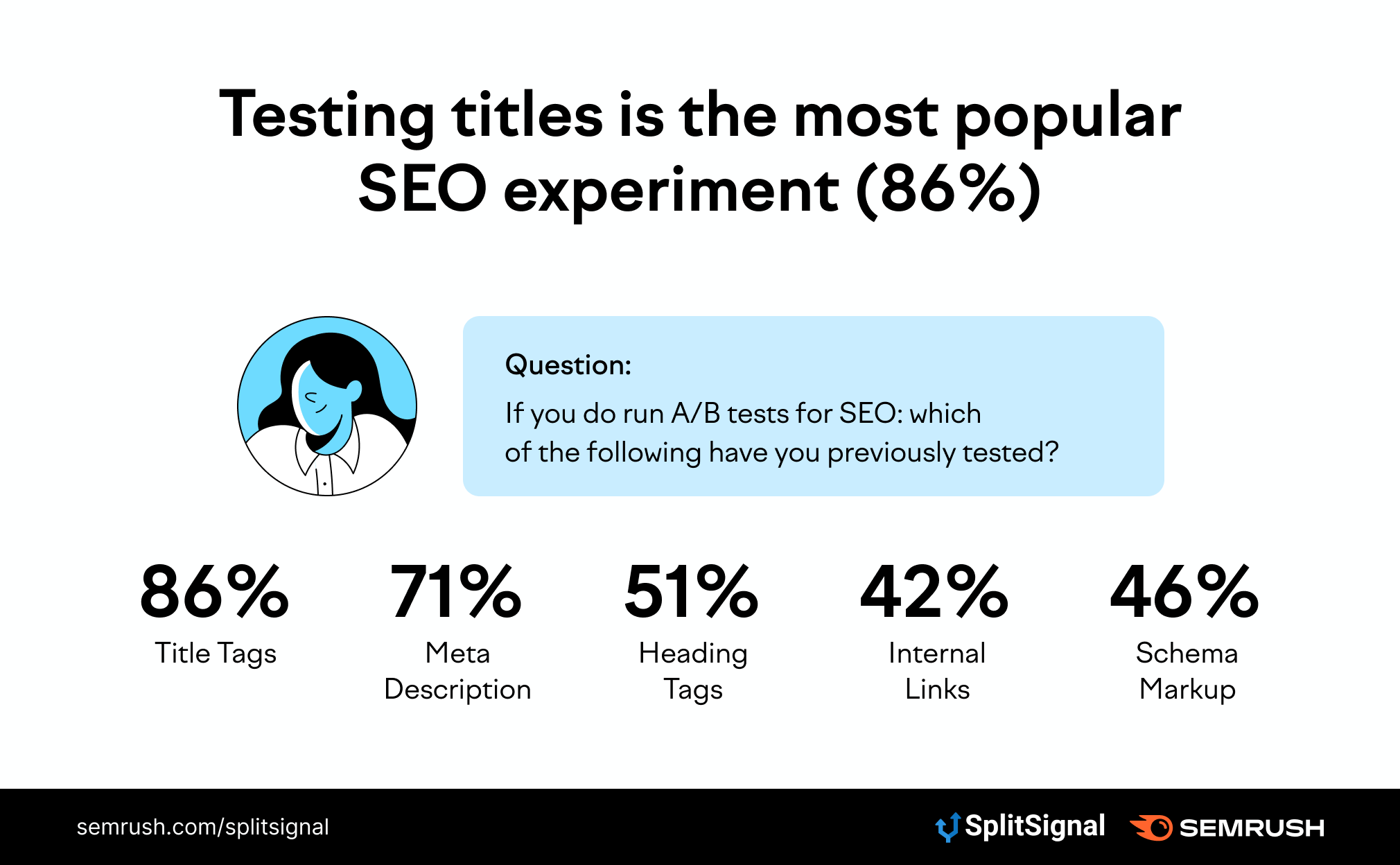

Testing titles is the most popular SEO experiment (86%)

There are tons of SEO experiments to choose from — ranging from a small tweak to a meta title, right the way through to the implementation of Schema markup.

Page titles are the most popular on-page element to test, with 86% of respondents with testing experience saying they’ve tweaked a page title and monitored the result. That’s closely followed by meta descriptions (71%) and heading tags (51%).

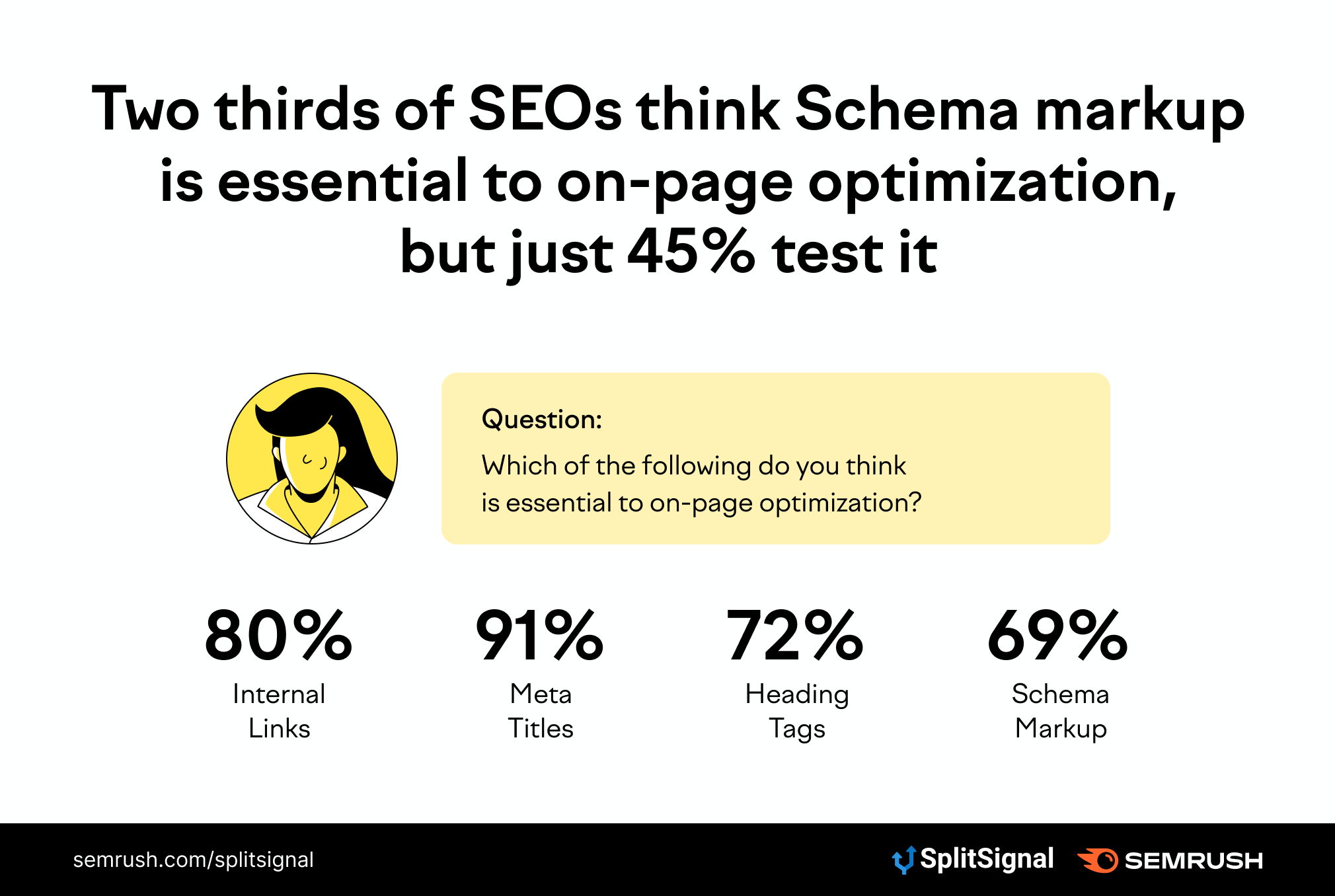

80% of SEOs think internal links are essential to on-page optimization, but just 42% test them

Internal links that point to other pages on your website are clearly important. Four out of five SEOs agree that they’re essential to on-page optimization.

But there’s a contradiction: Despite the general consensus that they’re important, just 42% have tested the SEO impact of removing (or changing) internal links on a templatized page.

Two-thirds of SEOs think Schema markup is essential to on-page optimization, but just 45% test it

Schema markup is another type of on-page optimization that helps search engines understand the data on a page. Search engines often use this information to deliver rich results such as featured snippets, star ratings, and news highlights.

Again, there’s a discrepancy between the importance of Schema and how often SEOs test it. Two-thirds of respondents believe it’s essential to on-page optimization, yet just 45% have tested its impact.

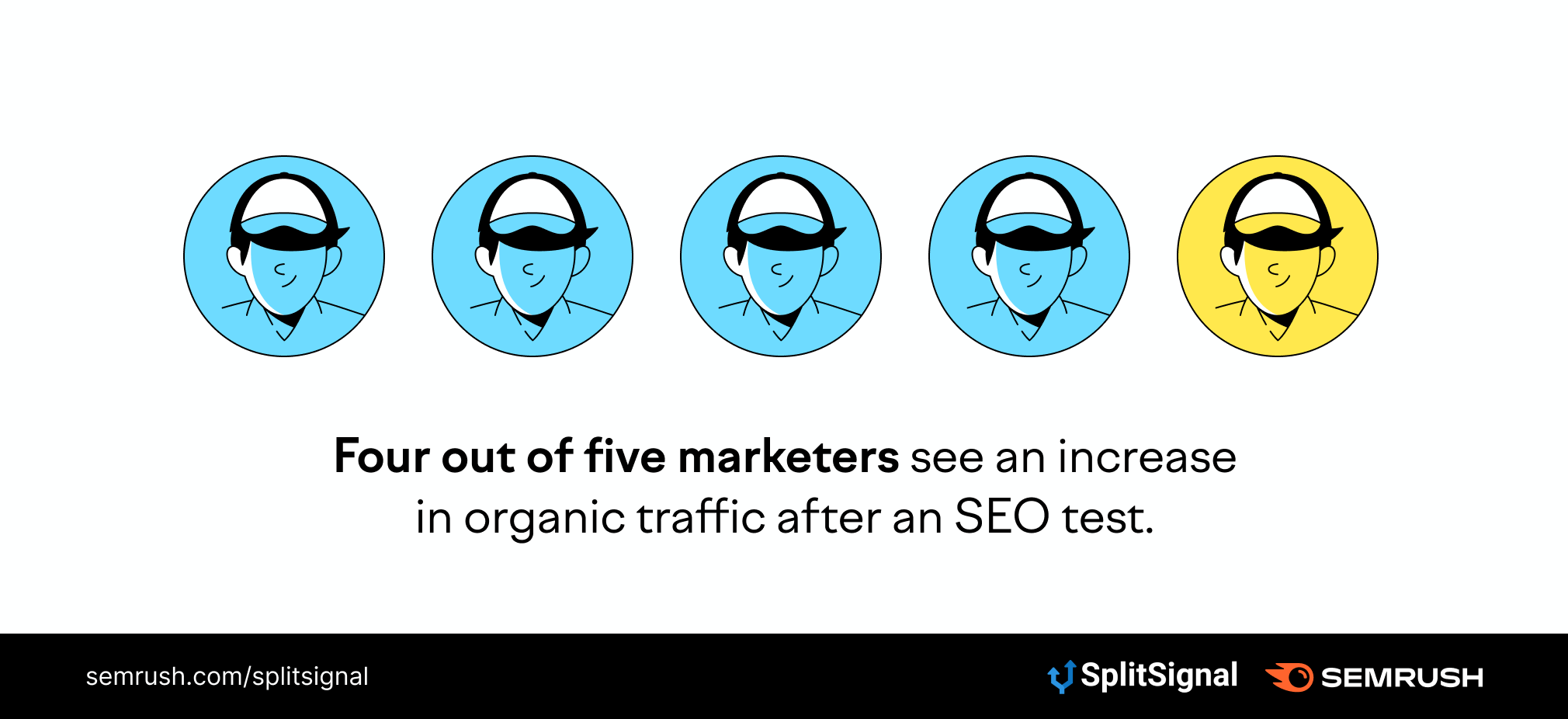

Four out of five marketers see an increase in organic traffic after an SEO test

The 35% of marketers who aren’t testing their SEO strategy are missing out on traffic. Four out of five respondents reported an increase in organic traffic after running an SEO test, and organic traffic is the metric most SEO strategies are built around.

On top of that, 74% reported an increase in organic click-through rate, and almost half (49%) saw an uplift in customers as a direct result of their SEO split-testing.

Ready to run an SEO test?

The benefits of running an SEO test are second to none — and frankly, we were surprised to hear that SEO split-testing falls on the back-burner for most large teams.

Are you one of the SEO departments that avoid testing because you don’t have the right tool?

![The State of Statistical SEO Split-Testing in 2021 [Research]](https://static.semrush.com/blog/uploads/media/4c/b6/4cb6e791cf08ebd1a9bc21009df708ae/state-of-statistical-seo-split-testing.svg)