What is SEO Split-Testing?

Experimentation is at the heart of digital marketing. Whether testing different ad formats or performing CRO with landing page designs, A/B tests allow you to validate large-scale changes and enhance your conversion funnel.

A/B tests are the way to go: by setting up Control and Variant groups, you can estimate the impact of a change before scaling it up. But when it comes to SEO, it’s not as easy as it sounds.

Our State of Statistical SEO Split-Testing found that while the vast majority of marketers believe SEO is “very” or “crucially” important to their business, just 65% actively test their strategy.

In this guide, we’ll cover what SEO split-testing is, the things people often get wrong about SEO testing, and how you can run your own experiments.

- What is SEO split-testing?

- The difference between SEO testing and CRO

- The benefits of running SEO experiments

- Is my website a good fit for running an SEO split-test?

- The different types of SEO tests

- How to run an SEO test

What is SEO Split-Testing?

SEO split-testing (also called SEO A/B testing) is the process of making small changes to a group of pages and monitoring their SEO impact.

It works by splitting pages with similar intent into Control and Variant groups—such as category pages on an ecommerce site, or vendor pages on a marketplace site.

You’ll make changes on the Variant pages, like adding “free” to the meta description, and compare the difference in key SEO metrics between the two groups. The goal is to improve click-through rates, organic traffic, and keyword ranking positions on URLs in the Variant group.

SEO Testing vs. CRO

Conversion Rate Optimization (CRO) has firmly earned its seat at the marketing table. With traditional CRO and user experience tests, you can simply split-test users by creating multiple versions of a page or element and randomly presenting one version to each visitor. Then, you can gather the outcomes and run a simple Chi-Squared test to measure and validate the impact.
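To make the Chi-Squared step concrete, here’s a minimal Python sketch of the test on a two-version CRO experiment. The function name and the visitor numbers are made up for illustration, and it assumes a plain Pearson test without a continuity correction; a real CRO tool may calculate things differently.

```python
import math


def chi_squared_2x2(conv_a, total_a, conv_b, total_b):
    """Pearson chi-squared test (1 degree of freedom, no continuity correction)
    on a 2x2 table of converted / not converted visitors per page version."""
    observed = [
        [conv_a, total_a - conv_a],
        [conv_b, total_b - conv_b],
    ]
    grand_total = total_a + total_b
    row_totals = [total_a, total_b]
    col_totals = [conv_a + conv_b, grand_total - conv_a - conv_b]

    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count if the page version made no difference.
            expected = row_totals[i] * col_totals[j] / grand_total
            chi2 += (observed[i][j] - expected) ** 2 / expected

    # With 1 degree of freedom the upper-tail probability has a closed form.
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Hypothetical data: version A converts 100 of 1,000 visitors, version B 150 of 1,000.
chi2, p_value = chi_squared_2x2(100, 1000, 150, 1000)
print(f"chi2={chi2:.2f}, p={p_value:.4f}")
```

A small p-value (commonly below 0.05) suggests the difference in conversion rate is unlikely to be down to chance alone.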

SEO testing is often lumped in the same bucket. But with SEO, Google’s bots add a new layer of complexity to the equation. You cannot simply present two versions of the same webpage to Google for indexing. That’s defined as “cloaking” and against search engine guidelines.

We have to get a bit more creative with statistical SEO testing. You’re not trying to change user behavior on a page (like getting people to convert). Instead, you’re focusing on Googlebot. You’re not optimizing what the reader sees; you’re optimizing what search engine bots read to decide where a page should rank.

Granted, SEO and CRO work hand-in-hand. The more people you can get to your site through SEO testing, the more traffic your CRO team has to convert. But they’re not the same activity.

Why Should I Split-Test My SEO Strategy?

Now that we know the difference between SEO and CRO testing, you might be questioning whether it’s worth your time. After all, your SEO department is busy, and asking the dev team for their time to run a test? It had better be worth it.

Let’s take a look at the big challenges that SEO split-testing helps to solve.

Prove the Value of SEO to Stakeholders

SEO has a reputation for being difficult to track. Traditionally, it’s also the least measurable marketing channel compared to email, PPC, and social media. It’s why almost a third of SEOs say that proving the value of SEO to stakeholders is their biggest struggle.

Another challenge of SEO is the uncertainty of the outcome of any campaign or test you’ll run. While your hypothesis may seem sound, there’s no guarantee that it is valid.

For example, sitewide changes can have extensive negative consequences. There’s the risk of potentially dropping your visibility and CTRs, setting your business back significantly, and wasting serious time and money.

So, you have to treat SEO like scientific research—using the scientific method to formulate a hypothesis, test it, analyze the results, and make informed decisions from there.

By first running controlled experiments on desired changes, we can transform any potential failures into proven or rejected hypotheses, which we can learn from and use to modify our approach and expectations for future experiments. The result? An ability to prove ROI of SEO efforts as a valuable earned media channel.

Competitive Advantage

Speaking of the ability to showcase results, four out of five marketers see an increase in organic traffic after an SEO test. Yet our survey found that just 65% of companies with 100+ employees are actively testing their SEO strategies.

There are various reasons for SEO testing falling on the back burner, including:

- Not having the right tool (68%)

- No time to commit to SEO testing (68%)

- No budget allocated for SEO tests (51%)

Running SEO tests gives you an advantage over those companies. You can quickly find the tweaks that help you overtake them in SERPs.

But while it’s great to have positive results from your statistical SEO tests, it’s not just positive tests that matter. The consistent testing process is what helps you to grow traffic. You can find out which tweaks tank organic traffic on your Variant pages before rolling them out sitewide.

SEO is Unpredictable

SEO can be tricky. In order to maintain and improve its dominance in the search engine market, Google is constantly tweaking its algorithm—making the art of SEO a game of constant adjustments.

There were several core updates in the past year that generated significant buzz in the industry. Still, the reality is that Google makes, on average, multiple changes to its search algorithm every day (totaling in the thousands each year).

This dynamic nature of search makes your pursuit of higher SERP visibility and click-through rates that much more difficult. The strategies you’re using now might not work in a year’s time. Every search market and every domain is unique.

Therefore, testing different meta-descriptions, title formats, structured data, and page layouts (just to name a few) is necessary to uncover your recipe for optimization.

Not only that, but SEO testing helps you stay ahead of the curve. You’ll create a strategy that proactively goes after stronger ROI, rather than stressfully reacting to algorithm changes.

Is My Website a Good Fit to Run an SEO Split-Test?

Ready to run your first SEO test? Before you start creating your first (or next!) experiment, it’s crucial to make sure that your website is large enough to run an experiment.

Running an SEO test on a small website without much organic traffic may produce unreliable results. It’ll be almost impossible to isolate the results of your test from external factors, like fluctuations in search demand, that cause Control data to vary.

Generally speaking, sites running an SEO test should have at least 300 templatized pages—such as category or product pages, blog pages, or vendor pages.

Together, those templatized pages should drive a minimum of 30k monthly organic sessions (per test). But the more traffic, the better. You get more opportunities to run reliable tests with data that isn’t dramatically impacted by external factors.

The Different Types of SEO Testing

There are two different ways to run an SEO test.

Time-Based

A time-based SEO test does what the name suggests. It happens when you make changes to a group of pages and allow a specific period of time to pass before monitoring the impact.

With this type of testing, you don’t need to divide pages into Control and Variant groups. Organic traffic data from a control period pre-test is compared to traffic after the time elapses.
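As a rough illustration, the comparison behind a time-based test can be sketched in a few lines of Python. The function name and the daily session counts are hypothetical; the sketch simply compares average daily organic sessions before and after the change.

```python
from statistics import mean


def pre_post_change(pre_sessions, post_sessions):
    """Percent change in average daily organic sessions, after vs. before."""
    pre_avg = mean(pre_sessions)
    post_avg = mean(post_sessions)
    return (post_avg - pre_avg) / pre_avg * 100


# Hypothetical daily organic sessions for the tested pages.
pre = [1000, 1100, 900, 1000]    # control period before the change
post = [1100, 1200, 1000, 1100]  # same pages after the change

change = pre_post_change(pre, post)
print(f"Organic sessions changed by {change:.1f}%")
```

Keep in mind that this number also absorbs anything else that happened between the two periods, such as seasonality or an algorithm update.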

A/B Split Test

A statistical SEO split-test happens when you divide pages with similar intent into Control and Variant groups, and monitor the differences between each. Unlike time-based testing, you don’t have to wait a specific period of time before analyzing the results. You can usually draw conclusions from the data within 14 to 42 days.

How Does SEO Split-Testing Work?

Are you ready to run your first experiment? Here are three simple steps to running a statistical SEO test.

1. Choose Elements to Test

The first stage in any experiment is to choose which SEO elements you’ll test. You don’t always need a concrete theory behind your choice. Sometimes, testing can be used to see whether a hunch is right.

Some options include:

- Removing a brand name from the title tag

- Adding "free shipping" to the meta description

- Implementing review Schema markup on a landing page

- Using keywords in an image’s alt text

- Shortening breadcrumbs for ecommerce product pages

If you’re not convinced that these small changes will generate meaningful results, consider this: One online liquor retailer removed the last part of the breadcrumb in an SEO test. Over the 21-day experiment, the Variant pages saw an 8.7% increase in organic traffic compared to the unchanged Control group.

2. Divide Pages and Run the SEO Test

The next stage is to divide your templatized pages into Control and Variant groups. Implement the change on your Variant pages using client-side or server-side code:

- Server-Side: The change is built into the page itself before it’s sent to the browser. It gives a better user experience because there is no flickering, but it’s much more complex and invasive. You’ll need to rely on already-busy development teams to implement the changes.

- Client-Side: The change is applied in the browser using JavaScript code. There can be a slight flicker between the old and new version of a page, but it is the easiest type of SEO testing. The code is easy to install and remove, nothing needs hardwiring, and there’s no need for external help from developers.

You can do this manually or using SEO testing software.

Manual SEO Testing

Before we get into the trenches of running an SEO test, there are some things you need to know upfront—particularly that you’ll have a heavy reliance on development and data science teams.

Depending on the test you’re running, you’ll need dev input to install and remove the code on each page. You’ll also need data science teams to divide pages and analyze the data.

Got those two resources down? The first stage in running a manual SEO A/B test is to divide your pages. Use historical data to identify the types of templatized pages you want to test (with your data science team’s support).
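One way to divide pages using historical data is sketched below in Python. It’s a simplified assumption rather than the method any particular tool uses: pages are ranked by past organic sessions, then each neighbouring pair is split at random so both groups end up with similar traffic. The URLs and session counts are made up.

```python
import random


def split_pages(historical_sessions, seed=42):
    """Split pages into Control and Variant groups with similar traffic.

    historical_sessions: dict mapping URL -> organic sessions in a past period.
    """
    rng = random.Random(seed)  # fixed seed keeps the split reproducible
    # Rank pages by traffic so neighbours in the list are comparable.
    ranked = sorted(historical_sessions, key=historical_sessions.get, reverse=True)
    control, variant = [], []
    # Walk the ranking two pages at a time and flip a coin within each pair.
    for i in range(0, len(ranked), 2):
        pair = ranked[i:i + 2]
        rng.shuffle(pair)
        control.append(pair[0])
        if len(pair) == 2:
            variant.append(pair[1])
    return control, variant


# Hypothetical category pages and their sessions over the last 90 days.
pages = {
    "/category/whisky": 9000,
    "/category/gin": 8500,
    "/category/rum": 4000,
    "/category/vodka": 3900,
    "/category/tequila": 1200,
    "/category/brandy": 1100,
}
control, variant = split_pages(pages)
print("Control:", control)
print("Variant:", variant)
```

With an odd number of pages, the leftover page lands in the Control group.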

Next, ask your dev or engineering team to create the code that changes the element on the Variant pages.
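For a sense of what that code might look like, here’s a minimal server-side sketch in Python for one of the test ideas above: removing the brand name from the title tag. The function name, the brand suffix, and the URLs are all hypothetical, and your own templates will almost certainly work differently.

```python
def title_for_page(url, original_title, variant_urls, brand_suffix=" | Example Store"):
    """Return the title tag text to render for a page.

    Variant pages get the brand suffix removed; Control pages stay unchanged.
    """
    if (
        url in variant_urls
        and brand_suffix
        and original_title.endswith(brand_suffix)
    ):
        return original_title[: -len(brand_suffix)]
    return original_title


# Hypothetical Variant group for the test.
variant_urls = {"/category/gin", "/category/rum"}

print(title_for_page("/category/gin", "Buy Gin Online | Example Store", variant_urls))
print(title_for_page("/category/whisky", "Buy Whisky Online | Example Store", variant_urls))
```

Keeping the Variant URLs in one place makes the change easy to remove once the test ends.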

Finally, give the test enough time to produce data you can draw conclusions from: 3 to 4 weeks if you’re using the CausalImpact model, or 4 to 6 months if you’re using another analysis method.

Read more here: Using Google Search Console to Better Test SEO Changes

SEO Testing Software

Not all SEO tests have to be created from scratch. There are several testing tools you can use to run SEO experiments, each dividing your pages into Control and Variant groups, implementing changes, and measuring results.

The right one for your business depends on the size of your site, model of measurement, and element you’re testing through client- or server-side code.

Running an SEO test using SplitSignal is the best choice for large websites with high volumes of organic traffic. It will split pages into Control and Variant groups using historical data. And it uses client-side testing so SEOs can take control of their tests without relying on busy dev teams. The JavaScript code can be created, uploaded, and ready to test within minutes.

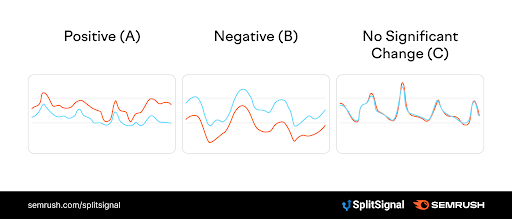

SplitSignal also interprets data on your behalf. No confused looks when comparing endless rows of data—the tool will compare results between the two groups and clearly state whether it was a positive or negative test.

3. Measure Results

The final stage in running SEO tests is to measure the results. Despite it being the most important step, it’s the biggest challenge; 51% of SEOs don’t know how to decide on a clear result for their SEO experiments.

You can use various models to determine the results of an SEO experiment:

- Pre/Post Analysis: If you’re running a manual SEO test, you’ll likely be using the pre/post model. While it’s definitely better than nothing and helps you to validate your SEO decisions, it does have issues. First, the margin of error is much higher because you don’t have a Control group. There’s also a higher chance of a false positive or false negative result, leading you to base your SEO strategy on inaccurate data.

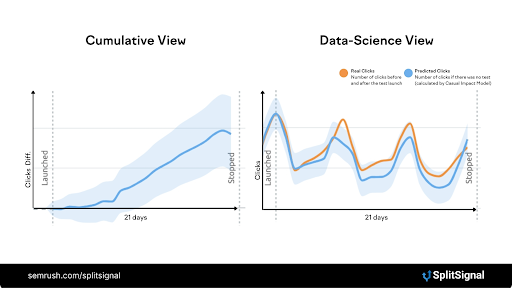

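- CausalImpact: This is what SplitSignal uses. It uses traffic from the Control group to model what the Variant group’s traffic would have looked like without the change, then compares that prediction against the Variant group’s real traffic. If the difference is big enough, the test is statistically significant.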
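To show why a Control group helps, here’s a simplified Python sketch of a difference-in-differences estimate. This is not the CausalImpact model and it doesn’t report statistical significance; it only illustrates the idea of using the Control trend as a baseline for the Variant pages. The function name and all the numbers are hypothetical.

```python
from statistics import mean


def diff_in_diff(control_pre, control_post, variant_pre, variant_post):
    """Estimate Variant uplift (%) relative to what the Control trend predicts."""
    # How much Control traffic moved on its own (seasonality, updates, etc.).
    control_ratio = mean(control_post) / mean(control_pre)
    # Counterfactual: Variant traffic if it had simply followed the Control trend.
    expected_variant_post = mean(variant_pre) * control_ratio
    return (mean(variant_post) - expected_variant_post) / expected_variant_post * 100


# Hypothetical daily organic sessions per group.
control_pre, control_post = [1000, 1000], [1100, 1100]  # Control grew with the market
variant_pre, variant_post = [2000, 2000], [2420, 2420]  # Variant grew faster

uplift = diff_in_diff(control_pre, control_post, variant_pre, variant_post)
print(f"Estimated uplift from the change: {uplift:.1f}%")
```

In this made-up example, a plain pre/post comparison would credit the change with the Variant group’s full growth, while the Control-adjusted estimate removes the part the market delivered anyway.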

Run a Statistical SEO Test With SplitSignal

Changes to Google’s ranking algorithm are inevitable, and there’s no secret recipe for increasing organic traffic.

This is why experimentation is a necessary element of SEO. By running experiments before making a site-wide change, you can avoid a potentially damaging drop in traffic and clicks while also testing more variations.

Unsuccessful tests are also fine. In fact, they’re at the core of the scientific method. A rejected hypothesis isn’t a failure; it’s a conclusion—something we can learn from and utilize to construct future iterations. However, without an easy way to streamline this experimentation, a lot of time and resources can be wasted.

With SplitSignal, you can streamline experimentation on your site, mitigate the potential risks of large-scale changes, and uncover some major wins for your organic traffic and visibility.

How much has a fear of wasted resources stopped you from taking risks with your marketing strategy? How do you justify the investment in resource-heavy changes to your site with the uncertainty of their impact?

With SplitSignal, you can let your creativity flow without any anxiety and take your SEO to the next level. Don’t let loss aversion limit your brand’s marketing potential.

Ready to start testing? Start a pilot with SplitSignal today.