Why are only a few of my website’s pages being crawled?

If you’ve noticed that only 4-6 pages of your website are being crawled (your home page, sitemaps URLs and robots.txt), most likely this is because our bot couldn’t find outgoing internal links on your Homepage. Below you will find possible reasons for this issue.

There might be no outgoing internal links on the main page, or they might be wrapped in JavaScript. If you have a Pro subscription, our bot won’t parse JavaScript content, so if your homepage has links to the rest of your site hidden in JavaScript elements, we will not read them and crawl those pages.

Although crawling JavaScript content is only available for Guru and Business users, we can crawl the HTML of a page with JS elements, and we can review the parameters of your JS and CSS files with our Performance checks regardless of your subscription type (Pro, Guru, or Business).

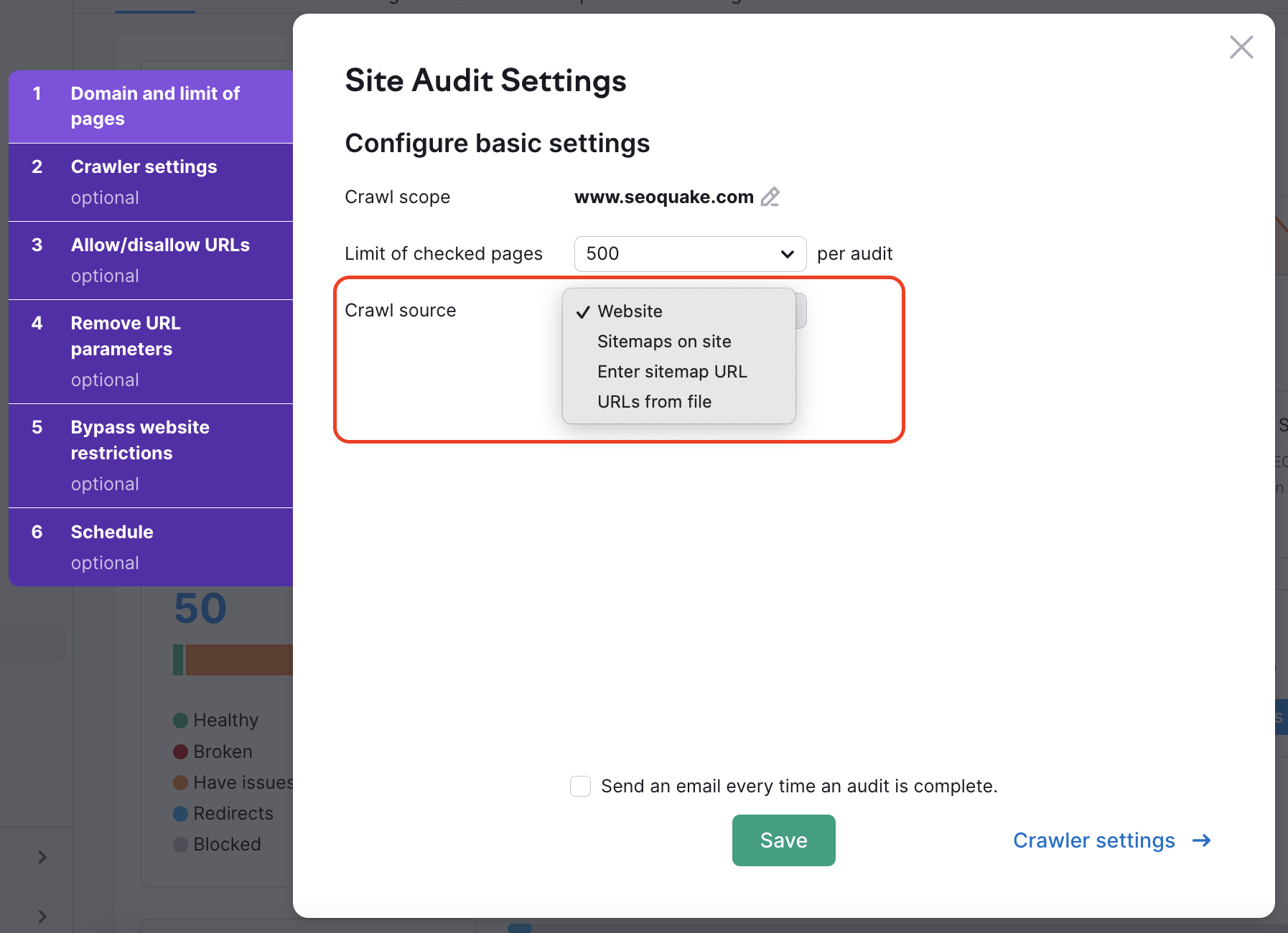

In both cases, there is a way to ensure that our bot will crawl your pages. To do this, you need to change the crawl source from “website” to “sitemap” or “URLs from file” in your campaign settings:

“Website” is a default source. It means we will crawl your website using a breadth-first search algorithm and navigate through the links we see on your page’s code—starting from the homepage.

If you choose one of the other options, we will crawl links that are found in the sitemap or in the file you upload.

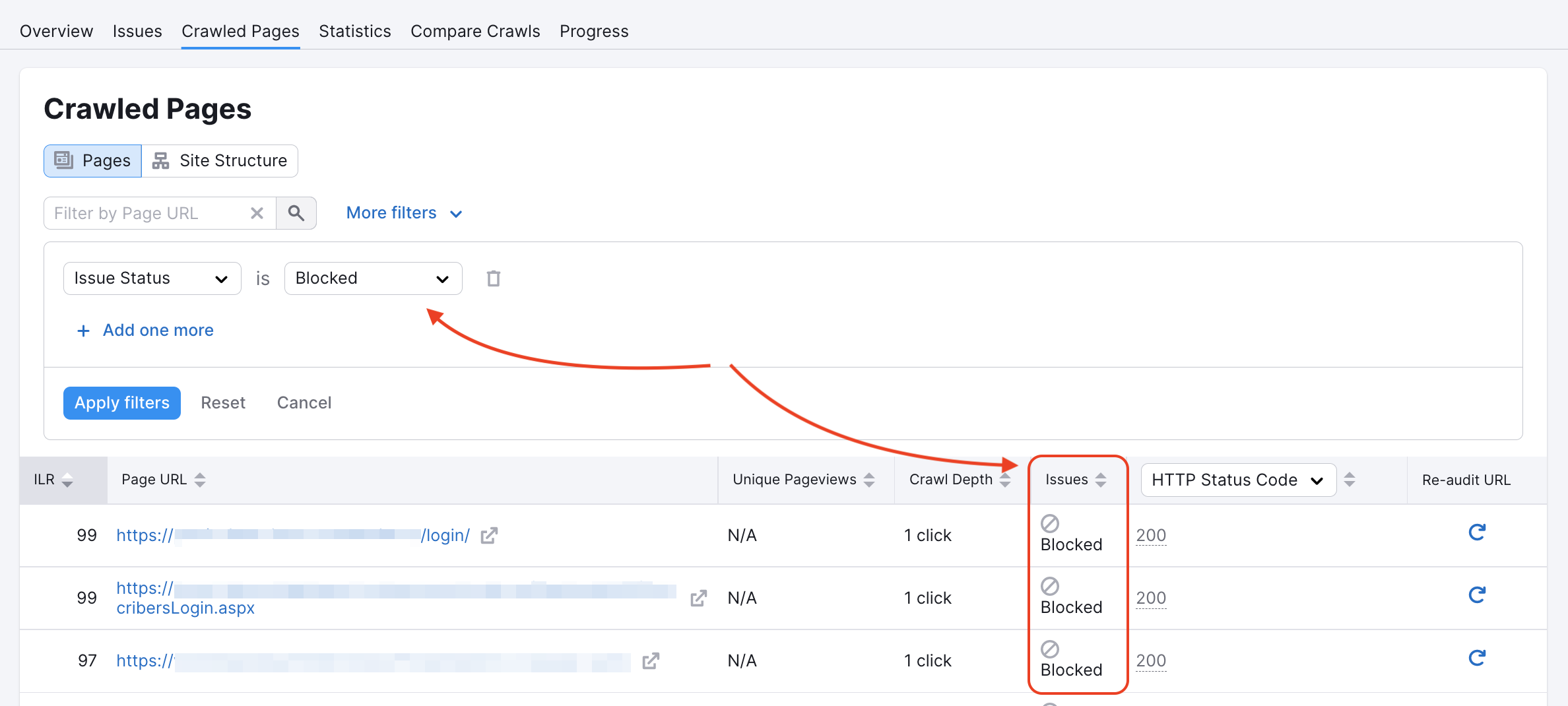

Our crawler could have been blocked on some pages in the website’s robots.txt or by noindex/nofollow tags. You can check if this is the case in your Crawled pages report:

You can inspect your Robots.txt for any disallow commands that would prevent crawlers like ours from accessing your website.

If you see the code shown below on the main page of a website, it tells us that we’re not allowed to index/follow links on it and our access is blocked. Or, a page containing at least one of the two: "nofollow", "none", will lead to a crawling error.

<meta name="robots" content="noindex, nofollow">

You will find more information about these errors in our troubleshooting article.

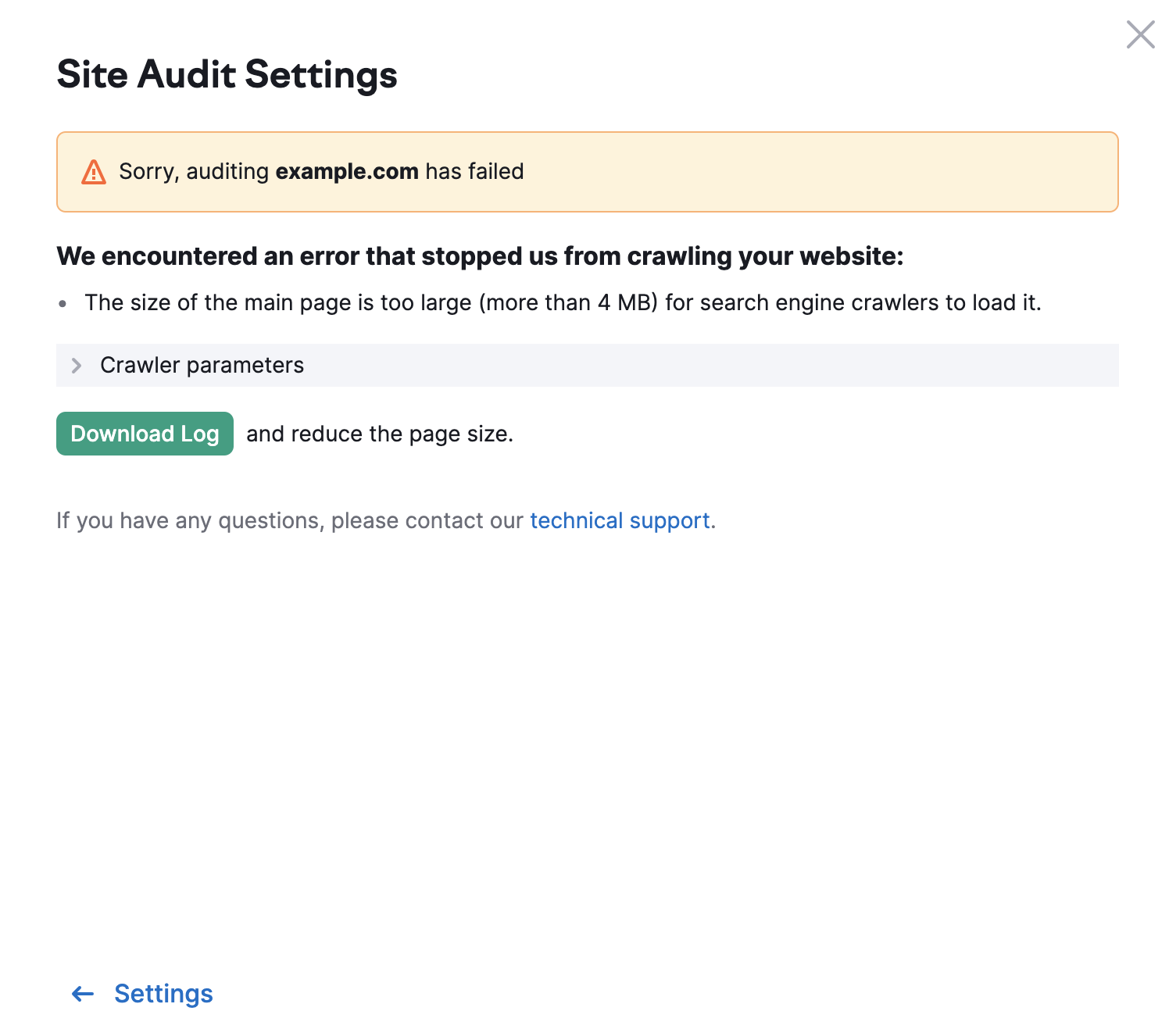

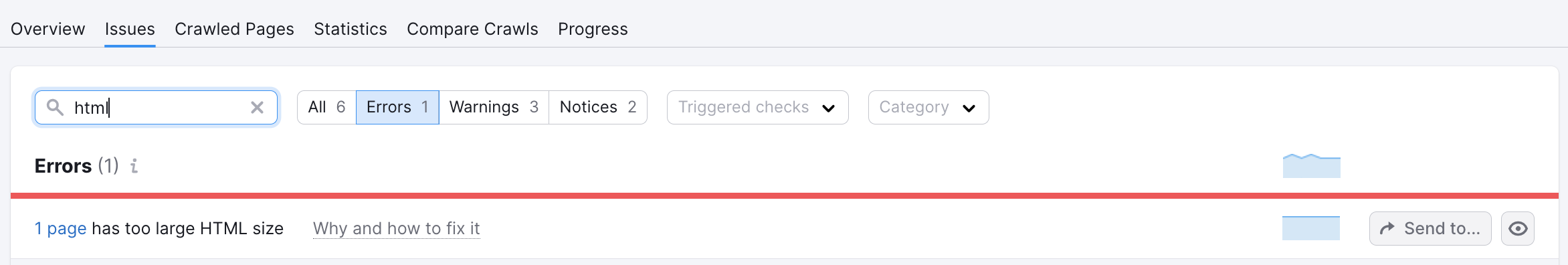

The limit for other pages of your website is 2MB. In case a page has too large HTML size, you will see the following error:

- What Issues Can Site Audit Identify?

- How many pages can I crawl in a Site Audit?

- How long does it take to crawl a website? It appears that my audit is stuck.

- How do I audit a subdomain?

- Can I manage the automatic Site Audit re-run schedule?

- Can I set up a custom re-crawl schedule?

- How is Site Health Score calculated in the Site Audit tool?

- How Does Site Audit Select Pages to Analyze for Core Web Vitals?

- How do you collect data to measure Core Web Vitals in Site Audit?

- Why is there a difference between GSC and Semrush Core Web Vitals data?

- Why are only a few of my website’s pages being crawled?

- Why do working pages on my website appear as broken?

- Why can’t I find URLs from the Audit report on my website?

- Why does Semrush say I have duplicate content?

- Why does Semrush say I have an incorrect certificate?

- What are unoptimized anchors and how does Site Audit identify them?

- What do the Structured Data Markup Items in Site Audit Mean?

- Can I stop a current Site Audit crawl?

- Using JS Impact Report to Review a Page

- Configuring Site Audit

- Troubleshooting Site Audit

- Site Audit Overview Report

- Site Audit Thematic Reports

- Reviewing Your Site Audit Issues

- Site Audit Crawled Pages Report

- Site Audit Statistics

- Compare Crawls and Progress

- Exporting Site Audit Results

- How to Optimize your Site Audit Crawl Speed

- How To Integrate Site Audit with Zapier

- JS Impact Report